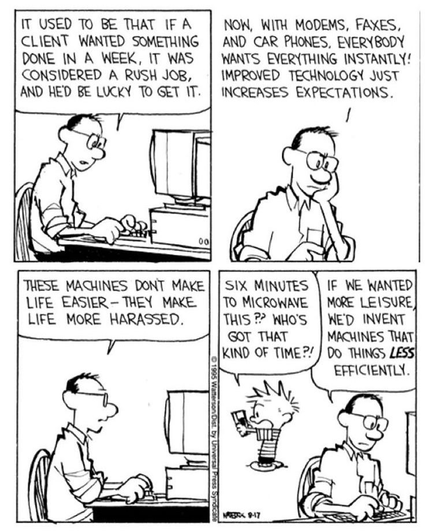

When I was studying CS (and music) I took one single philosophy class, in Ethics. But it was offered by the philosophy department to philosophy majors, so it wasn't what I think most people mean when they say programmers should study ethics.

We had two class meetings per week. In the first class meeting, the professor would tell us about a system of ethics. Who came up with it and why. How it solved problems. And we could ask questions about what seemed to be shortcomings and he would give us the answers developed by people working on that system. It was finally the answer to all of our conundrums.

Then in the second session, he would tear it to shreds. He would raise a problem with it, maybe a problem we had raised, and show how the answer given was actually a tautology or logically confused or wrong in some other way. This system did not solve ethics and was in fact an incoherent mess!

The last week of the term, he got into the system popular now with tech oligarchs. They do actually have a system of ethics! (Which I don't recall the name of.) And boy, was it obviously a mess of scientific racism.

All during the term, I would get excited during the intro session and try to find holes. But this one was so obviously going to be eviscerated on Thursday, I didn't even try to point out how it was full of shit. I was looking forward to the coming destruction.

Thursday was the course review for the paper or exam or whatever. He let the last one stand.

At the time I thought he might actually be endorsing it and was upset. Later, I thought maybe it was because the system was current rather than historical, so counterarguments hadn't solidified.

Only much later did I realise that he had given us the tools to rip it apart ourselves. Indeed, it was the weakest and most poorly constructed of all the systems and we were certainly up to tearing it down.

So when I say CS students should take ethics, I mean they should take a class like that, where they aren't left with a perfect framework to apply, but with the tools to critique frameworks they encounter. They need to be able to spot bullshit. Right now, they are way too credulous of bullshit.

Edit: Effective altruism didn't exist yet. It was the racism stuff left as an exercise.
