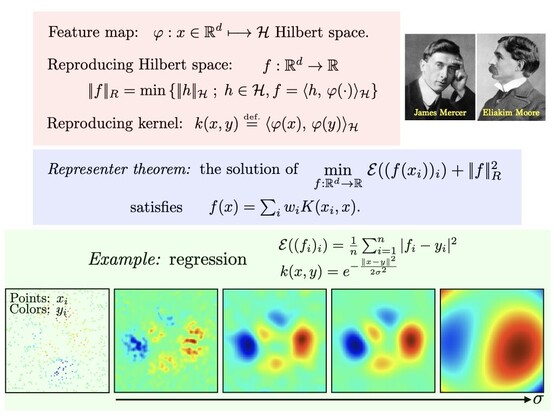

Reproducing kernel Hilbert spaces (RKHS) define norms on functions such that the solutions of regularized fitting problems are linear combinations of kernel functions. They underlie non-parametric learning methods, whose model complexity scales with the number of training inputs. https://en.wikipedia.org/wiki/Reproducing_kernel_Hilbert_space
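
As a rough illustration of the point above, here is a minimal kernel ridge regression sketch in NumPy. The Gaussian kernel, the squared loss, and the names `gaussian_kernel`, `fit_kernel_ridge`, `predict`, `lam` and `bandwidth` are all assumptions chosen for the example, not something the post specifies. The fitted function is a weighted sum of kernel functions centred on the training points, so there is one coefficient per training input.

```python
import numpy as np


def gaussian_kernel(A, B, bandwidth=1.0):
    """Gaussian (RBF) kernel matrix between the rows of A and the rows of B."""
    sq_dists = (
        np.sum(A**2, axis=1)[:, None]
        + np.sum(B**2, axis=1)[None, :]
        - 2.0 * A @ B.T
    )
    sq_dists = np.maximum(sq_dists, 0.0)  # guard against tiny negative round-off
    return np.exp(-sq_dists / (2.0 * bandwidth**2))


def fit_kernel_ridge(X, y, lam=1e-4, bandwidth=1.0):
    """Minimise (1/n) * sum_i (f(x_i) - y_i)^2 + lam * ||f||_H^2 over the RKHS.

    The minimiser has the form f(x) = sum_i alpha_i * k(x_i, x), with
    alpha = (K + lam * n * I)^{-1} y.
    """
    n = X.shape[0]
    K = gaussian_kernel(X, X, bandwidth)
    return np.linalg.solve(K + lam * n * np.eye(n), y)


def predict(X_train, alpha, X_new, bandwidth=1.0):
    """Evaluate f(x) = sum_i alpha_i * k(x_i, x) at each row of X_new."""
    return gaussian_kernel(X_new, X_train, bandwidth) @ alpha


# Toy usage: fit sin(x) from 20 samples (assumed data, for illustration only).
X_train = np.linspace(0.0, 2.0 * np.pi, 20)[:, None]
y_train = np.sin(X_train[:, 0])
alpha = fit_kernel_ridge(X_train, y_train)
y_hat = predict(X_train, alpha, np.array([[1.0], [2.5]]))
```

The coefficient vector `alpha` has the same length as the training set, which is the sense in which the model grows with the data.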

RT @marc_lelarge

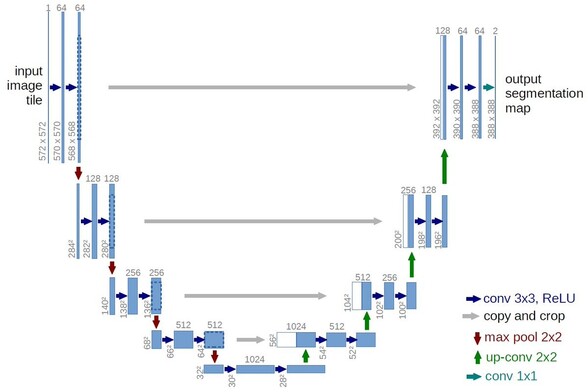

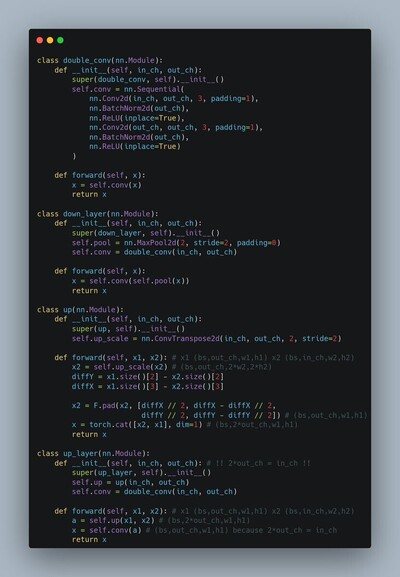

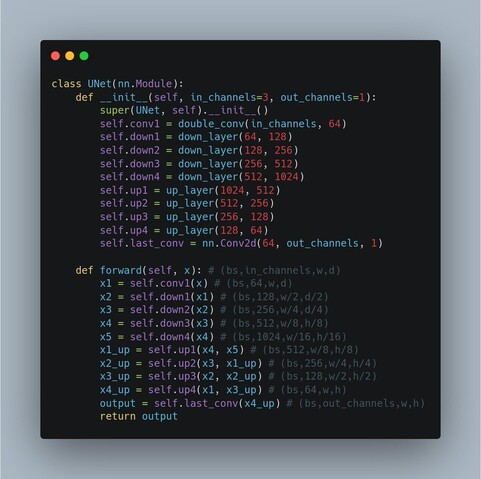

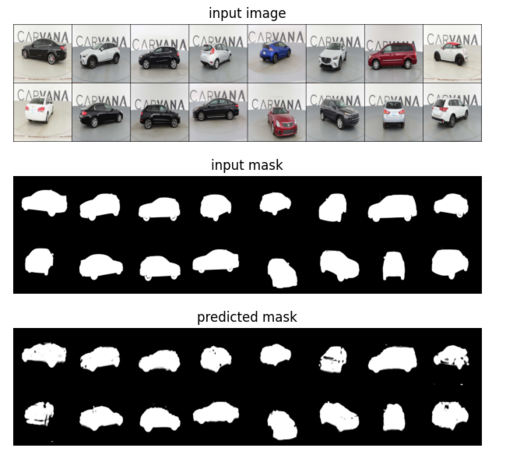

𝗨-𝗡𝗲𝘁, a convolutional neural network first developed for image segmentation, is now a building block for 𝗦𝘁𝗮𝗯𝗹𝗲 𝗗𝗶𝗳𝗳𝘂𝘀𝗶𝗼𝗻.

Here is a simple implementation with max-pooling for down layers and transposed convolutions for up layers (notebook link in alt-text)
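
The implementation referred to above lives in the linked notebook and is not reproduced here. As a stand-in, the following NumPy sketch shows only the shape logic of one U-Net level under simplifying assumptions: 2x2 max-pooling for the down step, a kernel-2, stride-2 transposed convolution for the up step, and a channel-wise skip concatenation. The function names are hypothetical, and the ordinary convolutions and nonlinearities of a real U-Net are deliberately left out.

```python
import numpy as np


def max_pool_2x2(x):
    """Down step: 2x2 max-pooling on a (C, H, W) array with even H and W."""
    c, h, w = x.shape
    return x.reshape(c, h // 2, 2, w // 2, 2).max(axis=(2, 4))


def conv_transpose_2x2(x, weight):
    """Up step: transposed convolution with kernel 2 and stride 2.

    x has shape (C_in, H, W); weight has shape (C_in, C_out, 2, 2).
    Each input pixel is expanded into a non-overlapping 2x2 output patch,
    so the result has shape (C_out, 2H, 2W).
    """
    c_in, h, w = x.shape
    c_out = weight.shape[1]
    patches = np.einsum("chw,cokl->ohkwl", x, weight)
    return patches.reshape(c_out, 2 * h, 2 * w)


def unet_level(x, up_weight):
    """One down/up round trip with a skip connection (no convolutions)."""
    skip = x                                  # kept at full resolution
    down = max_pool_2x2(x)                    # halves H and W
    up = conv_transpose_2x2(down, up_weight)  # doubles H and W again
    return np.concatenate([skip, up], axis=0)  # stack along channels


# Toy usage with an assumed 1-channel 4x4 input and an all-ones up kernel.
x = np.arange(16, dtype=float).reshape(1, 4, 4)
up_weight = np.ones((1, 1, 2, 2))
out = unet_level(x, up_weight)
```

The skip connection is what lets the up path recover the spatial detail that pooling discards.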

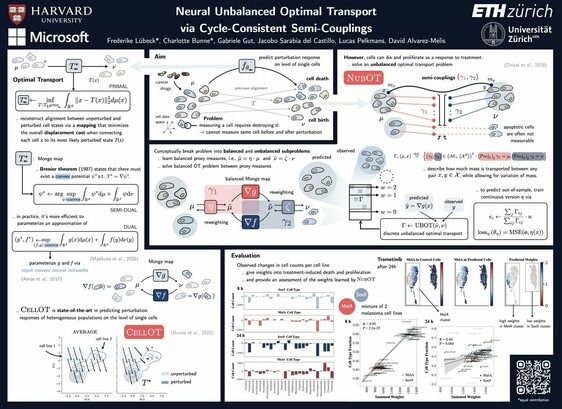

RT @_bunnech

Excited about neural #optimaltransport methods for modeling #singlecell perturbation responses but wondering how to integrate cell birth and death? @FrederikeLubeck has the answer for you in the #AI4science workshop @NeuripsConf! Join us in rooms 388 - 390.

RT @LenaicChizat

For smooth convex optim on the n-simplex, mirror descent achieves O(log(n)/t) convergence rate while gradient descent (ISTA) achieves O(n/t) (same rates).

What about n=∞? Say on the space of probability distributions on a d-manifold ?

→ Answer in https://arxiv.org/abs/2105.08368
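
For the finite n-simplex case mentioned above, the two update rules can be sketched side by side. This is a toy illustration under assumptions that are not from the post: the objective is f(x) = 0.5 * ||x - target||^2, the step size is fixed at 0.5, and the function names are hypothetical. Mirror descent with the entropy geometry gives a multiplicative update, while projected gradient descent gives an additive update followed by a Euclidean projection. The sketch does not attempt to reproduce the quoted rates.

```python
import numpy as np


def project_simplex(v):
    """Euclidean projection of v onto the probability simplex (sort-based)."""
    u = np.sort(v)[::-1]
    css = np.cumsum(u) - 1.0
    ks = np.arange(1, len(v) + 1)
    rho = np.nonzero(u - css / ks > 0)[0][-1]
    tau = css[rho] / (rho + 1)
    return np.maximum(v - tau, 0.0)


def mirror_descent_step(x, grad, step):
    """Entropic mirror descent: multiplicative update, then renormalise."""
    w = x * np.exp(-step * grad)
    return w / w.sum()


def projected_gradient_step(x, grad, step):
    """Projected gradient descent: additive update, then project."""
    return project_simplex(x - step * grad)


def minimise(step_fn, target, n_iters=200, step=0.5):
    """Minimise 0.5 * ||x - target||^2 over the simplex from the uniform point."""
    n = len(target)
    x = np.full(n, 1.0 / n)
    for _ in range(n_iters):
        grad = x - target  # gradient of the assumed quadratic objective
        x = step_fn(x, grad, step)
    return x


# Toy usage: the minimiser is `target` itself, since it lies in the simplex.
target = np.array([0.5, 0.3, 0.2])
x_md = minimise(mirror_descent_step, target)
x_pgd = minimise(projected_gradient_step, target)
```

Both iterates stay on the simplex by construction: the multiplicative update through renormalisation, the additive one through the projection.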

Convergence Rates of Gradient Methods for Convex Optimization in the Space of Measures

We study the convergence rate of Bregman gradient methods for convex optimization in the space of measures on a $d$-dimensional manifold. Under basic regularity assumptions, we show that the suboptimality gap at iteration $k$ is in $O(\log(k)\,k^{-1})$ for multiplicative updates, while it is in $O(k^{-q/(d+q)})$ for additive updates for some $q \in \{1, 2, 4\}$ determined by the structure of the objective function. Our flexible proof strategy, based on approximation arguments, allows to painlessly cover all Bregman Proximal Gradient Methods (PGM) and their acceleration (APGM) under various geometries such as the hyperbolic entropy and $L^p$ divergences. We also prove the tightness of our analysis with matching lower bounds and confirm the theoretical results with numerical experiments on low dimensional problems. Note that all these optimization methods must additionally pay the computational cost of discretization, which can be exponential in $d$.