Website: https://www.geoffcain.com/

Geoff Cain

In Episode #58 of Simon Says: Educate!, Geoff Cain interviews Dr. Natasha Maria Gomes. Natasha shares her journey from French instructor to open education advocate and explains how her work combines arts-based learning with STEM and social-emotional learning (SEL). #OER #STEAM #OE4BW https://open.spotify.com/episode/5Cr2yTKy7TGdqeX0FwpA7G?si=o1dX8Ev2RIeB9-seEju11w&nd=1&dlsi=d0555d1557b444e1

Dare to Be Random!

Podcast · Geoff Cain · This podcast is meant to hack the chthonic liminal mind to unleash a guaranteed increase in productivity by 10.29%. This exercise in digital surrealist archeology will transform how you look at technology and yourself. D2BR is a sandbox for AI performance art and controlled chaos that celebrates the deep aesthetics of the random!

Esteemed Colleagues,

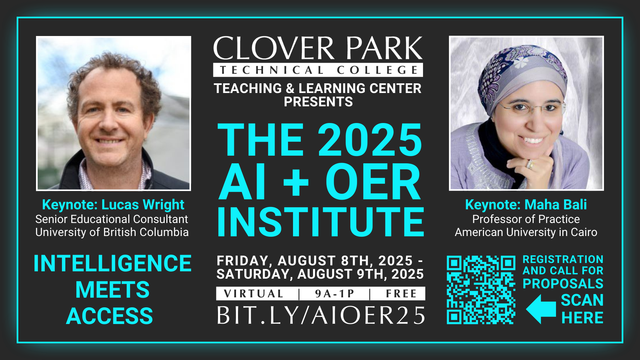

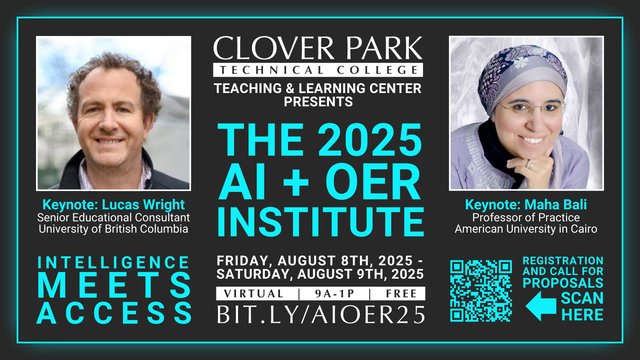

We have received many great proposals for the AI + OER Institute, but we have room for a few more, and our deadline is coming up: June 16th!

Clover Park Technical College’s Teaching & Learning Center invites you to submit a proposal for the 2025 AI + OER Institute, a free, virtual event (yes, truly open) on:

Friday, August 8th, and Saturday, August 9th, 2025.

The call-for-proposals form and event registration are here:

https://cptc.libguides.com/TLC/2025-CPTC-AI-OER-Institute

"I believe that when both educators and learners improve their critical AI literacy, they will be better able to resist using AI in ways that are harmful or inappropriate. Instead, they will feel empowered to use it constructively, while being aware of the limitations."

-Maha Bali, 2024, "Where are the crescents in AI?", LSE Higher Education: https://blogs.lse.ac.uk/highereducation/2024/02/26/where-are-the-crescents-in-ai

Share Your Expertise at Clover Park Technical College’s 2025 AI + OER Institute! #AI #OER

https://geoffcain.com/blog/clover-park-technical-colleges-2025-ai-oer-institute/

Tree of Thoughts: Deliberate Problem Solving with Large Language Models

Language models are increasingly being deployed for general problem solving across a wide range of tasks, but are still confined to token-level, left-to-right decision-making processes during inference. This means they can fall short in tasks that require exploration, strategic lookahead, or where initial decisions play a pivotal role. To surmount these challenges, we introduce a new framework for language model inference, Tree of Thoughts (ToT), which generalizes over the popular Chain of Thought approach to prompting language models, and enables exploration over coherent units of text (thoughts) that serve as intermediate steps toward problem solving. ToT allows LMs to perform deliberate decision making by considering multiple different reasoning paths and self-evaluating choices to decide the next course of action, as well as looking ahead or backtracking when necessary to make global choices. Our experiments show that ToT significantly enhances language models' problem-solving abilities on three novel tasks requiring non-trivial planning or search: Game of 24, Creative Writing, and Mini Crosswords. For instance, in Game of 24, while GPT-4 with chain-of-thought prompting only solved 4% of tasks, our method achieved a success rate of 74%. Code repo with all prompts: https://github.com/princeton-nlp/tree-of-thought-llm.