| site | https://willgallego.com |

Will Gallego


Apropos of nothing: do we have any written experience reports of what it’s *like* as an engineer to be on call for various nines of availability?

I know the math for them, but I realised I don’t know if we have a collective idea of what it FEELS like to operate at each of those levels

Anyone? 👀

I would love:

- what were your SLAs?

- how did it change how you planned your life? Performing activities?

- what was your wellbeing like?

- did the company culture help or hurt your wellbeing?

Please share! I’m very curious and it’s extremely relevant right now, I think

Small Town Titans - "You're A Mean One, Mr. Grinch" - Official Video

Had the fun of being a guest on the This is Fine! podcast last week.

Hot take - I don't think you ever *really* put an incident "on hold", not fully. Does that still have value?

Give it a listen!

youtu.be/qh0Wy3uB0yw

Hey, we're going to play some D&D!!

The party is battling The Old Man of the Forest - the Metsavana - a monstrous corpse-eating creature keeping them trapped in this small oasis within the dark woods.

Come hang out! www.twitch.tv/wcgallego/

[Incident Fest] - What Does Great Incident Response Look Like?

We want folks running better incident reviews, but you basically need to get lucky enough to be at a company where people are already doing that.

Otherwise, how else would you even observe that?

New blog post: "Big Enough to Fail".

I think some services/companies have grown so big that blame actually goes *down* during large scale outages.

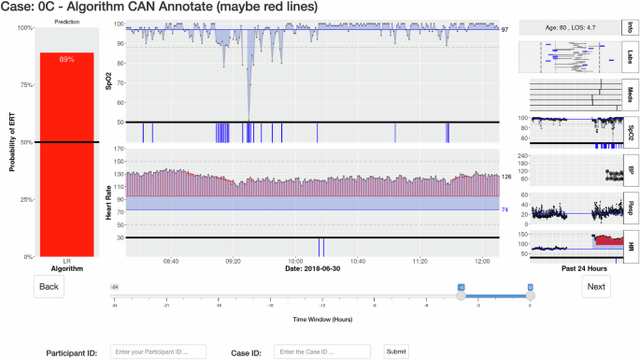

"Empirically derived evaluation requirements for responsible deployments of AI in safety-critical settings"

Empirically derived evaluation requirements for responsible deployments of AI in safety-critical settings - npj Digital Medicine

Processes to assure the safe, effective, and responsible deployment of artificial intelligence (AI) in safety-critical settings are urgently needed. Here we show a procedure to empirically evaluate the impacts of AI augmentation as a basis for responsible deployment. We evaluated three augmentative AI technologies nurses used to recognize imminent patient emergencies, including combinations of AI recommendations and explanations. The evaluation involved 450 nursing students and 12 licensed nurses assessing 10 historical patient cases. With each technology, nurses’ performance was both improved and degraded when the AI algorithm was most correct and misleading, respectively. Our findings caution that AI capabilities alone do not guarantee a safe and effective joint human-AI system. We propose two minimum requirements for evaluating AI in safety-critical settings: (1) empirically measure the performance of people and AI together and (2) examine a range of challenging cases which produce a range of strong, mediocre, and poor AI performance.

Hey, in ~1 hour at 8:30pm ET / 5:30pm PT, we're going to play some D&D! With @crayzeigh @renice @alephnaught2tog and @reinh

They've got some undead bounty to hunt down in the Deep Wood. But what's powering these supernatural monstrosities..? Tune in!

twitch.tv/wcgallego