- GitHub: https://github.com/gabe-sky
- @gabe_sky
- Homepage: https://www.gabe-sky.com/

Gabe Schuyler

- 379 Followers

- 234 Following

- 389 Posts

Language and AI by day, relentless tinkering and whimsy by night. I fix things.

Spellcheckers? Who thought it was a good idea that you have to type in a word to find out how to spell it?

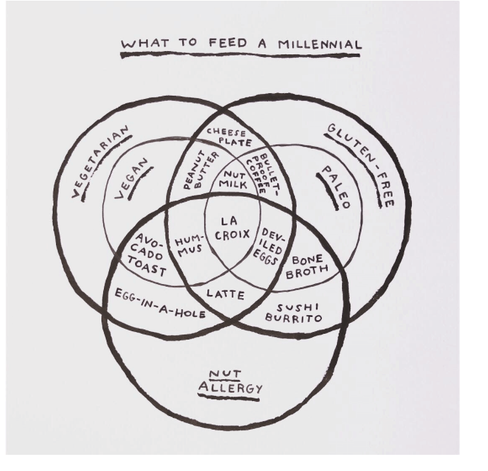

What to feed a millennial

paint drop loop

RE: https://mastodon.social/@404mediaco/116245824523367005

Team "RNA World" scores another point. What if the stuff is everywhere?

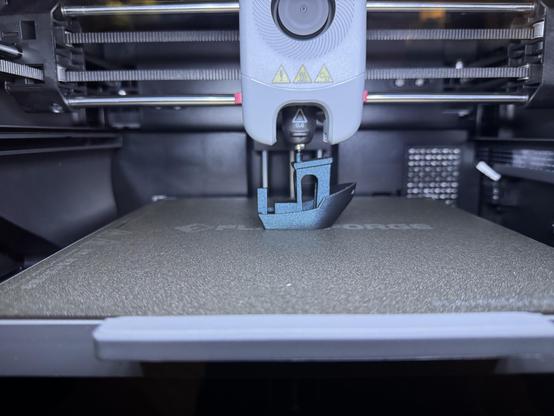

Trying out a Flashforge Adventurer 5M Pro 3-D printer, and wow, have things gotten speedier since I was last in the space. (And more affordable.)

Remember fractals? I thought they might inspire me to do something abstract on the letterpress tomorrow. But then making an interactive fractal explorer became today's project. Tossed in the digital junk drawer here: https://someawesome.name/fractal/

I just beat the Guinness world record for speed-picking by 4 seconds!

Single-pin picking 8 differently keyed, 4-pin, standard¹ padlocks in 56 seconds.

And I did it while wearing a fluffy bear suit.

¹ The current record holder used laminated Master Lock padlocks with no security pins, but I didn't want all the comments on my video to be "Master Lock sucks" jokes, so I used Brinks instead.

New month, new Tiny Horoscopes #zine to meticulously fold copies of. Folks seem to like them, so I'll probably keep making them for a while. Also posted to the companion site: https://www.tinyhoroscopes.com/

This behavior has rather a "galactic panspermia" vibe.

So, how about that weather?