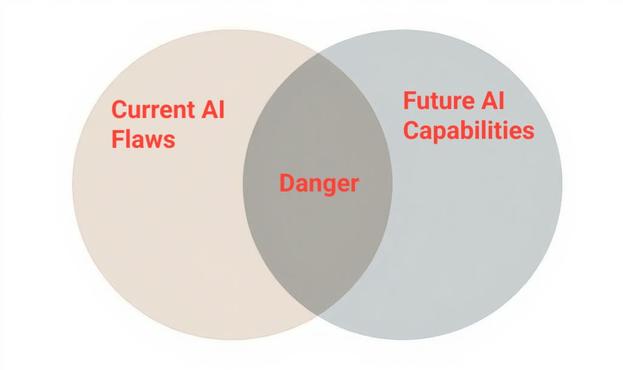

🤖 Could your AI tool suddenly "wake up" and turn against you? According to the Distinct Independent Architecture Hypothesis, the answer is a firm no.

This hypothesis proposes that AI systems fall into two fundamentally separate "lanes," each with a distinct architecture that prevents a system from switching from one lane to the other.