@devbirgit

So AI needs to derive insightful information from the database without harming the human rights mentioned.

It needs to be explainable and understandable, with all the conformity assessment properties mentioned.

And with human oversight.

A decision is still a decision

- if you get a single suggestion

and

- if you get a ranking, where one candidate is ranked first, and so on.

So, what does this mean for recruiters and for those building AI for recruiters?

A rule of thumb is to follow the principle: "Machines shouldn't decide for humans." Especially in high-risk situations.
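
To make that principle concrete, here is a minimal sketch of a human-in-the-loop gate. It assumes the model only produces advisory scores; the names `Suggestion`, `Decision`, and `decide` are hypothetical and not taken from any real recruiting tool. The point is just that a ranking never becomes a decision until a named human signs it off.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Suggestion:
    candidate_id: str
    score: float  # model output, advisory only


@dataclass
class Decision:
    candidate_id: str
    accepted: bool
    decided_by: str  # the human reviewer who is accountable
    reason: str


def decide(suggestion: Suggestion, reviewer: Optional[str],
           accepted: Optional[bool], reason: str = "") -> Decision:
    """Turn a suggestion into a decision only if a human made the call."""
    if not reviewer or accepted is None:
        raise ValueError("no human decision recorded; a score alone is not a decision")
    return Decision(suggestion.candidate_id, accepted, reviewer, reason)


# The ranking is input for the recruiter, not the outcome.
suggestions = [Suggestion("c1", 0.62), Suggestion("c2", 0.91)]
ranked = sorted(suggestions, key=lambda s: s.score, reverse=True)
decision = decide(ranked[0], reviewer="recruiter-42", accepted=True,
                  reason="strong interview")
```

Storing `decided_by` and `reason` next to the outcome also helps with the documentation and traceability side, since every decision can be traced back to a person.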

Take this example from the European Court of Justice:

https://www.deutschlandfunk.de/schufa-score-eugh-urteil-100.html

where SCHUFA, the scoring company, was sued over automated decision-making.

Fun fact: social scoring sits on the "Unacceptable risk" level, and AI systems on this level are banned.

This is the source of the quote:

https://ec.europa.eu/commission/presscorner/detail/en/qanda_21_1683

And it basically says you cannot do AI without humans.

A second quote to note:

"Before placing a high-risk AI system on the EU market or otherwise putting it into service, providers must subject it to a conformity assessment. This will allow them to demonstrate that their system complies with the mandatory requirements for trustworthy AI (e.g. data quality, documentation and traceability, transparency, human oversight, accuracy, cybersecurity and robustness). This assessment has to be repeated if the system or its purpose are substantially modified"

end quote

https://en.wikipedia.org/wiki/Artificial_Intelligence_Act

And the EU AI Act categorizes #recruitment as "high-risk".

"AI applications that are expected to pose significant threats to health, safety, or the fundamental rights of persons. Notably, AI systems used in health, education, recruitment, critical infrastructure management, law enforcement or justice. They are subject to quality, transparency, human oversight and safety obligations, and in some cases require a

...

And so the EU has put two significant laws in place that recruiters, and companies targeting recruiters as customers, need to follow.

I hope that by now many citizens know what GDPR is. Actually, I can't trust you on this either: I have seen some digital services that simply aren't compliant, even though they claim to be.

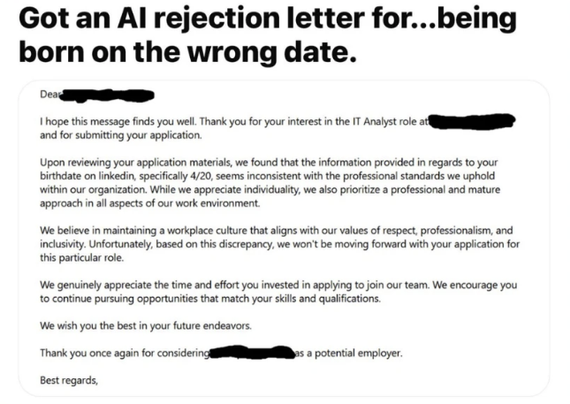

We train our bot to create standard rejections, so we can also automate that part. Also, we don't clean the data or remove personal data, neither from training nor from actual execution.

Meaning the bot knows which address, date of birth, or gender has a higher chance of unprofessionalism.
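
For contrast, here is a minimal sketch of the opposite approach: stripping personal fields before a record reaches training or execution. It assumes candidate records are plain dicts; the field names in `PERSONAL_FIELDS` and the helper `strip_personal_data` are hypothetical and would need to match the real schema.

```python
# Hypothetical field names; adjust to whatever the real candidate schema uses.
PERSONAL_FIELDS = {"name", "address", "date_of_birth", "gender"}


def strip_personal_data(record: dict) -> dict:
    """Return a copy of the record without the personal fields listed above."""
    return {key: value for key, value in record.items()
            if key not in PERSONAL_FIELDS}


candidate = {
    "name": "Jane Doe",
    "address": "Example Street 1",
    "date_of_birth": "1990-01-01",
    "gender": "f",
    "skills": ["python", "sql"],
    "years_experience": 5,
}

# Apply the same cleaning step for training data and for live requests.
cleaned = strip_personal_data(candidate)
print(cleaned)
```

Dropping fields like this is only a first step. Personal data can still hide in free text such as a CV or cover letter, so it does not make a system compliant on its own.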