On responsiveness and quality interactions, in #WebXR and beyond:

FWIW I'm not a game developer, but I still program VR prototypes professionally. I do that on the Web not just because I know it, but because of its potential, when done right, for responsiveness and accessibility.

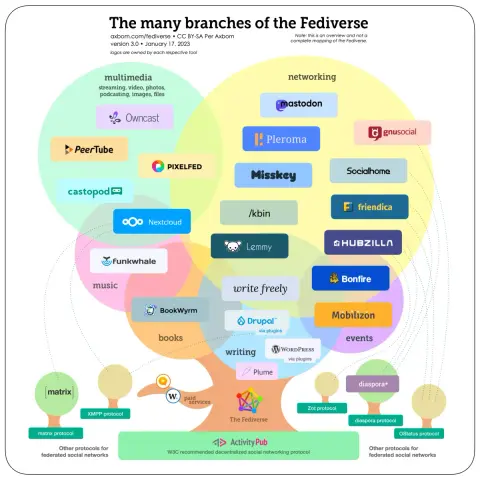

Using WebXR, one makes a single Web page that works on a VR headset, including standalone ones like the Quest, but also on a mobile phone (using the IMU, or not) or a desktop. The very same Web page. That can also include networking and federation. So... yes, indeed, I believe that if someone wants to build something social, something to use with others, it should go beyond "just" VR.
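
To make the "single page, many devices" idea concrete, here is a minimal sketch of how one page might decide which interaction mode to offer. The names `Capabilities`, `InteractionMode`, `pickMode` and `detectCapabilities` are hypothetical, made up for illustration. Only `navigator.xr.isSessionSupported("immersive-vr")` and `navigator.maxTouchPoints` are actual browser APIs. It also assumes hand tracking is only known once a session has been granted, so detection defaults it to `false`.

```typescript
// Hypothetical types and names, for illustration only.
type InteractionMode = "vr-hands" | "vr-controllers" | "mobile-touch" | "desktop-pointer";

interface Capabilities {
  immersiveVR: boolean;  // from navigator.xr.isSessionSupported("immersive-vr")
  handTracking: boolean; // assumption: only known after a session is granted
  touch: boolean;        // from navigator.maxTouchPoints > 0
}

// Pure decision function: same page, different mode per device.
function pickMode(c: Capabilities): InteractionMode {
  if (c.immersiveVR) return c.handTracking ? "vr-hands" : "vr-controllers";
  return c.touch ? "mobile-touch" : "desktop-pointer";
}

// Best-effort detection; falls back gracefully when WebXR is absent.
async function detectCapabilities(): Promise<Capabilities> {
  const nav: any = (globalThis as any).navigator;
  let immersiveVR = false;
  try {
    if (nav?.xr) immersiveVR = await nav.xr.isSessionSupported("immersive-vr");
  } catch {
    immersiveVR = false; // e.g. blocked by a permissions policy
  }
  return { immersiveVR, handTracking: false, touch: (nav?.maxTouchPoints ?? 0) > 0 };
}
```

Keeping the decision in a pure function separate from detection makes it easy to test without a headset. Note that some phones also report immersive VR support, so a real page would likely let the user choose rather than rely on detection alone.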

That being said, responsiveness and accessibility must not come at the cost of "flattening" the experience. Just because an experience can also run on an entry-level mobile phone does not mean roomscale and hand tracking must disappear. Rather, one must rethink quality interactions both per device and across devices. What does it mean to manipulate a thing, e.g. a 3D model or even text and code snippets, while moving around with one's hands (in VR) versus staying put with just 2 thumbs (on mobile)? That is the harder part: finding ways to both compete and collaborate while leveraging the best interactions across totally different devices.
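
One possible way to think about "the same manipulation, different devices" is to map each device's native input onto a shared intent, without pretending the inputs are equivalent. The sketch below is only an illustration. `ManipulateInput`, `toTranslation` and the `metresPerPixel` scale are hypothetical names and values, not part of WebXR or any library.

```typescript
// Hypothetical shared "move this thing" intent, fed by very different inputs.
type Vec3 = [number, number, number];

type ManipulateInput =
  | { kind: "hand-pinch"; from: Vec3; to: Vec3 }    // pinch positions in metres (VR)
  | { kind: "thumb-drag"; dx: number; dy: number }; // drag delta in pixels (mobile)

// Convert either input into a translation in metres.
function toTranslation(input: ManipulateInput, metresPerPixel = 0.001): Vec3 {
  switch (input.kind) {
    case "hand-pinch":
      // Full 3D motion: the hand moved, so the object follows it 1:1.
      return [
        input.to[0] - input.from[0],
        input.to[1] - input.from[1],
        input.to[2] - input.from[2],
      ];
    case "thumb-drag":
      // 2D motion only: screen y grows downward, so flip it; depth stays 0.
      return [input.dx * metresPerPixel, -input.dy * metresPerPixel, 0];
  }
}
```

The VR branch keeps depth while the mobile branch cannot, which is exactly the asymmetry to design around rather than flatten away: a networked scene could accept both, and each participant still uses what their device does best.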

From https://old.reddit.com/r/virtualreality/comments/zpe3ls/vr_to_flatscreen_crossplay_is_necessary_for_vr_to/