| Blog | http://www.praetorianprefect.com |
| Twitter | http://www.twitter.com/danielkennedy74 |
| LinkedIn | https://www.linkedin.com/in/danieltkennedy/ |
| Publicly available research | https://blog.451alliance.com/author/dkennedy/ |

Dan Kennedy


Security for AI is creating an expertise paradox

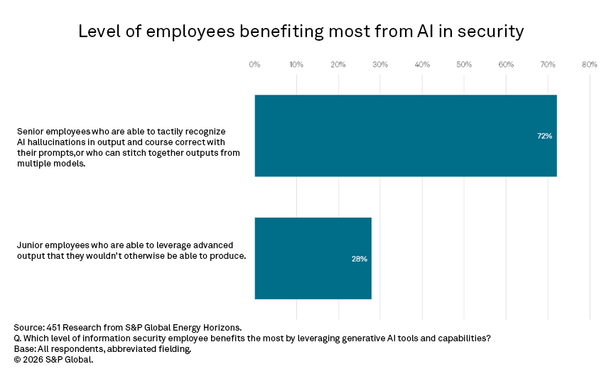

Three years ago, early generative AI integrations in security operations platforms primarily took the form of chat interfaces within their tooling ecosystem. These interfaces enabled natural language queries, incident summarization and the potential automation of routine investigative tasks. Vendors framed early use cases around the ability to uplevel junior or Tier 1 analysts in security operations centers (SOCs). Several years into broader GenAI and agentic integrations, that upskilling narrative appears to have been displaced. Security leaders now report that the primary beneficiaries of AI-assisted workflows are senior analysts rather than junior staff. About 72% of respondents to this study note that senior professionals, who recognize hallucinations in output and can course-correct in prompts, benefit most from AI integrations. Only 28% believe junior employees derive the primary benefit, generating output with AI they wouldn’t otherwise be able to produce. The implications of this are profound in security and beyond. AI may compress the labor hierarchy by automating the tasks that once trained future experts.

Human intervention in AI technology continues to be necessary for optimal results. The results from our Organizational Behavior 2025 survey are not entirely unexpected: If humans will remain “in the loop” to check the results of AI, it will be seasoned experts, humans who have built up tacit knowledge through thousands of repetitions of the work that AI now performs, who will most readily differentiate correct from incorrect results. Moreover, they can offer course correction and evaluate the results of multiple models to determine the best fit for any task. Research also suggests that giving AI models more sophisticated prompts improves the likelihood of receiving comprehensive and correct results.

AI is already affecting the entry-level hiring market, raising several serious questions. If the lower rungs of career ladders are knocked out by AI taking over tasks that were formative learning opportunities for new employees, what will replace this knowledge-creation activity? Who will be the senior employees to provide the necessary human-in-the-loop functions if people do not have paths to gain that experience? Even major AI developers have begun examining this issue. Research released by Anthropic found that programmers who rely heavily on AI assistance perform significantly worse when later asked to explain or reason about the code produced. That suggests that as automation increases, engineers must retain the ability to detect errors and guide model output. This is a skill that will erode, or may never be built up in the first place, if uncritical over-reliance on AI output becomes the norm.

https://blog.451alliance.com/security-for-ai-is-creating-an-enterprise-paradox/

Next in Tech | Ep. 259: The RSAC Conference – Agents on The Loose.

@cigitalgem There’s research to suggest that some of AI’s best utility is as a reflection machine: the more sophisticated the prompt, the better the response. If we’re deciding on a moment to ‘freeze human learning in place’, I feel like that comes with some side effects (like every organic expert from before this period eventually getting old and dying if we don’t figure something else out).

Forced beige.

Maybe the students should do the assignments with the steps as intended…

At the airport:

Is this the end of the group 2 line?

“I don’t know, I’m group 5, I just get on whatever line.”

/returns to cell phone call

“So anyway, I got a full scholarship to the best MBA program in the country.”

---

Provides some idea of how business decisions get made…

And in 'easily predictable outcomes' news, thanks again chainsaw guy, will mop person ever be making an appearance?

DOGE employee stole Social Security data and put it on a thumb drive, report says | TechCrunch

A whistleblower is accusing a former DOGE member of stealing a large number of Americans’ personal data while he was working at the Social Security Administration, with the plan of using it at his new job.

@avirr In this case, the tech executive is the one engaging with the critical aspect of fiction.

That alone should be scary.

"Uh, you guys need to rein us in. Seriously now."

@Javvad Dayum, he's right.

Also we can't afford those fonts anymore...

@krypt3ia It drew me in. The first two episodes, I felt like I was watching someone with a GoPro at a Ren Faire. But the acting, especially the character of Aegon, really landed.

I will confess I was annoyed they strayed from the books in the finale by having Egg sneak off again. Having Maekar, after unintentionally killing his brother and acknowledging the failures in raising his other sons, agree that Aegon could be squire to a hedge knight, and all that entailed, was an important plot point. Now it's a two-buddies-on-the-run type thing, instead of a conscious decision.

Plus whenever they stray from the source material in GoT, it gets wacky (even when they have to).