| Blog | http://www.praetorianprefect.com |
| Twitter | http://www.twitter.com/danielkennedy74 |
| LinkedIn | https://www.linkedin.com/in/danieltkennedy/ |
| Publicly available research | https://blog.451alliance.com/author/dkennedy/ |

Dan Kennedy

I am thrilled to announce that my Destroyed by Breach research project finally has a proper website!

I still have a lot of gaps to fill and polishing to do, but now there's a way to report a company that has gone out of business due to a cybersecurity incident or breach without having to tag me on social media. The website has a form you can submit anonymously!

"Doing my first ever CFP, and...

...I'm going to do a few more before inflicting my ridiculous generic advice on the world."

...said no one ever.

It's interesting listening to people who historically criticized 'FUD', or using fear, uncertainty, and doubt to sell security tooling, uncritically talk about how the damage from a frontier AI model they've never used or seen used will be profound and how it is going to 'change everything'. This is all based solely on the analysis of the vendor selling that AI model.

And also you can't see it, because it's 'too dangerous'. Only a carefully curated set of brand name partners who financially benefit from being part of this story can, for 'preparation' (with no details on what that means).

I'm not saying what will or won't happen. But isn't the brand supposed to be skepticism?

AI’s impact in security and its application are not always aligned -

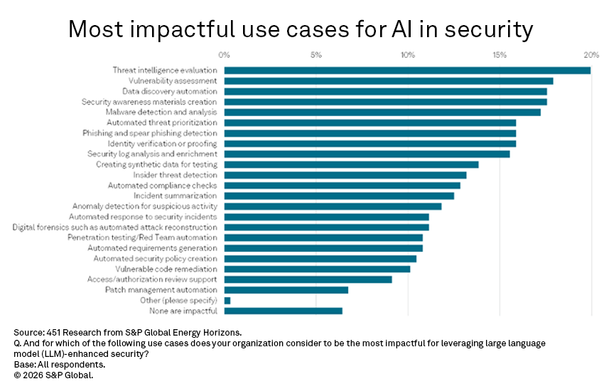

AI for security, or using large language model capabilities to improve security tooling, is permeating several organizations’ security product categories, a recurring theme since generative AI copilots emerged in 2023. When asked where teams are using LLM-enhanced security in their tooling and processes, the top responses are for malware detection and analysis (as in extended or endpoint detection and response), automated response to security incidents (such as a SecOps platform leveraging agents to automate common tasks), automated compliance checks and vulnerability assessment.

When the results are compared to a companion question asking where LLM-enhanced organizational security is having the greatest impact, the only commonality in the top four is vulnerability assessment, ranked second. Leveraging AI here may be prescient, as frontier AI models are showing aptitude for identifying security vulnerabilities. For example, Anthropic has initiated Project Glasswing in partnership with major security and technology providers, over concerns about the unreleased Claude Mythos model’s potential to identify exploitable weaknesses. Threat intelligence evaluation is number one, data discovery rounds out the top three, and creation of security awareness materials is fourth. Since OpenAI released ChatGPT in 2022, GenAI interfaces have been used for wordsmithing and graphics creation, key parts of creating educational materials. The commonality among the other three categories is more direct: They represent data analysis challenges, where AI holds promise in delivering context from massive data volumes.

The delta between implementation and impact raises a key question relevant to long-term security compliance issues like data discovery, which is characterized by a difficult and somewhat manual challenge: cataloging, permissioning access and protecting sensitive information. If impact lies in the messiness of data asset classification, why aren’t emerging AI capabilities more widely leveraged there? Is AI being applied to the most straightforward use cases, the most marketable ones, or the most intractable issues in organizational information security?

https://blog.451alliance.com/ais-impact-in-security-and-its-application-are-not-always-aligned/

This was the promise 25 years ago. Why do I need a half dozen streaming services now?

Qwest commercial 1999 - Every Movie

For the love of all that is holy, please, before releasing your new acronym on the world, do a quick Internet search.

The preceding message is mostly meant for a certain kind of analyst.

"It's going to get much worse. Just look at generated code, right? I mean, pull requests are getting bigger. The vulnerability mix is changing. It's not going down. How do we deal with that? How do we let people safely generate code from prompts?" said Daniel Kennedy, principal research analyst at 451 Research, part of S&P Global Market Intelligence.

As a solution, he offered a brake metaphor. "A lot of people think brakes are for stopping cars. Brakes allow you to operate faster, and so this entire AI governance field that's developing is going to allow us to safely operate AI in all its forms and draw the benefits from it, and that's really what the entire show floor is about," he said.

While I’ve been in the trenches for a few decades now, my feed isn’t usually one for management advice.

But here’s one:

Don’t be the “let’s take this offline” person when something is getting resolved in real time with a little passion, or just because you don’t like difficult questions. The ball must move forward.

It’s wildly unimpressive. It’s really bad if everyone then ignores you.

If you want to schedule something in a smaller, focused group, say that, in a specific way, with timing.