A few notes about the massive hype surrounding Claude Mythos:

The old hype strategy of 'we made a thing and it's too dangerous to release' has been done since GPT-2. Anyone who still falls for it should not be trusted to have sensible opinions on any subject.

Even their public (cherry-picked to look impressive) numbers for the cost per vulnerability are high. The problem with static analysis of any kind is that the false-positive rates are high. Dynamic analysis can be sound but not complete (everything it reports is real, but it misses whatever it never executes); static analysis can be complete but not sound (it can cover every path, but it reports things that are not bugs). That's the tradeoff. Coverity is free for open source projects and finds large numbers of things that might be bugs, including a lot that really are. Very few projects have the resources to triage all of these. If the money spent on Mythos had been invested in triaging the reports from existing tools, it would have done a lot more good for the ecosystem.

I recently received a 'comprehensive code audit' on one of my projects from an Anthropic user. Of the top ten bugs it reported, only one was important to fix (and should have been caught in code review, but was 15-year-old code from back when I was the only contributor and so there was no code review). Of the rest, a small number were technically bugs but were almost impossible to trigger (even deliberately). Half were false positives, and two were not bugs but came with proposed 'fixes' that would have introduced performance regressions on performance-critical paths. But all of them looked plausible. And, unless you understood very well the environment in which the code runs and the things for which it's optimised, I can well imagine you'd just deploy those 'fixes' and wonder why performance was worse. Possibly Mythos is orders of magnitude better, but I doubt it.

This mirrors what we've seen with the public Mythos disclosures. One, for example, was complaining about a missing bounds check, yet every caller of the function did the bounds check and so introducing it just cost performance and didn't fix a bug. And, once again, remember that this is from the cherry-picked list that Anthropic chose to make their tool look good.
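To make the shape of that report concrete, here is a minimal sketch of the pattern. It is not the function from the disclosure: `sum_window` and `_sample_at` are hypothetical names, and Python stands in for the C-like code where this matters (Python checks list bounds itself, so read the helper as if it were a raw array access).

```python
def _sample_at(samples: list, i: int) -> int:
    # Precondition, enforced by every caller: 0 <= i < len(samples).
    # A tool looking at this function alone sees a "missing bounds check".
    return samples[i]


def sum_window(samples: list, start: int, length: int) -> int:
    # The caller validates the whole range once, outside the hot loop.
    if start < 0 or length < 0 or start + length > len(samples):
        raise ValueError("window out of range")
    total = 0
    for i in range(start, start + length):
        # Hot path: re-checking here would repeat the same test `length`
        # times without rejecting anything the check above lets through.
        total += _sample_at(samples, i)
    return total
```

Adding the 'missing' check inside `_sample_at` would be harmless for correctness, but it only costs time on the hot path, because no caller can reach it with an out-of-range index.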

I don't doubt that LLMs can find some bugs other tools don't find, but that isn't new in the industry. Coverity, when it launched, found a lot of bugs nothing else found. When fuzzing became cheap and easy, it found a load of bugs. Valgrind and AddressSanitizer both caused spikes in bug discovery when they were released and deployed for the first time.

The one area where Mythos is better than existing static analysers is that it can (if you burn enough money) generate test cases that trigger the bug. This is possible, and cheaper, with guided fuzzing, but no one does it because spending even 10% of what Mythos would cost is too expensive for most projects.
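For anyone who hasn't seen how guided fuzzing produces a triggering input, here is a toy sketch. It is not how AFL or libFuzzer are implemented: `target`, `fuzz`, and the hand-written `hits` instrumentation are all made up for illustration, and real fuzzers get their coverage feedback from compiler instrumentation instead.

```python
import random
from typing import Optional


def target(data: bytes, hits: set) -> None:
    """Toy parser with a bug three branches deep; `hits` records branches taken."""
    if len(data) < 3:
        return
    if data[0] == ord("B"):
        hits.add("B")
        if data[1] == ord("U"):
            hits.add("BU")
            if data[2] == ord("G"):
                raise ValueError("bug reached")


def fuzz(seed_input: bytes, max_iters: int, rng: random.Random) -> Optional[bytes]:
    """Mutate one byte at a time, keeping any input that reaches a new branch."""
    corpus = [seed_input]
    seen = set()
    for _ in range(max_iters):
        child = bytearray(rng.choice(corpus))
        child[rng.randrange(len(child))] = rng.randrange(256)
        candidate = bytes(child)
        hits = set()
        try:
            target(candidate, hits)
        except ValueError:
            return candidate  # a concrete test case that triggers the bug
        if hits - seen:
            # New coverage: keep this input as a starting point for later mutations.
            seen |= hits
            corpus.append(candidate)
    return None
```

Calling `fuzz(b"\x00\x00\x00", 200_000, random.Random(0))` is expected to come back with `b"BUG"` after a few thousand iterations on average, because each kept input moves the search one branch deeper. Guessing all three bytes blindly would need about 256^3 (roughly 16.7 million) attempts on average.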

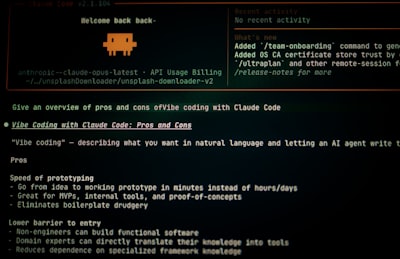

The source code for Claude Code was leaked a couple of weeks ago. It is staggeringly bad. I have never seen such low-quality code in production before. It contained things I'd have failed a first-year undergrad for writing. And, apparently, most of it was written with Claude Code itself.

But the most relevant part is that it contained three critical command-injection vulnerabilities.
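For context on how mechanical this class of bug is to spot, here is a minimal sketch of a checker. It has nothing to do with the leaked code, which is not reproduced here, and it is not any real tool: `find_shell_injection_candidates` and `SAMPLE` are hypothetical, and the rule (flag any call that passes `shell=True` with a command that isn't a string literal) is deliberately crude.

```python
import ast

# Hypothetical code under analysis; it is only parsed, never executed.
SAMPLE = '''\
import subprocess

def search(pattern):
    subprocess.run(f"grep {pattern} notes.txt", shell=True)

def safe_search(pattern):
    subprocess.run(["grep", "--", pattern, "notes.txt"])

def fixed_command():
    subprocess.run("ls -l", shell=True)
'''


def find_shell_injection_candidates(source: str) -> list:
    """Return sorted line numbers of calls using shell=True with a non-literal command."""
    findings = []
    for node in ast.walk(ast.parse(source)):
        if not isinstance(node, ast.Call):
            continue
        uses_shell = any(
            kw.arg == "shell"
            and isinstance(kw.value, ast.Constant)
            and kw.value.value is True
            for kw in node.keywords
        )
        # An f-string or a concatenation is not an ast.Constant, so it gets flagged.
        if uses_shell and node.args and not isinstance(node.args[0], ast.Constant):
            findings.append(node.lineno)
    return sorted(findings)
```

On `SAMPLE` this flags only line 4, the call that interpolates `pattern` into a shell string. The argument-list version and the fixed literal command are left alone.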

These are the kinds of things that static analysis should be catching. And, apparently, at least one of the following is true:

- Mythos didn't catch them.

- Mythos doesn't work well enough for Anthropic to bother using it on their own code.

- Mythos did catch them but the false-positive rate is so high that no one was able to find the important bugs in the flood of useless ones.

TL;DR: If you're willing to spend half as much money as Mythos costs to operate, you can probably do a lot better with existing tools.