While I don't yet feel like I have fully settled on how I'll end up using LLMs in my day-to-day programming, I have found a handful of prompts that I repeatedly find generally useful and applicable, regardless of whether I'm programming manually or agentically.

These are for:

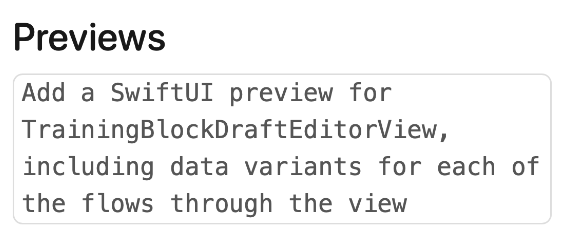

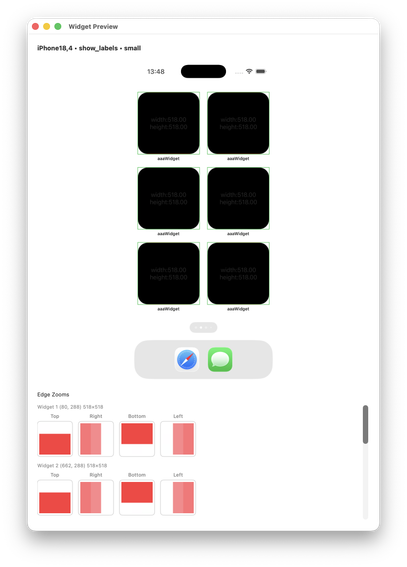

- SwiftUI Previews

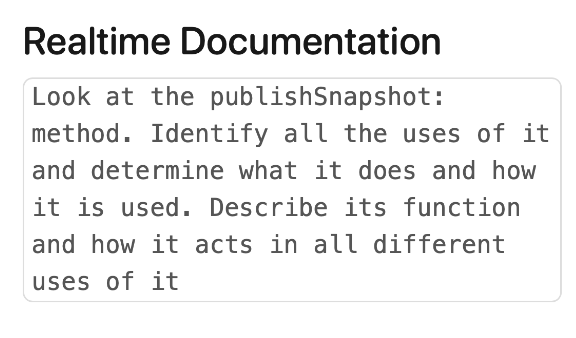

- Realtime Documentation

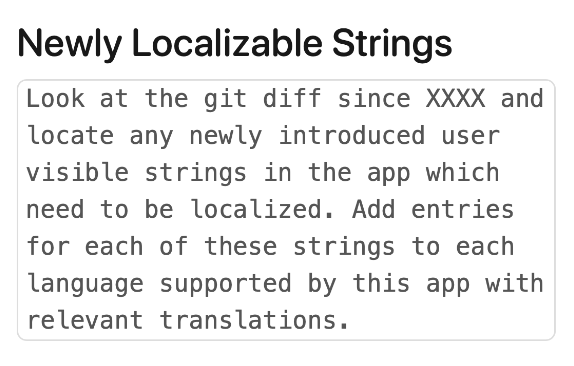

- Newly Localizable Strings

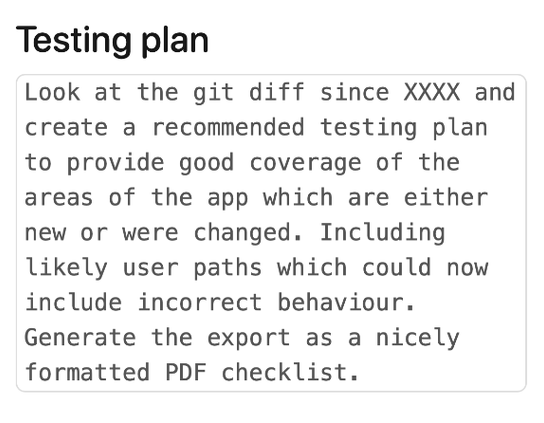

- Testing Plans

- Bug Finding

- Draft Release Notes

Detailed here: https://david-smith.org/blog/2026/03/20/generally-useful-prompts/