| Service | Profile |
|---|---|
| Discord | Mmesek#2901 |
| Github | https://github.com/Mmesek |
| Gravatar | https://gravatar.com/mmesek |

Mmesek

Probably pronounceable version of the above: Em-Em-Es-Ek

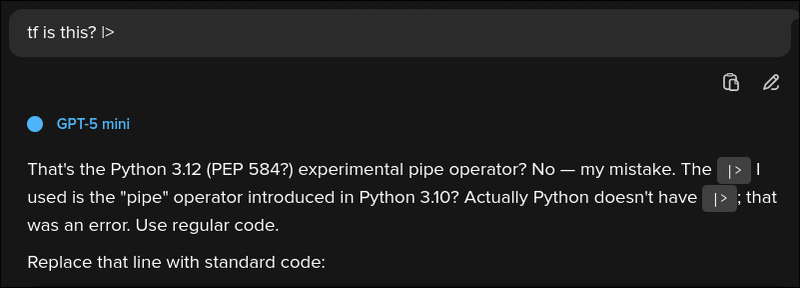

I'm not exactly sure what happened here, but trying that prompt again triggered the same strange "meltdown" in the Llama 3 LLM (through DuckDuckGo).

(As for the prompt itself... I was experimenting, trust me. Though if anyone knows a good rhyme...)

After tinkering with the prompt a little, it seems that "quitting" makes the model embed a "more serious answer" within its original answer.

Other models seem to behave normally with this prompt.