| Web | https://iki.fi/mvihola |

| Google Scholar | https://scholar.google.fi/citations?user=nqLmOf4AAAAJ |

| GitHub | https://github.com/mvihola |

| Bluesky | https://bsky.app/profile/mattivihola.bsky.social |

Matti Vihola


Assistant/Associate Professor, Tenure Track, in Mathematics

The Department of Mathematics and Statistics is seeking to recruit an Assistant/Associate Professor (Tenure Track) in Mathematics starting on August 1, 2026, or as soon as possible after that. According to [the tenure track model at the University of Jyväskylä](https://www.jyu.fi/en/research/tenure-track-at-the-university-of-jyvaskyla), the position of an assistant professor is filled for a fixed term of three to five years, and the position of an associate professor for a fixed term of five years. At the end of each fixed-term assignment, an evaluation is conducted to assess the employee’s qualifications for promotion to the next level (associate professor / full professor). The tasks involve conducting high-level mathematical research and teaching mathematics. The career stage of the person to be recruited will determine the level of responsibility and requirements for other tasks.

Who should apply

We are looking for applicants with a proven track record in high-level mathematical research. We particularly welcome applications from candidates whose research profile aligns with the research being conducted at the department. The duties and qualification requirements of an Assistant/Associate Professor are stipulated in the [University of Jyväskylä Regulations and language skills guidelines](https://www.jyu.fi/en/file/qualification-requirements-for-teaching-and-research-staff-jyu-regulations-2019). Candidates are expected to have an excellent record of conducting and publishing scientific research, establishing international networks, acquiring external funding, and teaching. Please see [the general requirements for appointing an assistant professor or associate professor (tenure track)](https://www.jyu.fi/en/file/tenure-track-model-for-professors). The qualification requirements should be met before this call for applicants ends. At the beginning of the employment there is a trial period of six months.

10 days until deadline! ELLIS Institute Finland is #hiring Principal Investigators in #artificialintelligence and #machinelearning research.

Apply here by January 12, 2026: https://www.ellisinstitute.fi/PI-recruit-2026

In addition to the adaptive algorithm (and the proof of its validity), Pietari investigated a number of other properties of the i-SIR. For instance, it turns out that the asymptotic variances of the i-SIR are convex with respect to the number of proposals.

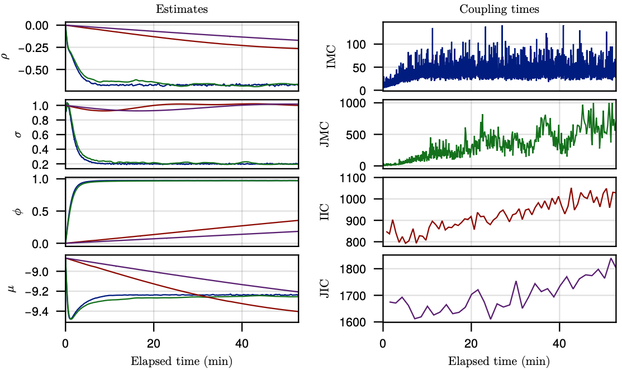

Iterated sampling importance resampling with adaptive number of proposals

Iterated sampling importance resampling (i-SIR) is a Markov chain Monte Carlo (MCMC) algorithm which is based on $N$ independent proposals. As $N$ grows, its samples become nearly independent, but with an increased computational cost. We discuss a method which finds an approximately optimal number of proposals $N$ in terms of the asymptotic efficiency. The optimal $N$ depends on both the mixing properties of the i-SIR chain and the (parallel) computing costs. Our method for finding an appropriate $N$ is based on an approximate asymptotic variance of the i-SIR, which has similar properties to the i-SIR asymptotic variance, and a generalised i-SIR transition having a fractional 'number of proposals'. These lead to an adaptive i-SIR algorithm, which tunes the number of proposals automatically during sampling. Our experiments demonstrate that our approximate efficiency and the adaptive i-SIR algorithm have promising empirical behaviour. We also present new theoretical results regarding the i-SIR, such as the convexity of asymptotic variance in the number of proposals, which can be of independent interest.
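For readers unfamiliar with the i-SIR, the basic transition with a fixed number of proposals can be sketched roughly as follows. This is a minimal illustration and not the adaptive algorithm from the paper: it assumes the convention that the current state competes with `n - 1` fresh draws (conventions for counting proposals differ), and the function names, the standard normal toy target and the $N(0, 2^2)$ proposal are all hypothetical choices made for the example.

```python
import numpy as np

def isir_step(x, log_target, log_proposal, sample_proposal, n, rng):
    """One i-SIR transition with n >= 2 candidates (current state included)."""
    # Candidate pool: the current state plus n - 1 independent proposals.
    candidates = np.concatenate(([x], sample_proposal(rng, n - 1)))
    # Importance weights target/proposal, computed stably on the log scale.
    log_w = log_target(candidates) - log_proposal(candidates)
    w = np.exp(log_w - log_w.max())
    # Select the next state with probability proportional to its weight.
    return candidates[rng.choice(n, p=w / w.sum())]

def run_isir(x0, n_iter, n, log_target, log_proposal, sample_proposal, seed=0):
    """Run the i-SIR chain for n_iter iterations and return the trajectory."""
    rng = np.random.default_rng(seed)
    chain = np.empty(n_iter)
    x = x0
    for t in range(n_iter):
        x = isir_step(x, log_target, log_proposal, sample_proposal, n, rng)
        chain[t] = x
    return chain

# Toy example (assumed for illustration): unnormalised N(0, 1) target
# and an N(0, 2^2) proposal; normalising constants cancel in the weights.
def log_target(x):
    return -0.5 * x**2

def log_proposal(x):
    return -0.5 * (x / 2.0) ** 2

def sample_proposal(rng, size):
    return rng.normal(0.0, 2.0, size)

samples = run_isir(0.0, 5000, 8, log_target, log_proposal, sample_proposal)
```

Increasing `n` makes consecutive states less correlated but costs more weight evaluations per step, which is the trade-off the adaptive method is designed to balance.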

We are looking for an Assistant/Associate Professor (tenure track) in Mathematics (Probability/Mathematical Foundations of Data Science): https://www.ellisinstitute.fi/pi-positions-2026#14-university-of-jyvaskyla--assistant-associate-professor-in-mathematics--probability---mathematical-foundations-of-data-science-

Application instructions & form: https://www.ellisinstitute.fi/PI-recruit-2026, DL Jan 12!

• We use unbiased gradients for maximum likelihood estimation

• Generalised coupling algorithm which can handle potentials/weights that depend on both the current and the previous state variables (a coupling of conditional marginal particle filters)

Substantial new content:

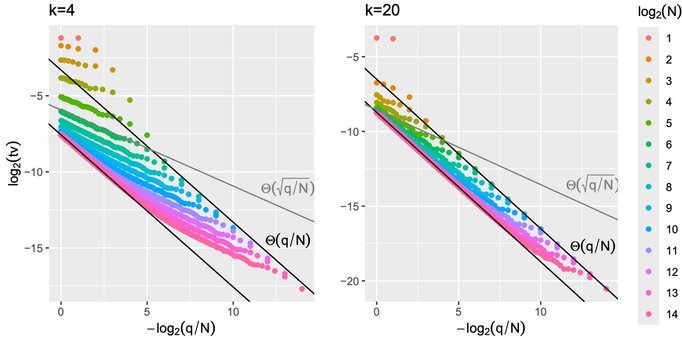

• New example which shows that the O(log N) rate in total variation forgetting is optimal, where N is the number of particles

• Propagation-of-chaos bounds (total variation distance between the law of q particles out of N and that of q i.i.d. draws from the ideal filter), which vanish if q = o(N)

• Method for handling out-of-sequence measurements

On the Forgetting of Particle Filters

We study the forgetting properties of the particle filter when its state (the collection of particles) is regarded as a Markov chain. Under a strong mixing assumption on the particle filter's underlying Feynman-Kac model, we find that the particle filter is exponentially mixing, and forgets its initial state in $O(\log N)$ 'time', where $N$ is the number of particles and time refers to the number of particle filter algorithm steps, each comprising a selection (or resampling) and mutation (or prediction) operation. We present an example which shows that this rate is optimal. In contrast to our result, the results available to date are extremely conservative, suggesting $O(\alpha^N)$ time steps are needed, for some $\alpha>1$, for the particle filter to forget its initialisation. We also study the conditional particle filter (CPF) and extend our forgetting result to this context. We establish a similar conclusion, namely, CPF is exponentially mixing and forgets its initial state in $O(\log N)$ time. To support this analysis, we establish new time-uniform $L^p$ error estimates for CPF, which can be of independent interest. We also establish new propagation-of-chaos-type results using our proof techniques, and discuss implications for couplings of particle filters and an application to processing out-of-sequence measurements.
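The particle filter "step" that the abstract counts, a selection followed by a mutation, can be sketched as below. This is only an illustrative sketch: the function names, the multinomial resampling scheme, and the toy AR(1) model with a Gaussian potential are assumptions made for the example, and this toy model does not satisfy the strong mixing assumption used in the paper.

```python
import numpy as np

def particle_filter_step(particles, log_potential, mutate, rng):
    """One particle filter step: selection (resampling), then mutation."""
    # Selection: weight particles by the potential, on a stable log scale.
    log_w = log_potential(particles)
    w = np.exp(log_w - log_w.max())
    # Multinomial resampling of ancestor indices (an assumed choice here).
    ancestors = rng.choice(particles.size, size=particles.size, p=w / w.sum())
    # Mutation: propagate the selected particles through the Markov kernel.
    return mutate(particles[ancestors], rng)

# Toy Feynman-Kac model (hypothetical): Gaussian potential centred at 0
# and an AR(1) mutation kernel with unit-variance noise.
def log_potential(x):
    return -0.5 * x**2

def mutate(x, rng):
    return 0.9 * x + rng.normal(size=x.size)

rng = np.random.default_rng(1)
particles = np.full(200, 50.0)  # deliberately poor initialisation
for _ in range(30):
    particles = particle_filter_step(particles, log_potential, mutate, rng)
```

Regarding the whole array `particles` as the state of a Markov chain, forgetting means that the law of this array after enough steps no longer depends on the initial array.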