Richard Everitt

Early version on arXiv: https://arxiv.org/abs/1908.06514

Xi'an's Og: https://xianblog.wordpress.com/2019/10/24/revisiting-the-balance-heuristic/

Annealing Strategies for Variance Reduction in Balance Heuristic Estimators - Statistics and Computing

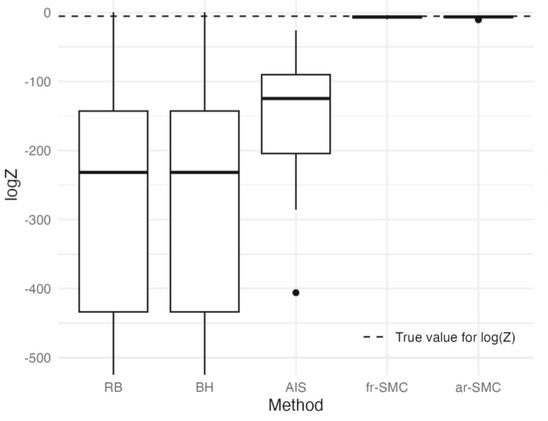

The computation of normalisation constants, or marginal likelihoods, is essential in Bayesian inference since they provide information about model adequacy and facilitate model comparison. While importance sampling offers unbiased estimates, its naive implementations often suffer from high variance in complex models. Our work makes the following contributions to multiple importance sampling methodology. First, we demonstrate that the balance heuristic estimator with stochastically selected proposals maintains statistical efficiency while reducing computational costs compared to Rao-Blackwellised alternatives. Second, we introduce a novel extended-space representation for the balance heuristic that enables the incorporation of annealing steps, which is essential for variance reduction. Third, we propose a resampling scheme tailored to this extended-space framework that mitigates the curse of dimensionality while preserving unbiasedness. We validate our framework through numerical experiments, demonstrating its efficiency and robustness in complex inference problems.
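For readers new to the balance heuristic, here is a minimal sketch of the basic estimator with stochastically selected proposals, which is the starting point of the first contribution. It is not the paper's annealed or resampling scheme. The toy bimodal target, the Gaussian proposals, and every name in the code (`balance_heuristic_estimate`, `TRUE_Z`, and so on) are assumptions made for illustration.

```python
import numpy as np


def normal_pdf(x, mean, std):
    """Density of N(mean, std^2), broadcasting over x, mean and std."""
    return np.exp(-0.5 * ((x - mean) / std) ** 2) / (std * np.sqrt(2.0 * np.pi))


def balance_heuristic_estimate(unnorm_target, means, stds, weights, n_samples, rng):
    """Estimate Z = integral of unnorm_target using Gaussian proposals.

    Each draw first picks a proposal index at random (stochastic selection),
    then samples from that proposal. Every sample is weighted by the full
    mixture density in the denominator, which is the balance heuristic.
    """
    means = np.asarray(means, dtype=float)
    stds = np.asarray(stds, dtype=float)
    weights = np.asarray(weights, dtype=float)

    # Stochastically select which proposal generates each sample.
    ks = rng.choice(len(weights), size=n_samples, p=weights)
    xs = rng.normal(means[ks], stds[ks])

    # Mixture density sum_k weights[k] * q_k(x), evaluated at every sample.
    mixture = (weights * normal_pdf(xs[:, None], means, stds)).sum(axis=1)

    # Average of target / mixture is unbiased for Z.
    return np.mean(unnorm_target(xs) / mixture)


# Hypothetical toy problem: an unnormalised two-mode target with known Z.
TRUE_Z = 3.0


def unnorm_target(x):
    modes = 0.5 * normal_pdf(x, -2.0, 0.5) + 0.5 * normal_pdf(x, 2.0, 0.5)
    return TRUE_Z * modes


rng = np.random.default_rng(0)
z_hat = balance_heuristic_estimate(
    unnorm_target,
    means=[-2.0, 2.0],
    stds=[1.0, 1.0],
    weights=[0.5, 0.5],
    n_samples=20_000,
    rng=rng,
)
print(f"estimate of Z: {z_hat:.3f} (true value {TRUE_Z})")
```

Because each sample is divided by the whole mixture rather than by the single proposal that generated it, a proposal that covers one mode poorly does not blow up the weights, as long as another proposal covers that region.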

https://go.warwick.ac.uk/env_epi