Leo Ré Jorge

@LeoRJorge

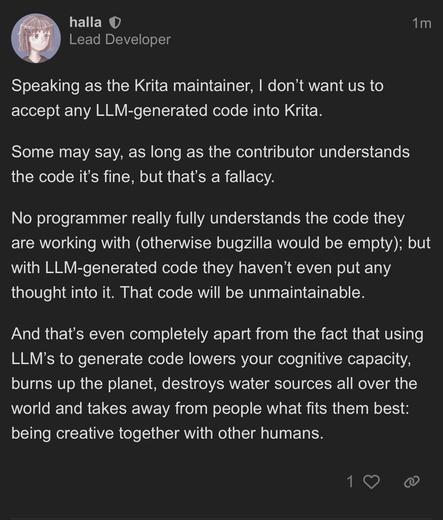

Krita’s Maintainer is awesome!

Global renewable capacity surged in 2025, with 814 GW of new solar and wind installations (more than the entire electricity capacity of the EU), the fastest expansion on record. Ember

https://ember-energy.org/latest-updates/world-adds-a-record-breaking-814-gw-of-solar-and-wind-in-2025/ #ShareGoodNewsToo

#Agrivoltaics: high returns on investment.

h/t @DoomsdaysCW

"From an operations and maintenance (O&M) perspective, the study highlights that sheep are a technically superior tool for vegetation control compared to mechanical mowing.

The solar arrays, in turn, provide a beneficial microclimate for the flock as the shade from the panels reduces heat stress and water consumption, which researchers noted can lead to improved animal welfare and weight gain."

https://www.pv-magazine.com/2026/03/26/solar-sheep-grazing-delivers-margins-up-to-40-in-new-study/

It cannot be long before Trump’s retinue of sycophants, enablers and justifiers, in government and in the media, start telling us, "I never liked the guy", "I tried to restrain him" and "I was only obeying orders."

So be sure to keep the receipts.

It occurred to me this morning that it has now been over a decade in which we have all been forced to hear something about Donald Trump every fucking day - often many times a day.

There are people in journalism who have no experience of a world in which we actually covered many different topics in a day.

I feel like I've been mentally force fed McDonalds for ten years non-stop.

I would like it to be over before I die, please.

the thought "coffee is tea" entered my head unbidden and i had to make the whole alignment chart in order to banish it

Next time anyone claims they're using LLMs for "minor cleanup" or the like, send them this (from Google no less!)

"We find that even when LLMs are prompted with expert feedback and asked to only make grammar edits, they still change the text in a way that significantly alters its semantic meaning."

How LLMs Distort Our Written Language

Large language models (LLMs) are used by over a billion people globally, most often to assist with writing. In this work, we demonstrate that LLMs not only alter the voice and tone of human writing, but also consistently alter the intended meaning. First, we conduct a human user study to understand how people actually interact with LLMs when using them for writing. Our findings reveal that extensive LLM use led to a nearly 70% increase in essays that remained neutral in answering the topic question. Significantly more heavy LLM users reported that the writing was less creative and not in their voice. Next, using a dataset of human-written essays that was collected in 2021 before the widespread release of LLMs, we study how asking an LLM to revise the essay based on the human-written feedback in the dataset induces large changes in the resulting content and meaning. We find that even when LLMs are prompted with expert feedback and asked to only make grammar edits, they still change the text in a way that significantly alters its semantic meaning. We then examine LLM-generated text in the wild, specifically focusing on the 21% of AI-generated scientific peer reviews at a recent top AI conference. We find that LLM-generated reviews place significantly less weight on clarity and significance of the research, and assign scores that, on average, are a full point higher. These findings highlight a misalignment between the perceived benefit of AI use and an implicit, consistent effect on the semantics of human writing, motivating future work on how widespread AI writing will affect our cultural and scientific institutions.

A fresh problem with #AI is what might be called Artificial Gullibility.

According to a BlueSky poster, an academic who was ruled guilty of plagiarism has waged an extensive astroturfing campaign to rewrite the record. The goal was probably to game conventional search engines, but the texts have now been ingested by Google's AI. Google's "AI Overview" presents her (apparently false) version of events, backing it with the supposed authority of Google and "AI".

1/

https://bsky.app/profile/laurenginsberg.bsky.social/post/3mhnxv2swok2g

Lauren Donovan Ginsberg (@laurenginsberg.bsky.social)

The return of ReceptioGate to the news is a useful moment to think about the role AI is having in creating truth for a lot of internet users. I posted this update - the clear plagiarism verdict against Rossi - on another platform… /1 [contains quote post or other embedded content]

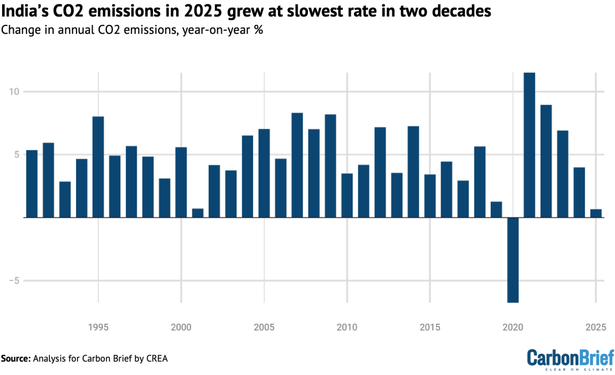

NEW ANALYSIS: India's CO2 emissions in 2025 grew at slowest rate in more than two decades

🏭power CO2 down 3.8%

☀️record clean-energy growth

🚗oil demand only up 0.4%

🏗️steel/cement up 8/10%

By CREA for CB

There's this myth that automated spam detection is hard because spammers are all very clever masters of disguise.

No. Spammers are stupid as a shoe. They have dog shit for brains.

Automated spam detection is hard because the line between spam and "legitimate" marketing activity is a fiction.