| Area | Seattle |

Knightmare


Mayor Zohran Mamdani released a city budget that completely eliminates a $12 billion deficit left over from the Adams administration, the largest gap since the Great Recession. He accomplished it by taxing the rich, working with Gov. Hochul, and firing pricey consultants—not cutting services for working New Yorkers!

https://prospect.org/2026/05/12/mamdani-announces-balanced-budget-without-cuts/

two years ago i finally lost my mind dealing with wordpress and markdown for clients.

it forced me to write an entire website construction kit in php from scratch (kiki). it forced me to learn how to write a customizable markup language (bug). all so i would never have to touch wordpress and markdown ever again.

two years later, there are now dozens of kiki instances run by sysop-admins around the world. it's incredibly gratifying to see the web made less shitty by just elbow grease and diy learning. the instance admins are a blend of writers and coders who painstakingly find bugs, dig into the source, and inspectorgadget workarounds. improving it and patching it is a lifelong commitment, even if it doesn't pay the bills.

coming this week is a feature that maybe 0.01% of users will take advantage of, but i'm so happy to add it, to keep the dream of an indie web alive: kiki will now generate RSS 2.0 compliant podcast feeds. i thought regular old web RSS was bad, but RSS for podcasts is about 10x worse 😅

the attached screenshot is a new site i'm building with kiki 1.2.0, which autogenerated a podcast feed using metadata in each post.

this morning i'm updating a client's wordpress site, and for all my moaning about how bad RSS is, i can't believe how much worse wordpress is. 😆

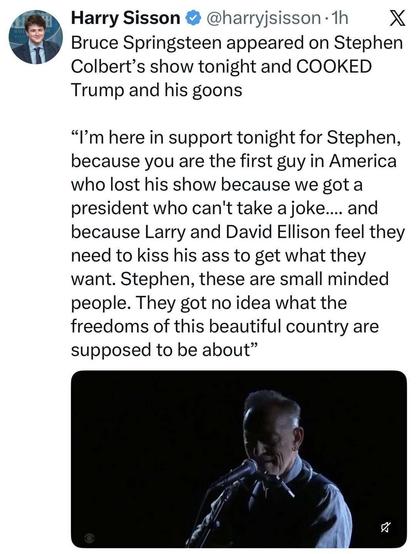

He’s still The Boss.

Link: https://xcancel.com/harryjsisson/status/2057339437781057891

(Aaron Schwartz/AFP via Getty)

🏳️🌈🖖🏽

🏳️🌈🖖🏽