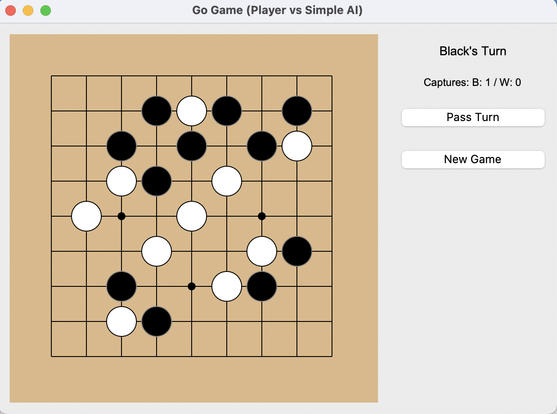

Another experiment to find out how far leading LLMs can go in creating a new app. Since Gemini had no trouble generating a full Sudoku, I went for something bigger: a game of Go.

The results were impressive. Gemini 2.5 Pro Experimental did best overall, so I focus on it here. Claude 3.7 was about as good; ChatGPT and DeepSeek were clearly worse.

Gemini

- created a fully working Go-playing app from a single simple prompt, including the ko rule, the suicide rule, and a "make random legal move" AI.

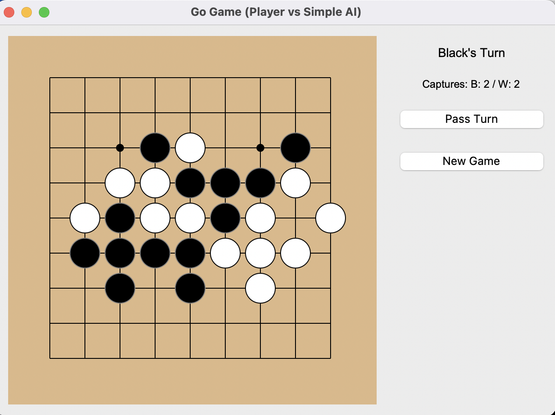

- created an AI with a beginner-level understanding of Go in four more simple prompts, showing a deep understanding of the task at hand, how to break it down, and how to turn the solution into code.
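To give an idea of what the ko rule, the suicide rule, and a "random legal move" AI involve in code, here is a minimal sketch. It is an illustration only, not the code Gemini generated: the board representation (a list of lists of characters), the function names, and the simple "no immediate repetition" ko check are all assumptions made for this example.

```python
import random

EMPTY, BLACK, WHITE = ".", "B", "W"

def neighbors(size, r, c):
    """Yield the orthogonal neighbors of (r, c) that lie on the board."""
    for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
        nr, nc = r + dr, c + dc
        if 0 <= nr < size and 0 <= nc < size:
            yield nr, nc

def group_and_liberties(board, r, c):
    """Flood-fill the group containing (r, c); return (stones, liberties)."""
    color = board[r][c]
    stones, liberties, stack = {(r, c)}, set(), [(r, c)]
    while stack:
        cr, cc = stack.pop()
        for nr, nc in neighbors(len(board), cr, cc):
            if board[nr][nc] == EMPTY:
                liberties.add((nr, nc))
            elif board[nr][nc] == color and (nr, nc) not in stones:
                stones.add((nr, nc))
                stack.append((nr, nc))
    return stones, liberties

def play(board, r, c, color, previous_board=None):
    """Return the board after the move, or None if the move is illegal."""
    if board[r][c] != EMPTY:
        return None
    new = [row[:] for row in board]
    new[r][c] = color
    opponent = WHITE if color == BLACK else BLACK
    # Capture: remove adjacent opponent groups left without liberties.
    for nr, nc in neighbors(len(new), r, c):
        if new[nr][nc] == opponent:
            stones, libs = group_and_liberties(new, nr, nc)
            if not libs:
                for sr, sc in stones:
                    new[sr][sc] = EMPTY
    # Suicide rule: the played stone's group must have a liberty after captures.
    if not group_and_liberties(new, r, c)[1]:
        return None
    # Simple ko (assumption): the move may not recreate the previous position.
    if previous_board is not None and new == previous_board:
        return None
    return new

def random_legal_move(board, color, previous_board=None, rng=random):
    """Pick a uniformly random legal move, or None if there is none (pass)."""
    size = len(board)
    moves = [(r, c) for r in range(size) for c in range(size)
             if play(board, r, c, color, previous_board) is not None]
    return rng.choice(moves) if moves else None
```

The legality check plays the move on a copy of the board first and only then tests for suicide and ko, which keeps capturing moves legal even when the played stone would otherwise have no liberties.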

The follow-up prompts I'm talking about looked like this:

"Wonderful. The AI plays great now. However, it is unable to secure areas with two eyes. How could we implement another feature that checks whether a move helps making eye shape?"

In response, it created one heuristic to penalize moves that fill eye space and another to reward moves that create eyes.
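A pair of heuristics like that could look roughly like the sketch below. This is not Gemini's actual code: the function names, the weights `fill_penalty` and `create_bonus`, and the very crude eye definition (an empty point whose orthogonal neighbors are all one's own stones) are assumptions for illustration. It ignores captures, diagonal points, and false eyes.

```python
EMPTY, BLACK, WHITE = ".", "B", "W"

def neighbors(size, r, c):
    """Yield the orthogonal neighbors of (r, c) that lie on the board."""
    for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
        nr, nc = r + dr, c + dc
        if 0 <= nr < size and 0 <= nc < size:
            yield nr, nc

def is_eye_like(board, r, c, color):
    """True if (r, c) is empty and every orthogonal neighbor is `color`."""
    if board[r][c] != EMPTY:
        return False
    return all(board[nr][nc] == color
               for nr, nc in neighbors(len(board), r, c))

def eye_score(board, r, c, color, fill_penalty=-50, create_bonus=15):
    """Score adjustment for `color` playing at the empty point (r, c).

    The weights are hypothetical and would need tuning against the
    other heuristics of the move evaluator.
    """
    # Penalize filling one of our own eye-like points.
    if is_eye_like(board, r, c, color):
        return fill_penalty
    # Reward each adjacent point that becomes eye-like because of this move.
    after = [row[:] for row in board]
    after[r][c] = color
    created = sum(
        1 for nr, nc in neighbors(len(board), r, c)
        if is_eye_like(after, nr, nc, color)
        and not is_eye_like(board, nr, nc, color)
    )
    return create_bonus * created
```

An AI that ranks candidate moves by a sum of heuristic scores would simply add `eye_score` to that sum, so eye-filling moves drop to the bottom of the ranking and eye-making moves move up.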

On its way to the final version, it generated a Python app of 1200 lines of code. Only one line was wrong, with a trivial typo-like mistake.

If you're interested, I've written up in more detail, including screenshots, how the four LLMs did: https://medium.com/@karlheinz.agsteiner/developing-a-simple-go-game-with-thinking-llms-a-comparison-3b1646d35ee4