@yaxu i see people describe LLMs as algorithms quite often but i'm pretty sure that's not the right word

i suppose they can *mimic* algorithms though

@yaxu @sean_ae tbh we could describe the function of an llm (or any "ai") in algorithmic terms, it's just a stochastic and fractal algorithm... so we can't trace the exact path of input to output, but we can describe in abstract detail what happens to the input to transform it into the output....

and yes, there was a lot of optimistic (naive tbh) writing about tech from a leftist perspective over the preceding 40 years :)

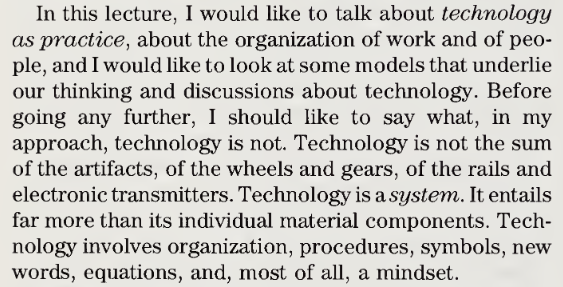

@yaxu idk - do they even qualify as technology?

they're weird, statistical models, not designed or controlled, they just kind of *are*

@sean_ae @yaxu they did that... it was the first deep dream ... (or maybe that's the reference you're making and i'm just missing it lol)

which is a great example, because we know exactly what happens when you model images of dogs and can absolutely describe the process in detail without any black-boxes or "nature" ...

@yaxu idk if you can say designed or controlled really, maybe slightly

you could maybe say built

it's not really a set of instructions, more a set of weights (most of which are not being set intentionally unless you only include human-generated data and even that would be a bit of a stretch imo)

not often i end up in a semantic hole, pls forgive me

@sean_ae @yaxu yes. what is it modeling? what relations between data are important? what does “training” mean in detail? those are all choices that change what is being modeled.

models are a human invention… the dictionary is also an llm, it models the alphabetical relation between words and associates them with definitions …

@sean_ae i.e, if you wanted an alphabetical model that system can't do it... the algorithm for "training a model" as it's commonly used these days is a specific type of modelling... it's not objective or representing any "natural" state of the data. it's using the data to produce a very specific representation.

@emenel @yaxu the network is the thing you are training, the model is a result of training that network

i suppose what you are saying is that since networks are designed, some of that design is affecting the quality of the model (and it is, but i would not call the model designed - otherwise they would actually work, and they frequently don't)

@sean_ae tbh it’s not the quality, it’s the ontology. what is being modeled and what does the model represent? there’s no neutral or objective answer. someone (group, org, corp, etc) designed a system that models a specific kind of data in a specific way.

any and all modeling is designed…

i supsect the word "network" gets in the way a bit here. we use it in a lot of contexts. so would it be better to use a more precise term like "graph" (mathematical kind: nodes and edges) so that you can then begin to get to the next set of clarifying questions? for example, "is this a directed graph (do the edges point in one direction only)?"

also, a graph is the fundamental structure of a "neural network", but can also be both a) the structure of the input data, and b) the structure of the output data.

then if we think of it in these terms, we can start to deal with the other generic, overloaded term, the "model." is it correct to interpret an LLM "model" as the (non-neutral, designed) set of decisions or instructions for how to *traverse* the output graph produced by the LLM?

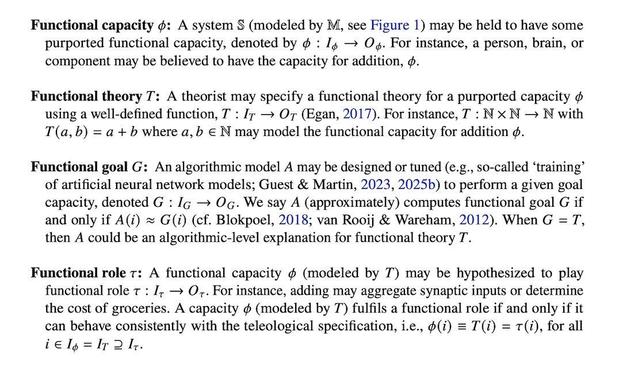

@yaxu @sean_ae @toxi re: specifically this idea of blackboxes and comprehensibility specifically: https://scholar.social/@olivia/116543457466890160

(not suggesting to reopen the conversation :), just thought this my be of interest)

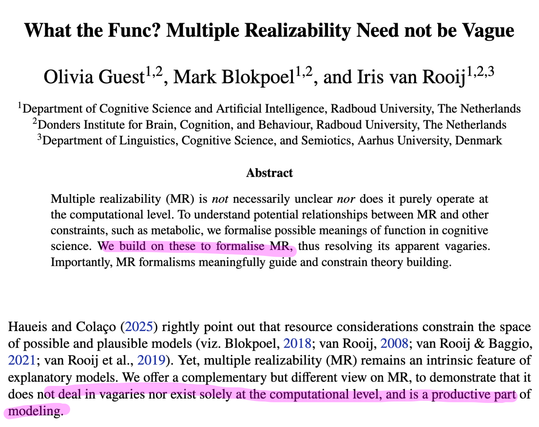

@sean_ae @emenel @yaxu funnily enough I think this is also relevant (notice where we talk about approximation)

Olivia Guest · Ολίβια Γκεστ (@[email protected])

Attached: 2 images I think ~4 years ago, Iris and I 1st sat down and formalised various meanings of function and multiple realizability — both core concepts for any serious computationalist discussions — because in part we realised nobody has done this and/or collected these for cogsci. https://doi.org/10.5281/zenodo.19388964 @Iris thread here for more: https://scholar.social/@Iris/116359421483392573 1/

@sean_ae @emenel @yaxu it's a really short pdf so probably easier to read in your pdf application, but here's a screenshot and sorry myself for not being clear!

Guest, O., Blokpoel, M., & van Rooij, I. (2026). What the func? Multiple Realizability need not be Vague. Zenodo. https://doi.org/10.5281/zenodo.19388964

Olivia Guest, Mark Blokpoel & Iris van Rooij, What the Func? Multiple Realizability Need not be Vague - PhilPapers

Multiple realizability (MR) is not necessarily unclear nor does it purely operate at the computational level. To understand potential relationships between MR and other constraints, such as metabolic, we formalise possible ...

@olivia @sean_ae @emenel @yaxu Between us, if its functional goal is to predict the most probably next word then it is not even able to do that for human language. This is funny to me, because not only is next word prediction not the same as "reasoning, learning, cognition, etc.", but even next word prediction is intractable if one really wants the most probably one. But no-one seems to realise (or care).

https://link.springer.com/article/10.1007/s42113-024-00217-5

Reclaiming AI as a Theoretical Tool for Cognitive Science - Computational Brain & Behavior

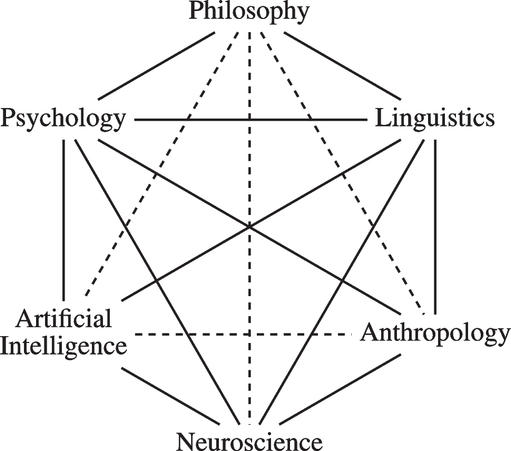

The idea that human cognition is, or can be understood as, a form of computation is a useful conceptual tool for cognitive science. It was a foundational assumption during the birth of cognitive science as a multidisciplinary field, with Artificial Intelligence (AI) as one of its contributing fields. One conception of AI in this context is as a provider of computational tools (frameworks, concepts, formalisms, models, proofs, simulations, etc.) that support theory building in cognitive science. The contemporary field of AI, however, has taken the theoretical possibility of explaining human cognition as a form of computation to imply the practical feasibility of realising human(-like or -level) cognition in factual computational systems, and the field frames this realisation as a short-term inevitability. Yet, as we formally prove herein, creating systems with human(-like or -level) cognition is intrinsically computationally intractable. This means that any factual AI systems created in the short-run are at best decoys. When we think these systems capture something deep about ourselves and our thinking, we induce distorted and impoverished images of ourselves and our cognition. In other words, AI in current practice is deteriorating our theoretical understanding of cognition rather than advancing and enhancing it. The situation could be remediated by releasing the grip of the currently dominant view on AI and by returning to the idea of AI as a theoretical tool for cognitive science. In reclaiming this older idea of AI, however, it is important not to repeat conceptual mistakes of the past (and present) that brought us to where we are today.

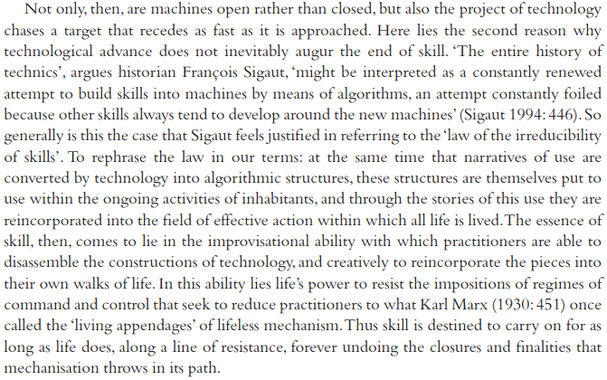

@yaxu this does read optimistic, yeah. even as specific skills are lost, to shovels or to spellcheck or to LLMs writing summarizations of complex texts, etc., the general human (or perhaps, most forms of life?) tendency to try and create new specific skills either around or utilizing the new mechanizations/tools will surely continue.

idk that that worst case Marx bit is really possible in the doom-y interpretation of it, in part because of that drive to create new specific skills.

cool share!

@yaxu i wouldn't read that as optimistic, as he certainly agrees with some skills being lost. the sigaut quote especially shows this, some skills are sucessfully "automated", in the sense that they tend to do what people have done before well enough that corporations can make more profit with the machines rather than with people. but "along a line of resistance" other skills are developed.

re: open vs closed, i'd assume he's talking not about open-source or "malleability" or anything of the like. he's quoting gilbert simondon before this paragraph, who also wrote a lot about the milieu or environments of technics. so in that context open would imo mean something like "interacting with the real world". so the circular saw from the prev paragraph, that is supposed to be a closed machine, sawing wood perfectly the same time every time, is actually open, because the wood that it saws is "imperfect" and has variable density etc.

similarily LLMs are used by real people, run on physical hardware with electricity and water from real sources, etc. a closed technology would be one that can perfectly enclose all parameters of that skill which it aims to automate, which is just something that no machine can ever achieve, certainly not LLMs. i suppose the "imperfections" would in this case be the differences and subleties in human language/writing, the idea to model "reasoning" as a stochastic process in general, etc.

to come back to the skills question again, it would be too early to say what skills people are developing through use of LLMs. that there is some de-skilling taking place is undeniable, the question is just what skills will take those places.