@yaxu i see people describe LLMs as algorithms quite often but i'm pretty sure that's not the right word

i suppose they can *mimic* algorithms though

@yaxu @sean_ae @toxi re: this idea of blackboxes and comprehensibility specifically: https://scholar.social/@olivia/116543457466890160

(not suggesting to reopen the conversation :), just thought this may be of interest)

@sean_ae @emenel @yaxu funnily enough I think this is also relevant (notice where we talk about approximation)

Olivia Guest · Ολίβια Γκεστ (@olivia@scholar.social)

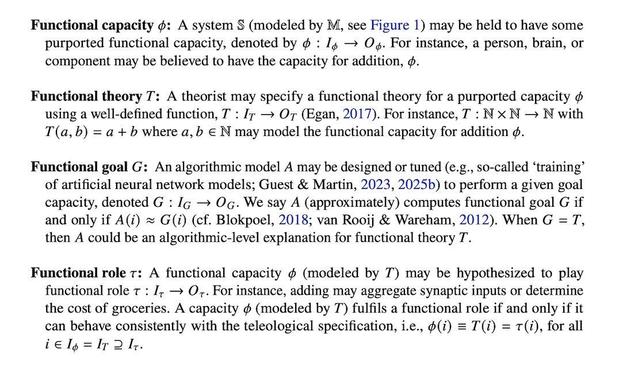

I think ~4 years ago, Iris and I 1st sat down and formalised various meanings of function and multiple realizability, both core concepts for any serious computationalist discussions, because in part we realised nobody has done this and/or collected these for cogsci. https://doi.org/10.5281/zenodo.19388964 @Iris thread here for more: https://scholar.social/@Iris/116359421483392573 1/

@sean_ae @emenel @yaxu it's a really short pdf so probably easier to read in your pdf application, but here's a screenshot, and sorry for not being clear myself!

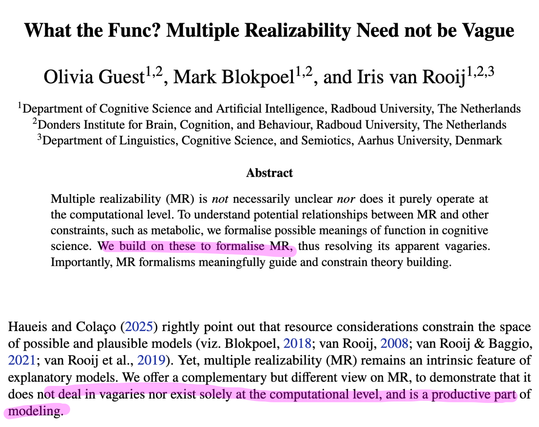

Guest, O., Blokpoel, M., & van Rooij, I. (2026). What the func? Multiple Realizability need not be Vague. Zenodo. https://doi.org/10.5281/zenodo.19388964

Olivia Guest, Mark Blokpoel & Iris van Rooij, What the Func? Multiple Realizability Need not be Vague - PhilPapers

Multiple realizability (MR) is not necessarily unclear nor does it purely operate at the computational level. To understand potential relationships between MR and other constraints, such as metabolic, we formalise possible ...

@olivia @sean_ae @emenel @yaxu Between us, if its functional goal is to predict the most probable next word then it is not even able to do that for human language. This is funny to me, because not only is next word prediction not the same as "reasoning, learning, cognition, etc.", but even next word prediction is intractable if one really wants the most probable one. But no-one seems to realise (or care).

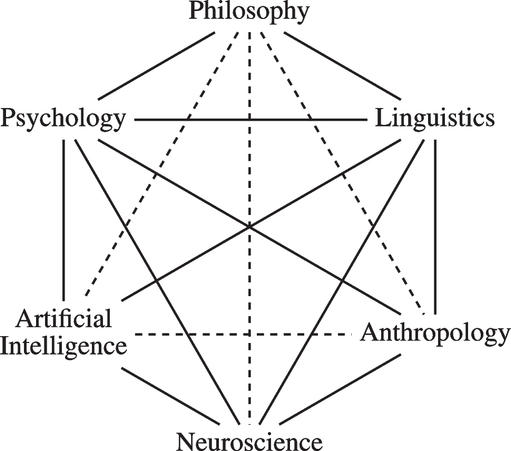

https://link.springer.com/article/10.1007/s42113-024-00217-5

Reclaiming AI as a Theoretical Tool for Cognitive Science - Computational Brain & Behavior

The idea that human cognition is, or can be understood as, a form of computation is a useful conceptual tool for cognitive science. It was a foundational assumption during the birth of cognitive science as a multidisciplinary field, with Artificial Intelligence (AI) as one of its contributing fields. One conception of AI in this context is as a provider of computational tools (frameworks, concepts, formalisms, models, proofs, simulations, etc.) that support theory building in cognitive science. The contemporary field of AI, however, has taken the theoretical possibility of explaining human cognition as a form of computation to imply the practical feasibility of realising human(-like or -level) cognition in factual computational systems, and the field frames this realisation as a short-term inevitability. Yet, as we formally prove herein, creating systems with human(-like or -level) cognition is intrinsically computationally intractable. This means that any factual AI systems created in the short-run are at best decoys. When we think these systems capture something deep about ourselves and our thinking, we induce distorted and impoverished images of ourselves and our cognition. In other words, AI in current practice is deteriorating our theoretical understanding of cognition rather than advancing and enhancing it. The situation could be remediated by releasing the grip of the currently dominant view on AI and by returning to the idea of AI as a theoretical tool for cognitive science. In reclaiming this older idea of AI, however, it is important not to repeat conceptual mistakes of the past (and present) that brought us to where we are today.