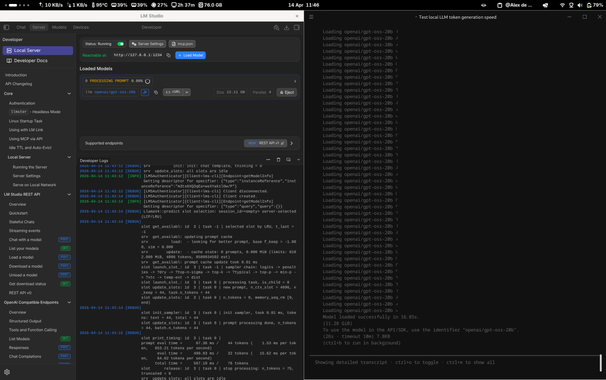

Follow-up on running #LLM locally: I benchmarked 4 models to see if I can actually work while they run

Previous toot: https://framapiaf.org/@lexoyo/116382060378966328

Good news: 3-7B models feel smooth, my laptop stays usable. The GPU handles most of the load.

The 20B model takes 4s before the first word — painful.

Sweet spot on my config: Lucie 7B, fast enough (19 tok/s) and good French.
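For anyone wanting to measure the same two numbers (delay before the first word, then tok/s), here is a minimal sketch. It is not the script behind the figures above: `measure_stream` is a hypothetical helper, and it assumes your local runner exposes generated tokens as a plain Python iterable.

```python
import time
from typing import Callable, Iterable, Tuple


def measure_stream(
    tokens: Iterable[str],
    clock: Callable[[], float] = time.perf_counter,
) -> Tuple[float, float]:
    """Return (seconds before the first token, tokens per second after it).

    `tokens` is assumed to be any iterable that yields tokens as the model
    produces them; `clock` can be swapped for a fake clock in tests.
    """
    start = clock()
    first = None
    last = start
    count = 0
    for _ in tokens:
        last = clock()
        if first is None:
            first = last  # timestamp of the first token
        count += 1
    if first is None:
        raise ValueError("the stream produced no tokens")
    time_to_first = first - start
    generation_time = last - first
    # The first token is excluded from the rate: its cost is the startup delay.
    rate = (count - 1) / generation_time if generation_time > 0 else 0.0
    return time_to_first, rate
```

Keeping the two metrics separate matters here: a model can stream at a decent rate and still feel painful if the first word takes seconds to arrive.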

Surprise: my system already swaps 2GB at idle — that's Firefox, not the AI 😅