@davidgerard I think tools vs genAI also muddies the waters.

Like, I use AI tools - noise reduction is one where a) the wind doesn't need royalties, b) it isn't being ripped off, and c) it's genuinely useful. My hiking videos rely on it, cos however well mic'd you are, wind is gonna wind.

But genAI is where I can't see many, if any, good uses for it. My work - writing and artwork - has been used in models: LAION-5B scraped my artwork (yes, I opted out of the ones I found) and Anthropic trained off a book I contributed to. That's shitty...

And for a while I was like 'well, I'll get my lack of money's worth' and tried using the free versions to create stuff, but it was never that good without a lot of work... the 'presets' ripped off living artists, which I avoided as much as possible by using dead artists for inspiration. But still, not impressed.

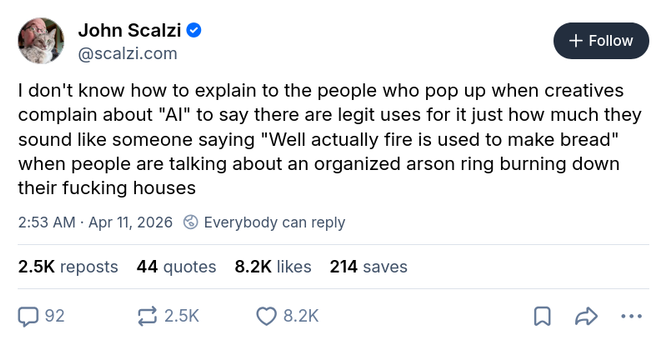

So now my view is: genAI, NOPE; AI tools can be OK if they're not exploitative.

There needs to be a lot more transparency re: training though, and that might need legislation.