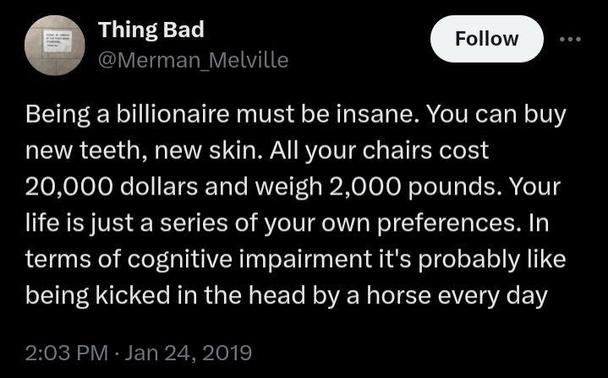

Anyway, the real potential harm of #LLM is, yeah, this cognitive offloading: the constant danger of getting glazed on your possibly-wrong opinions until your brain turns into paste. In short, the danger of LLMs is that they will turn us all into the mental equivalent of rich people.

maybe the best defence against #LLM brain poisoning is having crushingly low self-esteem

@adr I doubt it. Imagine having crushingly low self-esteem and coming across the worst sycophant, one that tells you how great you are and how right you are in every other sentence. Who are you to deny that it might just be right?

@chantaryu2 I mean, fair, yeah. I was just thinking of the kind of low self-esteem where you don't believe it if anyone says anything good about you.

@adr the thing about LLMs is they are unrelenting.

@chantaryu2 Eh. Mine aren't, but mine are little dum-dums that I run on horribly underpowered machines (I refuse to use cloud models for.... some sort of principle) so while they might actually be unrelenting in a sense they are unrelenting on a time schedule that is ... not quick. A Cask of Amontillado situation.

@adr wait...even your AI has low self esteem?

@chantaryu2 nah, just sloooooooow

@adr Maybe less of an issue for those of us who remember a time before LLMs, but I do worry about the next generation(s). Sort of like the generation of kids in their late teens/early 20s who don't recall a time before the global media and political landscape was dominated by TFG.

@mmccollow 100%. oof

@adr I really wonder if this is one of the ulterior motives of the LLM push.