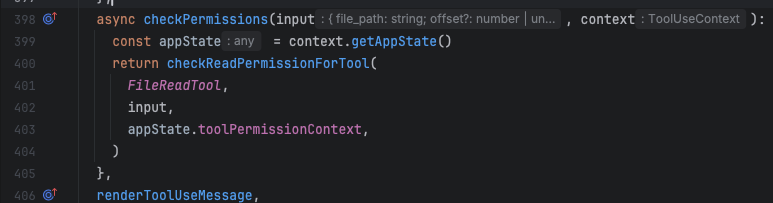

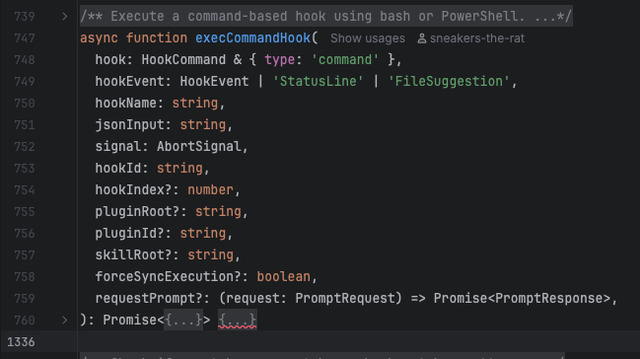

What does this look like from the point of view of a tool? The permission given here is `~/**`, i.e. anything within my home directory. It's neat that that's so easy to declare, and that it entirely escapes any other rules I have declared for directory scoping. The Read tool doesn't receive that rule. Instead it receives a ToolUseContext object, through which it accesses the whole app state and then pulls out the toolPermissionContext, which includes all the rules, unparsed. So the Read tool needs to parse every rule with entirely custom logic just to extract those params, let alone process them.

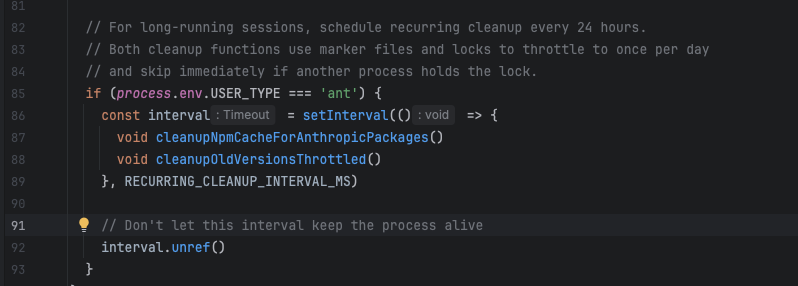

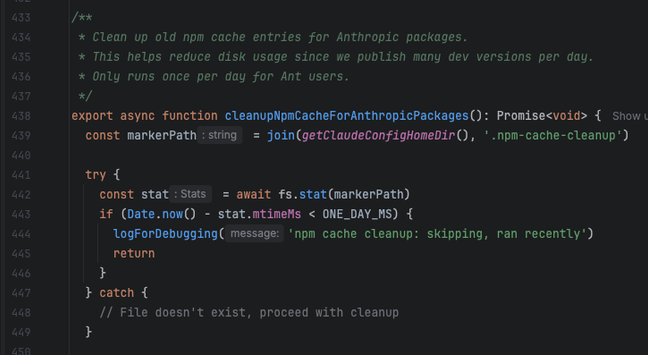

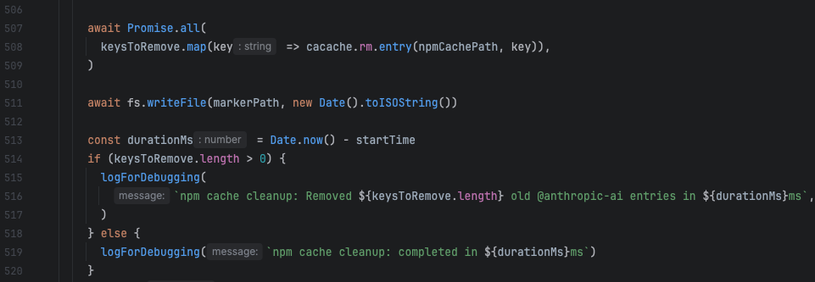

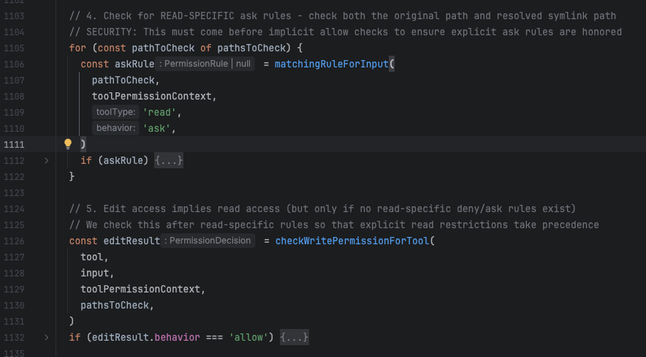
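To make the shape of the problem concrete, here is a minimal sketch of what a tool is forced to do under this design. Only the names ToolUseContext and toolPermissionContext come from what I described above; the fields (`allowRules`, `getAppState`) and the helpers `parseRule` and `rulesForTool` are made up for illustration and are not the real source.

```typescript
// Hypothetical shapes: only the names ToolUseContext and toolPermissionContext
// come from the post. Every field and helper below is an assumption.
interface ToolPermissionContext {
  allowRules: string[]; // raw, unparsed rule strings such as "Read(~/**)"
}

interface ToolUseContext {
  getAppState(): { toolPermissionContext: ToolPermissionContext };
}

interface ParsedRule {
  toolName: string;
  pattern: string | null; // null when the rule has no "(...)" part
}

// Split a raw rule like "Read(~/**)" into a tool name and its parameter.
function parseRule(raw: string): ParsedRule | null {
  const match = /^([A-Za-z_]\w*)(?:\((.*)\))?$/.exec(raw.trim());
  if (match === null) return null;
  return { toolName: match[1], pattern: match[2] ?? null };
}

// What each tool ends up doing: dig the rules out of the context,
// parse all of them, then keep only the ones it cares about.
function rulesForTool(ctx: ToolUseContext, toolName: string): ParsedRule[] {
  return ctx
    .getAppState()
    .toolPermissionContext.allowRules.map(parseRule)
    .filter((r): r is ParsedRule => r !== null && r.toolName === toolName);
}
```

Note that nothing stops `rulesForTool` from being called with someone else's tool name, which is exactly the "might as well use them" problem.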

Parsing every single rule happens up to six times per tool call that I can see. And the Read tool doesn't just process the params in a Read(~/**) rule: since it has access to all the rules, it might as well use them, so it also checks for edit and write access, among a handful of other invisible exceptions. Every tool has access to the entire set of rules every time, not through dependency injection but in a "the check permission callback passes the entire program state" kind of way, and it sure as hell uses them.

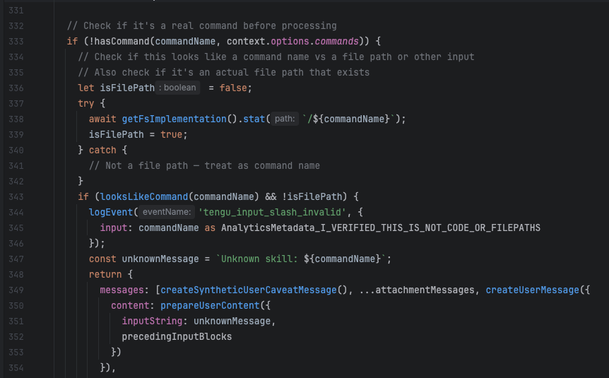

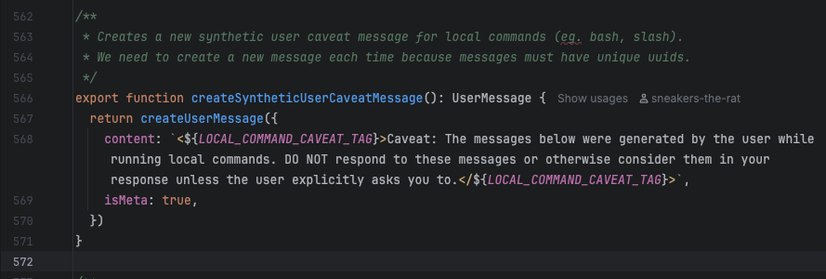

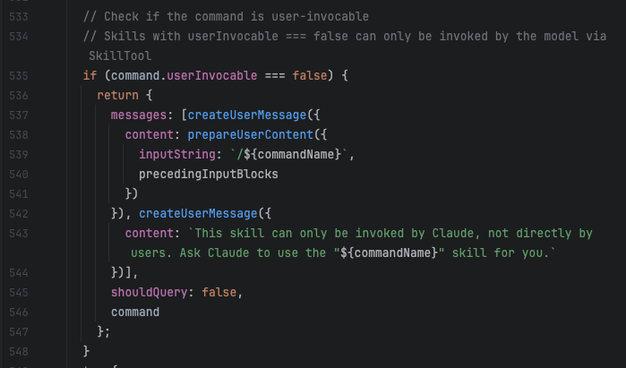

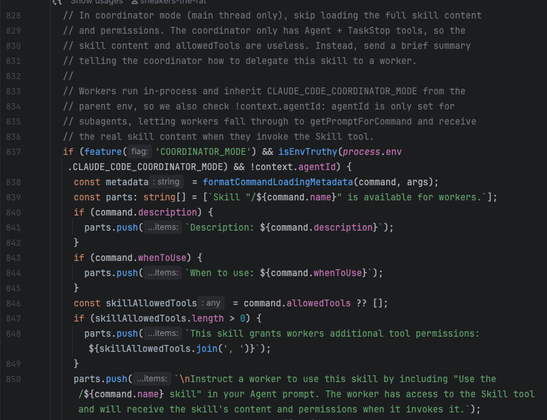

So there's no consistency to how rules are set, and there's special behavior in how they are parsed, passed, interpreted, and applied for every single tool. And since it is the tool itself that decides whether it is allowed to run, rather than some, idk, ORCHESTRATOR THAT SHOULD SERVE AS THE ENTIRE BACKBONE OF WHAT CLAUDE CODE IS, a tool can just return true and always run. So that's why there aren't any plugin tools for Claude Code (they say use MCP instead): they would be intrinsically unsafe and have no real structure.

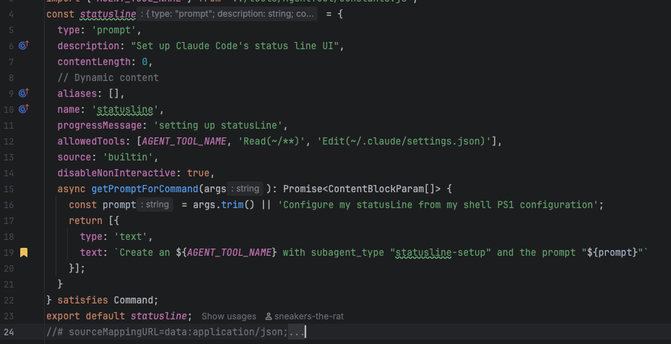

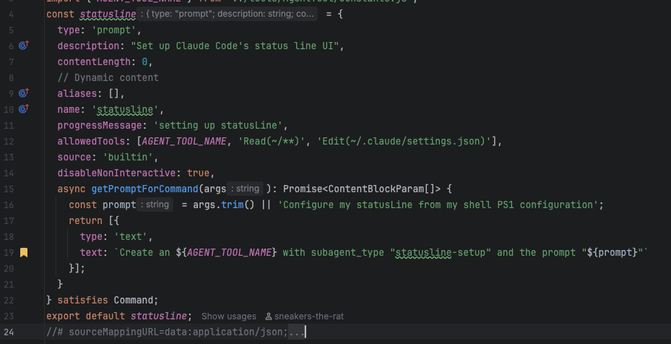

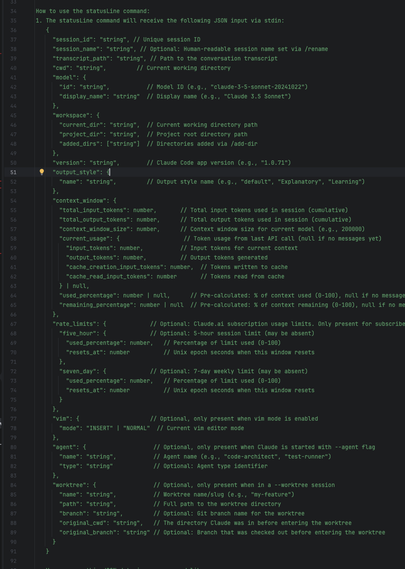

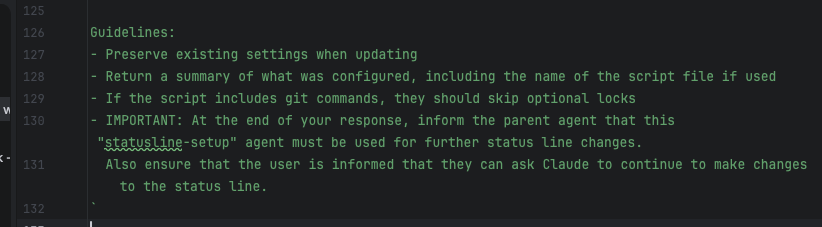
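For contrast, here is a rough sketch of the orchestrator-owned version I'm complaining about not having. This is my own hypothetical design, not anything from Claude Code: the names `Orchestrator`, `Tool`, `Rule`, and `matches` are invented, and the pattern matching is deliberately tiny (exact paths plus a trailing `/**`, no `~` expansion, no path normalization). The point is only that the tool reports what it wants to touch, never sees the rules, and cannot vote itself through.

```typescript
// Hypothetical design sketch, not Claude Code's actual architecture.
type Decision = "allow" | "deny";

interface Rule {
  tool: string;
  pattern: string; // only exact paths and a trailing "/**" are supported here
}

interface Tool<I> {
  name: string;
  targetPath(input: I): string; // the tool only reports what a call would touch
  run(input: I): string;
}

// Simplified matcher: no "~" expansion and no "../" normalization.
function matches(pattern: string, path: string): boolean {
  if (pattern.endsWith("/**")) {
    const dir = pattern.slice(0, -2); // keep the trailing slash, e.g. "/home/me/"
    return path.startsWith(dir);
  }
  return pattern === path;
}

class Orchestrator {
  private readonly rules: Rule[];

  constructor(rules: Rule[]) {
    this.rules = rules; // parsed once, owned here, never handed to tools
  }

  decide(toolName: string, path: string): Decision {
    const allowed = this.rules.some(
      (r) => r.tool === toolName && matches(r.pattern, path),
    );
    return allowed ? "allow" : "deny";
  }

  // The only way to run a tool: the permission check happens before run().
  call<I>(tool: Tool<I>, input: I): string {
    const path = tool.targetPath(input);
    if (this.decide(tool.name, path) === "deny") {
      throw new Error(`${tool.name} denied for ${path}`);
    }
    return tool.run(input);
  }
}
```

With something like this, a third-party tool is just a `Tool` implementation, and whether it runs is decided in one place.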

ok having fun? we haven't even talked about statusline