@KitsuneofInari I love you Krita! And all the developers, too!

Been backing Affinity for the longest time, since nobody has to wonder about Adobe's position on AI ("we're already vibing our source code, use Firefly!"). Affinity 3.0 was the biggest disappointment.

Thank you for this.

@Li @KitsuneofInari So... Nobody can really understand code fully, not even the code they have written. Code has become too complex, and there is just too much of it!

But if you have thought about what you were writing, if you have recorded your thinking in commit messages and comments...

There is a chance you might have a recollection of having created that code when debugging it, ten years later.

If you asked Claude to regexp-slop it for you, no chance.

@Li @KitsuneofInari Also, the fun part of writing software is thinking, coding, testing, seeing people use it...

Doing code-review, not so much. That's what you do to help other people to level up.

But with LLM-generated code, where are the people you want to mentor?

But you still have to code-review the swill.

@halla @Li @KitsuneofInari This is tantamount to saying don’t use tools, or libraries, or graphics cards, if you don’t review their code. I can’t agree with this sentiment. The real issue is the broken trust and overreach of agentic tools.

Should I ditch the Wacom and pick up a papyrus roll and feather quill instead because I don’t review the Krita source code or my tablet’s circuits? No, I trust the authors and their community.

So the question in my mind is: in what skilled hands, with which LLM, and to what extent, does it fall within the boundaries of acceptable use?

@halla @Li @KitsuneofInari I completely respect the decision of the maintainers, and think it’s better to have a clear policy than let the debate stew and simmer. Given the user base and sentiment against the GenAI topic, it’s possibly even the right move.

I just want to see an even better justification, like: we don’t add code, we tactically remove it. Our code base is a sculpture, and LLMs aren’t much help here.

Or even: as you kind of wrote above, Krita is an expression of the joy of art through the joy of coding. We aren’t feeling the vibes when you PR us your lobster soup, but feel free to make your own painting program from scratch. Call it Pincha, if you want!

@loleg I don't think it's tantamount at all.

LLMs aren't a vital part of the development process, and they have never been... You can pretty much get all the supposed "benefits" from "agentic tools" without actually using "agentic tools", and you avoid all the hassle that they can bring in the long run.

Also the examples you gave aren't really equivalent?

I can trust Krita maintainers and community, I cannot trust slop machines owned by private companies.

As a human, you need to do the work.

@Li @KitsuneofInari I think the main issue is not the code you design and write, but that it's interacting with code and users you don't fully know. Often the requirements or assumptions behind the code you change, or the code you interact with, are not documented. Often users don't tell you what they really want. Or they don't know that a small action they left out of the error report is the key to understanding the bug.

Good software developers are not nerds or code monkeys, but good mentalists.

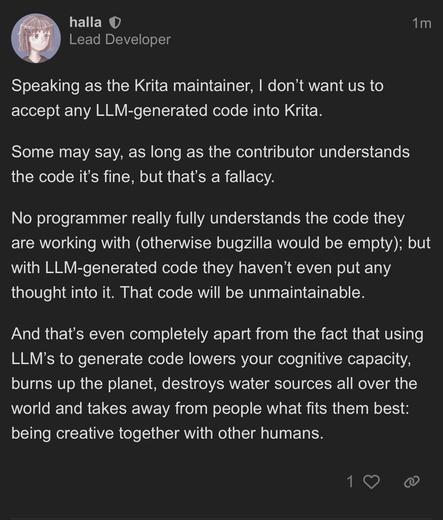

@KitsuneofInari I checked and here's the post ♥️ @halla

Policy on LLM code?

Speaking as the Krita maintainer, I don’t want us to accept any LLM-generated code into Krita. Some may say, as long as the contributor understands the code it’s fine, but that’s a fallacy. No programmer really fully understands the code they are working with (otherwise bugzilla would be empty); but with LLM-generated code they haven’t even put any thought into it. That code will be unmaintainable. And that’s even completely apart from the fact that using LLM’s to generate code lowers your co...

@KitsuneofInari thx Krita!

I think many fall for a misconception here.

The code good developers write is a result of their understanding of the code that already exists and the way they intend to improve it.

If that code is generated, it is no longer a testament to somebody understanding what is going on. It becomes a "this feels like it does what I want".

@GezThePez It is not, no.

@ulyssesalmeida @KitsuneofInari Aside from them all slapping their branding on it? Poor code quality. LLMs don't know how to call existing functions; they just write new code with some stab at the same functionality. Heck, they're finding Claude Code is vibe-coded by Claude itself and it's so incapable of calling functions that it's literally re-prompting itself instead.

If that's any indication, detecting generated code is as simple as a gut check. If your guts are wringing your lunch back out, it's generated. XD

@ulyssesalmeida @KitsuneofInari I mean one would also hope someone has the morals to disclose it if it's for some reason not immediately caught, but then Claude Code also has a special stealth mode only accessible to Anthropic employees, so probably not.

Ultimately, "no" means "no."

And frankly anyone violating that consent can take a big leap on a balance beam and land on both sides.

@KitsuneofInari That’s a really eloquent way to describe my issue with the “as long as you understand the code” argument.

That never mattered.

I won’t merge code written entirely by a human just because they understand it. Because what matters is whether, after an isekai accident, someone new will be able to work with it.

"No programmer really fully understands the code they are working with".

put that next to

"You can and must understand computers NOW"

@JauneBaguette @yunohost I think it's more of a development policy than anything else. Each project handles it however it wants, and we're seeing that in open source right now: some accept it under certain conditions, others reject it outright.

But the Krita dude still has an excellent point, these LLMs turn your brain to mush.

@KitsuneofInari @halla it is heartwarming to see such integrity from great FOSS projects.

Keep up the awesome work!

🌱
