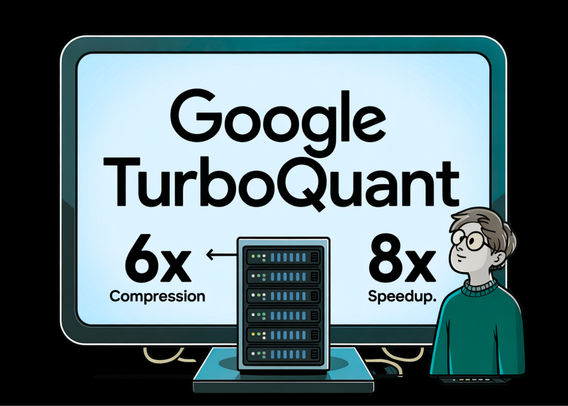

Google researchers have introduced TurboQuant, a compression algorithm that shrinks the memory used by an LLM's key-value (KV) cache by 6x and delivers up to an 8x speedup with no loss in accuracy. Because the approach is data-oblivious, it needs no dataset-specific tuning, and it is designed with GPU compatibility in mind. https://www.marktechpost.com/2026/03/25/google-introduces-turboquant-a-new-compression-algorithm-that-reduces-llm-key-value-cache-memory-by-6x-and-delivers-up-to-8x-speedup-all-with-zero-accuracy-loss/ #AIagent #AI #GenAI #AIInfrastructure #Google
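To show what "data-oblivious" means in practice, here is a minimal Python sketch of a generic rotate-then-quantize scheme. It is an illustration only and is not TurboQuant's published algorithm. Each KV vector is rescaled to unit norm, mixed by a fixed random orthogonal transform, and then every coordinate is rounded to the same fixed 4-bit grid. The transform here is random sign flips followed by a Walsh-Hadamard transform, which is our own choice for simplicity. Nothing is fitted to a dataset: the transform depends only on a seed, and the grid depends only on the vector dimension. All function names (`rotate`, `quantize`, `dequantize`, etc.) and parameters (`bits`, `clip`, `seed`) are hypothetical.

```python
import math
import random


def fwht(vec):
    """Unnormalized fast Walsh-Hadamard transform; len(vec) must be a power of two."""
    v = list(vec)
    n = len(v)
    h = 1
    while h < n:
        for i in range(0, n, 2 * h):
            for j in range(i, i + h):
                a, b = v[j], v[j + h]
                v[j], v[j + h] = a + b, a - b
        h *= 2
    return v


def random_signs(dim, seed):
    """Random +/-1 diagonal fixed by a seed, not fitted to any data (hypothetical helper)."""
    rng = random.Random(seed)
    return [rng.choice((-1.0, 1.0)) for _ in range(dim)]


def rotate(x, signs):
    """Orthogonal map x -> H D x / sqrt(d), with D = diag(signs)."""
    scale = math.sqrt(len(x))
    return [y / scale for y in fwht([s * xi for s, xi in zip(signs, x)])]


def unrotate(y, signs):
    """Inverse map y -> D H y / sqrt(d), since H is symmetric and H H = d I."""
    scale = math.sqrt(len(y))
    return [s * xi / scale for s, xi in zip(signs, fwht(y))]


def _grid(dim, bits, clip):
    """Fixed uniform grid on [-clip/sqrt(d), clip/sqrt(d)]; depends only on d, bits, clip."""
    lim = clip / math.sqrt(dim)
    return lim, 2 * lim / (2 ** bits - 1)


def quantize(x, signs, bits=4, clip=3.0):
    """Return (norm, codes): one float per vector plus one `bits`-bit code per coordinate."""
    norm = math.sqrt(sum(xi * xi for xi in x))
    if norm == 0.0:
        return 0.0, [0] * len(x)
    # The rotated unit vector's coordinates have average square exactly 1/d,
    # and the rotation spreads energy across them, so one fixed grid serves
    # every vector without any calibration data.
    y = rotate([xi / norm for xi in x], signs)
    lim, step = _grid(len(x), bits, clip)
    return norm, [round((min(max(yi, -lim), lim) + lim) / step) for yi in y]


def dequantize(norm, codes, signs, bits=4, clip=3.0):
    """Map codes back to grid values, undo the rotation, and restore the norm."""
    lim, step = _grid(len(codes), bits, clip)
    y = [c * step - lim for c in codes]
    return [norm * xi for xi in unrotate(y, signs)]


if __name__ == "__main__":
    rng = random.Random(0)
    key = [rng.gauss(0.0, 1.0) for _ in range(64)]  # stand-in for one 64-dim key vector
    signs = random_signs(64, seed=42)
    norm, codes = quantize(key, signs)
    approx = dequantize(norm, codes, signs)
    dot = sum(a * b for a, b in zip(key, approx))
    cos = dot / (math.sqrt(sum(a * a for a in key)) * math.sqrt(sum(b * b for b in approx)))
    print(f"cosine similarity after 4-bit round trip: {cos:.4f}")
```

This toy stores 4-bit codes plus one 32-bit norm per 64-dimensional vector, which comes to 288 bits instead of 1,024 bits for FP16. That is roughly a 3.6x reduction. Against a 16-bit baseline, matching the reported 6x would require averaging about 16/6 ≈ 2.7 bits per coordinate.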

Google Introduces TurboQuant: A New Compression Algorithm that Reduces LLM Key-Value Cache Memory by 6x and Delivers Up to 8x Speedup, All with Zero Accuracy Loss
