@bms48 @mcc @trashheap I think that's a mischaracterization of what's being said here. I believe there are two main points of opposition (if I may, and I hope *I'm* not mischaracterizing in this summary):

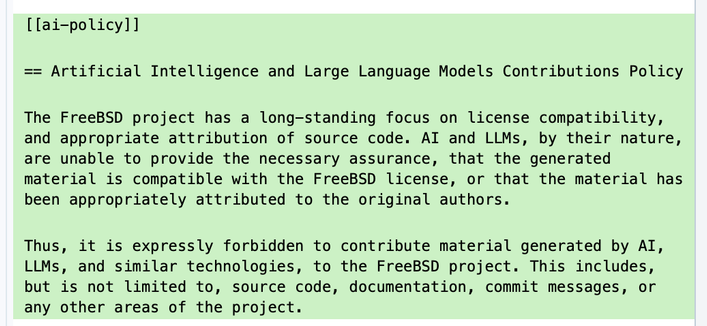

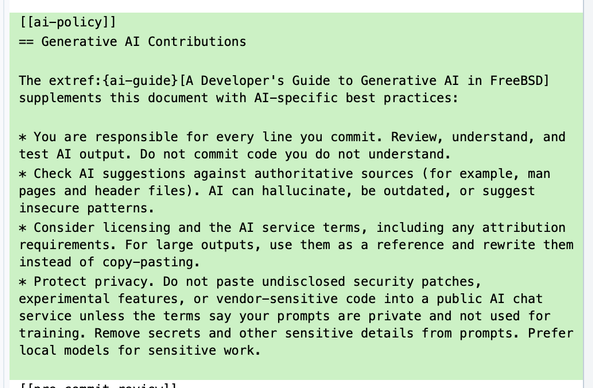

1. No amount of risk associated with generative AI/LLM contributions is acceptable (where risk includes potential copyright or license issues as well as the risk of introduced bugs or vulnerabilities);

2. Merely participating in the project shouldn't incur more LLM usage.

To the first point, the legal framework in the UK is only material for users in the UK; US courts haven't yet established enough precedent on whether machine-generated code is even copyrightable. The EU seems to be leaning towards "uncopyrightable," but hasn't fully established rules either. The more those contributions are accepted now, before a legal framework is fully established, the greater the risk not just assumed by developers but pushed out towards users who may not have the same appetite for that risk. And that doesn't even account for the bugs. Or the new bugs the LLMs produce when they're told to fix the old ones.

To the second point, the current stance of a nonzero number of developers is that the barn door, having been forced open, shouldn't be allowed to close again, and they like to call people Luddites for worrying about the conditions under which new horses are to be introduced. I don't know why the people who don't care about moral or legal issues with LLM usage are the only people whose opinion seems to matter.