A few thoughts on Astral / OpenAI, now that the emotions have sat for a bit.

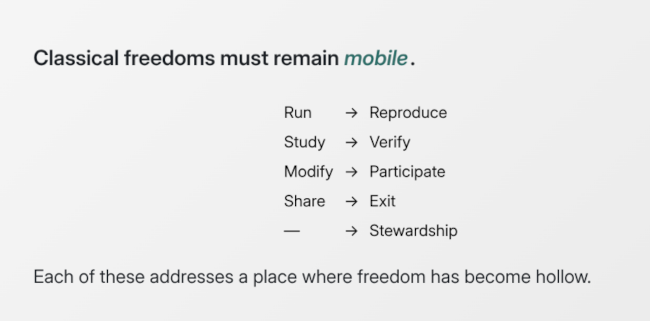

First, let me start by noting that AI is an attack on open source, inherently, by necessity, and at a structural level. That argument is bigger than Astral, but the short version is that you cannot simultaneously expand the public commons and work towards its enclosure; moreover, if the public commons does not stand for the public good, then it's not really a commons any more.