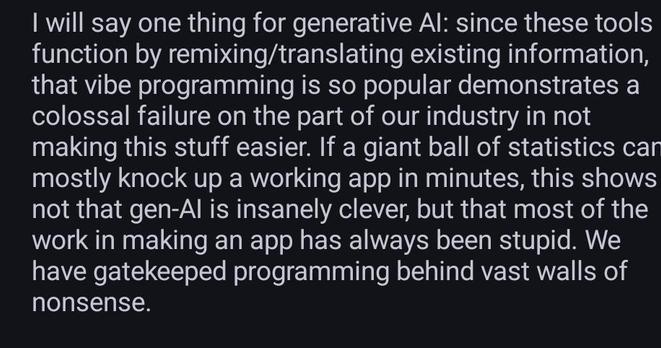

@TheOneDoc I feel it's not that most people can't code; they can do it just fine. It's just that most people, in the pursuit of writing code and earning money, don't give any thought or importance to things like the politics behind free software, the issues surrounding copyright law, and how code gives an individual immense power and freedom that can rival the impact of a corporation.

Among my major sources of inspiration for choosing computer science and getting interested in free software were the documentaries TPB AFK and The Internet's Own Boy: The Story of Aaron Swartz, not money or lines of code or cool software features or "blazing fast" performance or memory safety.

One of the (flawed) arguments I've read from people who cheer for LLMs is that they "democratize" access to knowledge and "liberate" it from the shackles of copyright.

It's unsurprising to see that people don't realise or care where these LLMs get their data from (by DDoSing websites), but it is surprising to see them not realise who ultimately controls these models, the software, and the training data behind them. Claude can't "democratize" knowledge because it's limited by the number of tokens one has access to, and the same goes for all the other LLMs. The access may be generous and subsidized at present because of a desire to make people dependent on it, but it won't be that way forever. I mean, we have seen this pattern before with streaming platforms as well, but somehow the temptation in this case is strong enough that people ignore the wisdom they may have gained in the past? It's baffling.

The argument that LLMs liberate knowledge from copyright restrictions is hilarious. Apparently, the actions of corporations and firms who lobby for copyright and DRM aren't enough to make people realise that copyright can always be used by those in power to oppress individuals when it suits them, even at present. I mean, good luck defending oneself in court if a corporation decides that your LLM-generated software infringes on their copyright. Individuals, however, have never wielded such power and never will. All LLMs do is pit individuals against each other and give corporations even more power to violate copyright when it suits them and harass people when it doesn't.

@raulinbonn @liw