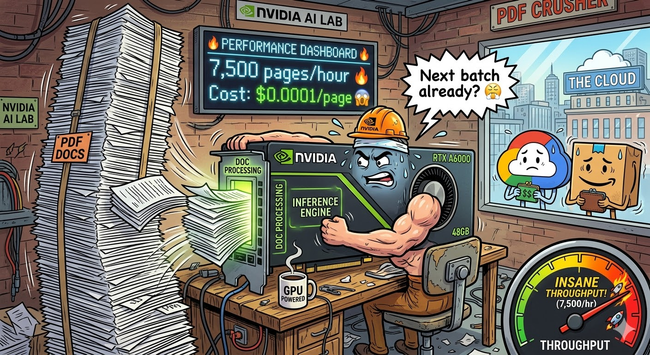

🚀 Running #OCR at scale with a #Vision #LLM for $0.49/hour

Just deployed dots.ocr (a 3B-parameter Vision LLM by RedNote) on a single #RTX A6000 (48 GB VRAM) via #RunPod. The results are great:

https://github.com/rednote-hilab/dots.ocr

📄 The Setup

- Upload any #PDF → server converts each page to an image (PyMuPDF)

- Images are sent in parallel to #vLLM (continuous batching)

- The Vision LLM reads each page and returns clean Markdown
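
For the curious, here is a minimal Python sketch of those three steps. It is not the deployed code. It assumes vLLM is serving the model through its OpenAI-compatible chat endpoint; the URL, served model name, prompt text, DPI, and concurrency limit are all placeholder assumptions to adjust for your own setup.

```python
import asyncio
import base64
import json
import urllib.request

# Assumptions (not from the original post): endpoint, model name, and prompt.
VLLM_URL = "http://localhost:8000/v1/chat/completions"
MODEL = "rednote-hilab/dots.ocr"
PROMPT = "Convert this page to Markdown."  # hypothetical prompt


def render_pages(pdf_path, dpi=150):
    """Render every PDF page to PNG bytes with PyMuPDF."""
    import fitz  # PyMuPDF; imported lazily so the rest runs without it

    with fitz.open(pdf_path) as doc:
        return [page.get_pixmap(dpi=dpi).tobytes("png") for page in doc]


def build_payload(png_bytes):
    """Build an OpenAI-style chat request carrying one page image."""
    b64 = base64.b64encode(png_bytes).decode("ascii")
    return {
        "model": MODEL,
        "messages": [{
            "role": "user",
            "content": [
                {"type": "image_url",
                 "image_url": {"url": f"data:image/png;base64,{b64}"}},
                {"type": "text", "text": PROMPT},
            ],
        }],
    }


def ocr_page(png_bytes):
    """Send one page to the server and return the generated text."""
    req = urllib.request.Request(
        VLLM_URL,
        data=json.dumps(build_payload(png_bytes)).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(req) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]


async def ocr_pdf(pdf_path, max_in_flight=16):
    """OCR all pages concurrently; the server batches the in-flight requests."""
    pages = render_pages(pdf_path)
    sem = asyncio.Semaphore(max_in_flight)  # cap on simultaneous requests

    async def one(png):
        async with sem:
            # Run the blocking HTTP call in a worker thread.
            return await asyncio.to_thread(ocr_page, png)

    # gather() returns results in page order.
    return await asyncio.gather(*(one(p) for p in pages))
```

Usage would look like `markdown_pages = asyncio.run(ocr_pdf("report.pdf"))`, where `report.pdf` is a stand-in file name. The client only has to keep enough requests in flight; continuous batching on the vLLM side does the rest.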

🧵 👇