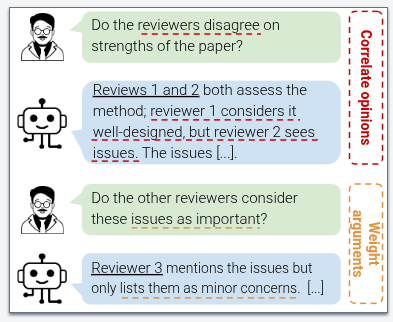

🔥 𝗠𝗲𝘁𝗮-𝗿𝗲𝘃𝗶𝗲𝘄𝗶𝗻𝗴 𝗶𝘀 𝗺𝗼𝗿𝗲 𝘁𝗵𝗮𝗻 𝘀𝘂𝗺𝗺𝗮𝗿𝗶𝘇𝗮𝘁𝗶𝗼𝗻: 𝗶𝘁’𝘀 𝗱𝗲𝗰𝗶𝘀𝗶𝗼𝗻-𝗺𝗮𝗸𝗶𝗻𝗴.

In our new paper, “𝗗𝗲𝗰𝗶𝘀𝗶𝗼𝗻-𝗠𝗮𝗸𝗶𝗻𝗴 𝘄𝗶𝘁𝗵 𝗗𝗲𝗹𝗶𝗯𝗲𝗿𝗮𝘁𝗶𝗼𝗻: 𝗠𝗲𝘁𝗮-𝗿𝗲𝘃𝗶𝗲𝘄𝗶𝗻𝗴 𝗮𝘀 𝗮 𝗗𝗼𝗰𝘂𝗺𝗲𝗻𝘁-𝗴𝗿𝗼𝘂𝗻𝗱𝗲𝗱 𝗗𝗶𝗮𝗹𝗼𝗴𝘂𝗲”, we ask how AI can support meta-reviewers 𝗶𝗻 𝘁𝗵𝗲 𝗱𝗲𝗰𝗶𝘀𝗶𝗼𝗻 𝗽𝗿𝗼𝗰𝗲𝘀𝘀 — 𝗻𝗼𝘁 𝗷𝘂𝘀𝘁 𝗶𝗻 𝘄𝗿𝗶𝘁𝗶𝗻𝗴 𝘁𝗵𝗲 𝗳𝗶𝗻𝗮𝗹 𝗿𝗲𝗽𝗼𝗿𝘁.