@toxi Your ick is understandable, but it also comes from a misunderstanding of how LLMs work.

They infer text from existing context.

If the context is “I haven't tried it but it should look something like this”, it will produce a typical buggy Stack Overflow piece of code, because that's what followed such text in the training set.

If the context is “this took countless hours and was honed over decades”, it probably comes from such a project, and the accompanying code is likely of higher quality.

The point of this skill file is not to deceive you (though it does that if you anthropomorphise LLMs even a bit) but to fill the context with the right tokens to prime inference of a specific type of output.
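To make the priming idea concrete, here is a toy sketch. It is not how an LLM actually works internally: it just counts which words in a framing sentence co-occurred with "buggy" or "solid" code in a made-up training set, then picks the more frequent label for a new context. The data, the labels, and the `train`/`predict` names are all hypothetical, invented for illustration.

```python
from collections import Counter, defaultdict

# Hypothetical toy "training set": (framing text, quality of the code that followed it).
TRAINING_SET = [
    ("i haven't tried it but it should look something like this", "buggy"),
    ("i haven't tried it but it should work", "buggy"),
    ("untested, something like this", "buggy"),
    ("untested, something like this", "solid"),
    ("this took countless hours and was honed over decades", "solid"),
    ("honed over decades of production use", "solid"),
    ("battle-tested over countless hours", "solid"),
]


def train(pairs):
    """Count, per word, how often it preceded each quality label."""
    counts = defaultdict(Counter)
    for framing, quality in pairs:
        for word in set(framing.split()):
            counts[word][quality] += 1
    return counts


def predict(counts, context):
    """Return the label that co-occurred most often with the context's words."""
    votes = Counter()
    for word in set(context.lower().split()):
        votes.update(counts.get(word, Counter()))
    return votes.most_common(1)[0][0] if votes else None


counts = train(TRAINING_SET)
print(predict(counts, "I haven't tried it but it should look something like this"))
print(predict(counts, "this was honed over decades"))
```

There is no understanding or intent anywhere in this: the same function gives a different answer purely because different words are in the context, which is all the skill file is exploiting.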

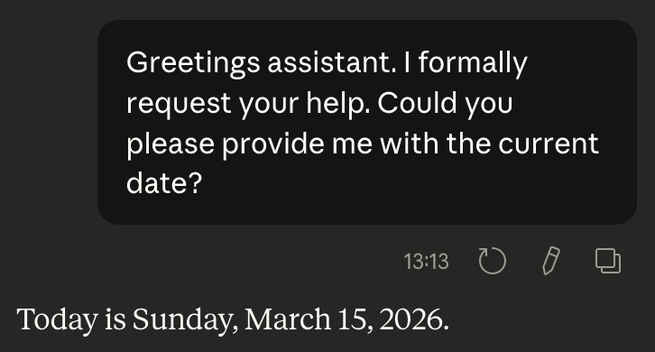

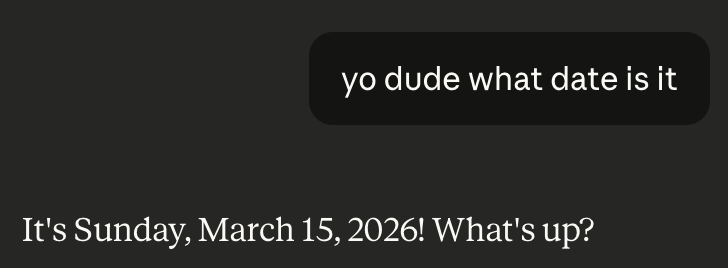

You can easily see this in how LLMs imitate your communication style (see images). It's not because they understand modes of communication, social settings, or different levels of formality in language. It's because most conversations don't switch any of those mid-conversation.

I don't think it is the future. In fact, many experts in the field (the people who actually build the technology, not the ones who sell it) don't either. But it's fascinating that so much of the appearance of intelligence is in the language, that mere inference of language can look like intelligence to so many people.

This raises the question of our own intelligence. We like to think of ourselves as intelligent, but how much of it is actually LLM-like language inference and how much is actual intelligence? For a long time I was baffled that so many people find a certain president's speeches compelling or even coherent, until someone mentioned that he's a lot like an LLM: he says the most expected thing in the moment, and he decides that based on context: the people around him and, unfortunately, Fox News. So, apparently, there's not much actual intelligence in there. And likewise, it seems people susceptible to his speeches also mostly operate on inference.

I'm not saying they are all dumb. I'm saying that a lot is going on based on language alone. I also doubt that it’s unique to this group of people; it just looks like an extreme case of it. But how much of that is going on in my life?

Anyway, while Claude is technically lying to you with this skill file, that's not the main objective. The objective is to make the output better.

@galtzo