Today's threads (a thread)

Inside: Three more AI psychoses; and more!

Archived at: https://pluralistic.net/2026/03/12/normal-technology/

1/

Hey look at this

* E is for.... Enshittification https://www.evanshunt.com/enshittification/

* Calicornication: Postcards of Giant Produce (1909) https://publicdomainreview.org/collection/giant-produce-postcards/

* Organized Money: Why Your Lamp Sucks https://prospect.org/2026/03/11/organized-money-lamps-lighting-mid-century-modeline-history/

* The Live Nation settlement has industry insiders baffled https://www.theverge.com/policy/893272/live-nation-ticketmaster-doj-settlement-states

* Public speakerphone use is officially out of control https://arstechnica.com/culture/2026/03/explain-it-like-im-5-why-is-everyone-on-speakerphone-in-public/

3/

#15yrsago Notorious financier gets a “super-injunction” prohibiting the press from revealing that he is a banker https://www.telegraph.co.uk/finance/newsbysector/banksandfinance/8373535/Sir-Fred-Goodwin-former-RBS-chief-obtains-super-injunction.html

#10yrsago Shortly after her death, Harper Lee’s heirs kill cheap paperback edition of To Kill a Mockingbird https://newrepublic.com/article/131400/mass-market-edition-kill-mockingbird-dead

#10yrsago Web security company breached, client list (including KKK) dumped, hackers mock inept security https://arstechnica.com/information-technology/2016/03/after-an-easy-breach-hackers-leave-tips-when-running-a-security-company/

4/

#10yrsago Microsoft spams corporate users with messages denigrating their IT departments https://web.archive.org/web/20160309195537/https://www.infoworld.com/article/3042397/microsoft-windows/admins-beware-domain-attached-pcs-are-sprouting-get-windows-10-ads.html

#10yrsago Cycle and Recycle: gorgeous photos of the European recycling process https://www.wired.com/2016/03/paul-bulteel-cycle-recyle-europe-recycles-tons-of-waste-and-its-pretty-gorgeous/

#10yrsago Fellowships for “Robin Hood” hackers to help poor people get access to the law https://web.archive.org/web/20160304221459/https://labs.robinhood.org/fellowship/

#10yrsago 3D printed battle-armor for cats https://web.archive.org/web/20160311224139/http://sinkhacks.com/making-3d-printed-cat-armor/

5/

#10yrsago Great moments in the history of black science fiction https://web.archive.org/web/20160308034421/http://www.fantasticstoriesoftheimagination.com/a-crash-course-in-the-history-of-black-science-fiction/

#1yrago Daniel Pinkwater's "Jules, Penny and the Rooster" https://pluralistic.net/2025/03/11/klong-you-are-a-pickle-2/#martian-space-potato

6/

Yesterday's threads: AI "journalists" prove that media bosses don't give a shit; and more!

https://mamot.fr/@pluralistic/116212586418986591

7/

My latest novel is "Picks and Shovels," a historical technothriller set in the Weird Era of the PC, about Ponzi schemes, techbros, and the dawn of enshittification:

https://us.macmillan.com/books/9781250865908/picksandshovels

--

My latest nonfiction book is the internationally bestselling "Enshittification: Why Everything Suddenly Got Worse and What to Do About It," from MCD/Farrar, Straus and Giroux:

https://us.macmillan.com/books/9780374619329/enshittification/

8/

My ebooks and audiobooks (from FSGxMCD, Tor Books, Head of Zeus, McSweeneys, Beacon, Verso and others) are for sale all over the net, but I sell 'em too, and when you buy 'em from me, I earn twice as much and you get books with no DRM and no license "agreements."

9/

Upcoming appearances:

* #Barcelona: Enshittification with Simona Levi/Xnet (Llibreria Finestres), Mar 20

https://www.llibreriafinestres.com/evento/cory-doctorow/

* #Berkeley: Bioneers keynote, Mar 27

https://conference.bioneers.org/

* #Montreal: Bronfman Lecture (McGill) Apr 10

https://www.eventbrite.ca/e/artificial-intelligence-the-ultimate-disrupter-tickets-1982706623885

* #London: Resisting Big Tech Empires (LSBU)

https://www.tickettailor.com/events/globaljusticenow/2042691

10/

Upcoming appearances (cont'd):

* #Berlin: Re:publica, May 18-20

https://re-publica.com/de/news/rp26-sprecher-cory-doctorow

* #Berlin: Enshittification at Otherland Books, May 19

https://www.otherland-berlin.de/de/event-details/cory-doctorow.html

* #HayOnWye: HowTheLightGetsIn, May 22-25

https://howthelightgetsin.org/festivals/hay/big-ideas-2

11/

Recent appearances:

* Launch for Cindy Cohn's "Privacy's Defender" (City Lights)

https://www.youtube.com/watch?v=WuVCm2PUalU

* Chicken Mating Harnesses (This Week in Tech)

https://twit.tv/shows/this-week-in-tech/episodes/1074

* The Virtual Jewel Box (U Utah)

https://tanner.utah.edu/podcast/enshittification-cory-doctorow-matthew-potolsky/

* Tanner Humanities Lecture (U Utah)

https://www.youtube.com/watch?v=i6Yf1nSyekI

* The Lost Cause

https://streets.mn/2026/03/02/book-club-the-lost-cause/

12/

You can follow these posts as a daily blog at pluralistic.net: no ads, trackers, or data-collection!

Here's today's edition: https://pluralistic.net/2026/03/12/normal-technology/

--

If you prefer a newsletter, subscribe to the plura-list, which is ad-/tracker-free, and is utterly unadorned save a single daily emoji. Today's is "🦏". Suggestions solicited for future emojis!

--

You can also get a fulltext RSS feed, licensed CC BY 4.0:

13/

I'm also on Bluesky. Read today's thread there at:

https://bsky.app/profile/doctorow.pluralistic.net/post/3mgvwg3ww6k2k

eof/

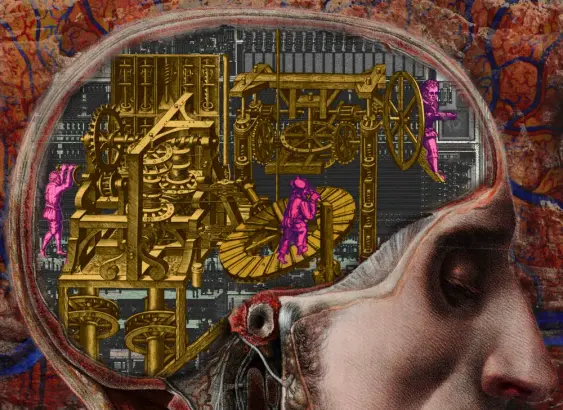

"AI psychosis" is one of those terms that is incredibly useful and also almost certainly going to be deprecated in smart circles in short order because it is: a) useful; b) easily colloquialized to describe related phenomena; and c) adjacent to medical issues. 1/

> There's nothing about AI per se that makes it exceptionally

There is: power, as in dE/dt. I can't think of any other technology in the last 100 years that used so much energy in so little time to do almost nothing usable. For the most part, energy efficiency was improving.

I can't think of any other technology that would be so half-baked when turned into a product. 👉