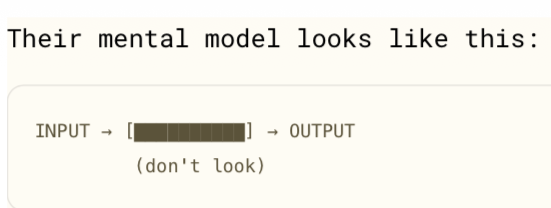

Observing a debugging/troubleshooting session of a probabilistic system, run with a deterministic mindset/mental model, is fascinating.

The main question being asked is: “For the exact same input text, why is the response NOT the exact same output?”

Seven years ago, as a Content Designer, I had my existing mental models exploded by this “conundrum”. It introduced a concept+UX challenge: “producing variable content, probabilistically, personalised to the individual, in a specific runtime system configuration & situation.”
