Doubling down on my point from yesterday that we don't care enough about proper OOM management.

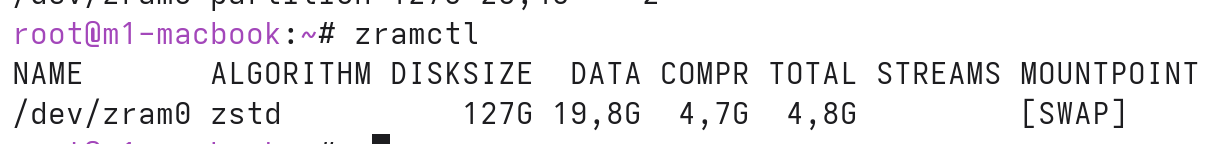

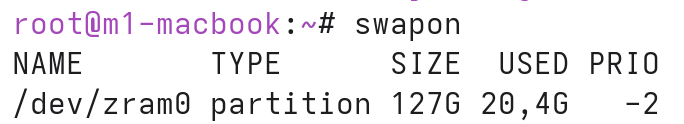

I played around with a giant zram device just to see if we can make way more use of compressed memory.

Turns out: yes. Suddenly my Firefox on Linux holds 250 tabs in memory without any disk-backed swap.
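For anyone wanting to try something similar, here is a rough Python sketch of setting up one large zram swap device through the standard sysfs interface. It assumes Linux, root, and that `modprobe zram` has already created a fresh `zram0`. The exact size and compression algorithm behind the experiment above aren't stated, so the 2×-RAM `disksize` and `zstd` are illustrative assumptions, and `zram_plan`/`apply` are hypothetical helper names. By default it only prints the steps.

```python
"""Sketch: size and set up one large zram swap device (hypothetical helpers).

Assumptions: Linux with the zram module loaded, root privileges for apply(),
and a kernel built with zstd. The 2x-RAM multiplier is illustrative only.
"""
import subprocess
from pathlib import Path


def mem_total_bytes(meminfo_text: str) -> int:
    """Parse MemTotal (reported in kB) from /proc/meminfo-style text."""
    for line in meminfo_text.splitlines():
        if line.startswith("MemTotal:"):
            return int(line.split()[1]) * 1024
    raise ValueError("MemTotal not found")


def zram_plan(mem_total: int, multiplier: float = 2.0,
              algorithm: str = "zstd", device: str = "zram0"):
    """Return (sysfs_writes, commands) that set up one zram swap device.

    disksize is the *uncompressed* capacity; the RAM actually consumed
    depends on how well the swapped-out pages compress.
    """
    disksize = int(mem_total * multiplier)
    sysfs = Path("/sys/block") / device
    writes = [
        # The compression algorithm must be chosen before disksize is set.
        (sysfs / "comp_algorithm", algorithm),
        (sysfs / "disksize", str(disksize)),
    ]
    commands = [
        ["mkswap", f"/dev/{device}"],
        # High priority so the kernel prefers zram over any disk-backed swap.
        ["swapon", "--priority", "100", f"/dev/{device}"],
    ]
    return writes, commands


def apply(writes, commands):
    """Actually perform the setup (needs root and an unused zram device)."""
    for path, value in writes:
        path.write_text(value)
    for cmd in commands:
        subprocess.run(cmd, check=True)


if __name__ == "__main__":
    meminfo = Path("/proc/meminfo")
    if meminfo.exists():
        writes, commands = zram_plan(mem_total_bytes(meminfo.read_text()))
        # Dry run: print the equivalent shell steps instead of applying them.
        for path, value in writes:
            print(f"echo {value} > {path}")
        for cmd in commands:
            print(" ".join(cmd))
```

Oversizing `disksize` costs little up front, since zram only allocates memory for pages that actually get swapped into it, which is what makes a "giant" device practical.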

Kinda makes the point that having a fixed-size zram device is a bad idea, and the kernel should just compress as much memory as possible?