One of my early roles at WaPo was as an editorial aide in the Editorial section, where part of my job involved reading all the Letters to the Editor and separating the crazies from those who might possibly have a salient point to make.

30 years later (gulp), I am still getting plenty of Letters to the Editor, but they are not what they used to be. Time was, they were mostly people convinced their lives were being turned upside down and inside out by nebulous hackers, the govt, their ex, etc. Back in WaPo days, the common thread from the crazies was that their tormentors were using radio signals or somesuch to track and harass them.

These days, however, the "they're all after me" pleas are getting drowned out by inquiries from people who have clearly gone too far down the AI chatbot rabbit hole. To the point where they're trying to convince everyone that nefarious, AI-based actors are harassing them, or that benevolent sentient beings reside within the chatbots.

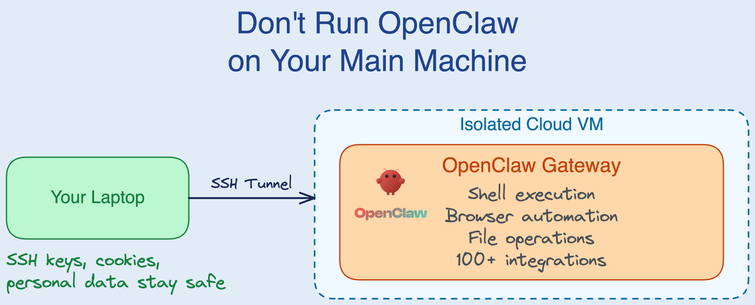

The thing is, the sentient-being claim aside, it is actually stupid easy with today's hot new agentic AI toys for people to make their worst nightmares come true -- including having all their stuff taken over by a machine that most definitely does not have their best interests at heart.