In short, I had better not EVER have a picture anywhere on anything I self-host that I have not explicitly acquired the rights to or made myself.

There is a whole gray market in Germany for suing people over that. Fixing this next.

#asca #server

#mastodon #gdpr

Adding these lines to my application.env covered this issue:

# do not fetch external resources
DISABLE_FETCH_PREVIEWS=true
DISABLE_EMBED_PREVIEW=true

I will need to go through my own feed next to ensure that there are no embeds left over. This is in addition to my recent purging of boosts: https://mastodon.mariobreskic.de/@mario/114982417840616548

Mario Breskic (@mario@mastodon.mariobreskic.de)

Had to nuke all my boosts 💥 — not because I don’t love you all, but because German copyright law said “No fun, only §!” 🇩🇪📚 Turns out even displaying book covers (aka “framing”) can get dicey. Courts here ruled that embedding images without permission = potential copyright infringement. No thanks 😅 Details if you love legal pain: https://www.kanzlei.biz/suche/?sq=framing&type= Stay safe, stay boostless! 💂♂️🔗

And from there, I delete all #PreviewCards in #mastodon by using these commands:

1. docker compose exec web bash

2. RAILS_ENV=production bundle exec rails console

And then I run this in the console: PreviewCard.destroy_all

This will run for a while but it should remove all stored preview cards from my mastodon server, for all posts.

And it worked. I just climb back up to my prompt by typing “exit” until I am back home, and that issue has been fixed as well. #server #asca #mastodon #gdpr

Running a solo Mastodon instance? Here's how to keep it clean and #gdpr compliant:

– Disable link previews: DISABLE_FETCH_PREVIEWS=true

– Disable embeds: DISABLE_EMBED_PREVIEW=true

– Purge old PreviewCards via Rails console

– Avoid federated content

– Self-author everything

– Host locally, anonymize IPs

Respectful publishing, no third-party hosting

#asca #server #mastodon #selfhosted #germany

Correction, see #aside here https://mastodon.mariobreskic.de/@mario/115050605879471525

#aside and #tldr

Running a solo Mastodon instance? Here's how to keep it clean and #gdpr compliant:

– Disable link previews: DISABLE_FETCH_PREVIEWS=true

– Purge old PreviewCards via Rails console

– Avoid federated content

– Self-author everything

– Host locally, anonymize IPs

Respectful publishing, no third-party hosting

#asca #server #mastodon #selfhosted #germany

Mario Breskic (@mario@mastodon.mariobreskic.de)

#aside: the only line that is currently accurate and therefore needed is DISABLE_FETCH_PREVIEWS=true. This change supersedes the previous setting, and I will note that in the tldr below. #server #asca #mastodon

There seems to be a bug in how #mastodon #v4.4.3 implements this, so here is a workaround for disabling fetch previews. First, delete the existing rows from preview_cards in the DB:

DELETE FROM preview_cards;

And blocking future inserts:

CREATE OR REPLACE FUNCTION block_preview_card_insert()
RETURNS trigger AS $$
BEGIN
  -- Ignore insert
  RETURN NULL;
END;
$$ LANGUAGE plpgsql;

CREATE TRIGGER prevent_preview_card_insert
BEFORE INSERT ON preview_cards
FOR EACH ROW
EXECUTE FUNCTION block_preview_card_insert();
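The effect of a BEFORE INSERT trigger that discards the row can be tried out without touching the Mastodon database. What follows is a minimal sketch in Python against an in-memory SQLite database, used purely as a stand-in: SQLite has no plpgsql, so SELECT RAISE(IGNORE) plays the role of RETURN NULL, and the single url column is a made-up simplification of the real preview_cards table.

```python
import sqlite3


def demo_blocked_inserts() -> int:
    """Create a table whose inserts are silently dropped; return its row count."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(
        """
        -- Hypothetical, simplified stand-in for Mastodon's preview_cards table.
        CREATE TABLE preview_cards (id INTEGER PRIMARY KEY, url TEXT NOT NULL);

        -- SQLite analog of a plpgsql trigger function that does RETURN NULL:
        -- RAISE(IGNORE) skips the row without raising an error.
        CREATE TRIGGER prevent_preview_card_insert
        BEFORE INSERT ON preview_cards
        BEGIN
            SELECT RAISE(IGNORE);
        END;
        """
    )
    # The INSERT succeeds from the caller's point of view...
    conn.execute(
        "INSERT INTO preview_cards (url) VALUES (?)", ("https://example.com",)
    )
    conn.commit()
    # ...but no row was stored.
    (count,) = conn.execute("SELECT COUNT(*) FROM preview_cards").fetchone()
    conn.close()
    return count


if __name__ == "__main__":
    print(demo_blocked_inserts())
```

The point of the sketch is that the writer never sees an error, which is why this kind of trigger can sit underneath an application that keeps trying to insert.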

Undoing the previous changes inside the db container, having found a more promising and more elegant solution:

DROP TRIGGER IF EXISTS prevent_preview_card_insert ON preview_cards;
DROP FUNCTION IF EXISTS block_preview_card_insert();

Fix:

Adding this to my yaml file, for the web container:

command: >
  bash -c "bundle exec rails runner 'class FetchLinkCardService; def call(*args); nil; end; end' &&
  bundle exec puma -C config/puma.rb"

and for sidekiq:

command: >
  bash -c "bundle exec rails runner 'class FetchLinkCardService; def call(*args); nil; end; end' &&
  bundle exec sidekiq"

Filed a bug: https://github.com/mastodon/mastodon/issues/35813

Which is also a test for the patch ;)

DISABLE_FETCH_PREVIEWS=true is being ignored in v4.4.3 in docker · Issue #35813 · mastodon/mastodon

Steps to reproduce the problem the environmental setting DISABLE_FETCH_PREVIEWS=true to .env or .yaml file should turn off the creation of PreviewCards for links in posts restarting the containers ...

I need another test, as a sanity check:

#PreviewCards are still being created, despite the patches.

Changed the #sidekiq patch to target the LinkCrawlWorker like so:

command: >
  bash -c "bundle exec rails runner 'class LinkCrawlWorker; def perform(*args); nil; end; end' &&
  bundle exec sidekiq"

And another one:

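Brute force it is, then, since the worker insists on working. In hindsight, the patches probably never took effect: rails runner defines the override in its own short-lived process, which exits before puma or sidekiq start.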

Inside the pgsql container:

CREATE OR REPLACE FUNCTION block_preview_card_insert()
RETURNS trigger AS $$
BEGIN
  RAISE NOTICE 'PreviewCard insert blocked by DB trigger.';
  RETURN NULL;
END;
$$ LANGUAGE plpgsql;

CREATE TRIGGER prevent_preview_card_insert
BEFORE INSERT ON preview_cards
FOR EACH ROW
EXECUTE FUNCTION block_preview_card_insert();

And another test, which I hope settles this issue for me:

https://www.domestika.org/es/courses/1457-home-office-trabajar-desde-casa-con-exito

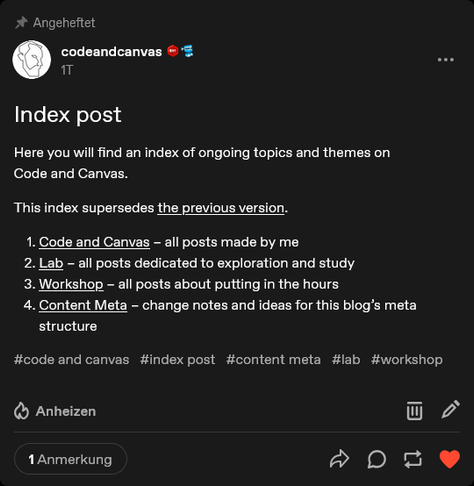

Rebuilt the index post/persistent context “tool” for tumblr because threading there should not be done with reblogs.

Instead, I used a system I came up with in 2019, on Twitter: make a post containing a link I want to hook up to the persistent context scaffolding.

On tumblr, this means: make a post, then link to that post from its category/theme master post.

https://www.tumblr.com/codeandcanvas/792786451752468480/index-post #asca

The thread is then visually prepared by tumblr natively, rather than me forcing it.

This seems fine to me. #asca

Will have to update my #linkwarden incrementally from v2.11.5 to v2.12.2.

I use linkwarden to store links from my blog articles via a custom WordPress plugin that I hacked together using #chatgpt. #asca

I am sure that updating one version at a time makes more sense than skipping the versions in between.

Restarted my side project of bringing the @medienfeed service to the fediverse.

A free info service about design and media for the #DACH region, which already runs well on other websites.

Played around with making #huginn act like a buffer for the posts, using a Scheduler Agent and a Delay Agent for the scheduling.

Added a truncate filter to the Formatting Agent so posts do not get rejected for exceeding the limit of xxx characters.
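Huginn templates are written in Liquid, whose truncate filter counts the trailing ellipsis toward the limit. As a minimal sketch of that same logic in Python (this is not Huginn code; truncate_post and its limit argument are illustrative names, and the actual limit is whatever the target instance enforces):

```python
def truncate_post(text: str, limit: int, ellipsis: str = "...") -> str:
    """Shorten text to at most `limit` characters, ellipsis included."""
    if limit < 0:
        raise ValueError("limit must not be negative")
    if len(text) <= limit:
        return text
    if limit <= len(ellipsis):
        # No room for any text plus the marker: cut hard instead.
        return text[:limit]
    return text[: limit - len(ellipsis)] + ellipsis


if __name__ == "__main__":
    print(truncate_post("Ground control to Major Tom.", 20))
```

Counting the ellipsis inside the limit is what keeps the result from ever exceeding the limit, which is the property that matters when the receiving API rejects overlong posts.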

Between us, what companies presume when it comes to their users is appalling.

ChatGPT recently put it this way:

‘If you participate meaningfully, you are infrastructure.

And infrastructure doesn’t get to say “nah.”’

L2 (short for Lagrange point two) differs markedly from L1, and above all from L4 and L5, and I want to do justice to that.

Positioning myself at L1 turned out to be a mistake and will be corrected over the coming weeks.

One look at my IFTTT setup shows that the ping also goes to this Mastodon instance. That is good to know, because the whole tangle of mixed identities hangs on it: not only the migration from L1 to L2, but also detaching L2 from pass-throughs of this kind.

I am keeping the access point/webhook to Mastodon for now, though. Will L2 be detached from automations?

I find the premise of Lagrange point 4 appealing here: not only are these objects under the much stronger influence of masses that I cannot influence myself

(social media websites are generally not stable; the accounts you hold there can vanish very quickly through trends, performance, and cliques, which is down to tribalism),

you also simply do not live on Trojans. But you can drop probes there. That works.

I am revising my model: social media are not L4 or L5 but astronomical centaurs.

Unstable.

I notice that the solution seems to lie in DNS filters, and in the iron self-discipline not to keep switching those filters off and on again.

I need to get it back into my head that the browser is more than a display window.

Currently trying to migrate my bookmarks from wallabag to Linkwarden. The question is whether data graveyards such as link collections simply follow a variant of the Pareto rule for data: 80% of the storage is always taken up by things you need in only 20% of cases.

Oh well. Somewhere there is a note saying that the librarian (and also the so-called “hoarder”, a compulsive collector) has been sublimated in the human being of 2026.

Some software is simply just right the way it is.

Good thing I did not delete anything in the meantime, phew. Sometimes my enthusiasm for new things runs away with me.

No more legacy baggage in the form of tags with parent and child categories, only the literature and its sharpening as further reading. Very slowly, I seem to be forming a concept that goes beyond folder structures.

20 minutes and no more:

https://codeandcanvas.tumblr.com/post/809600945961025536/20-minuten-am-tag-f%C3%BCr-social-media

I will definitely set up my feed readers from scratch again for the new FreshRSS server, which is just for me. I like this kind of thing: really carrying your own world around with you, but also living and acting in it.

The time this will free up for me, and for good!

Between us, I believe the experience of defragmenting left some people with the lasting impression that the PC is in a perpetually broken state that you have to repair yourself.

What a waste of time!

After all, I myself am the reason I am doing this now.

I have evolved my paradigm for data security: away from the cloud, toward local checksums of the files. I also split my data into hot and cold storage now, which required only one additional external hard drive.
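Local checksums can be as simple as a manifest that maps each file to its SHA-256 digest and is recomputed later to spot silent changes. A minimal sketch in Python, assuming nothing about the tooling actually used here; build_manifest and changed_files are hypothetical helper names:

```python
import hashlib
import tempfile
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large files do not have to fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(root: Path) -> dict[str, str]:
    """Map every regular file below root (by relative path) to its digest."""
    return {
        path.relative_to(root).as_posix(): sha256_of(path)
        for path in sorted(root.rglob("*"))
        if path.is_file()
    }


def changed_files(root: Path, manifest: dict[str, str]) -> list[str]:
    """Return paths that are missing, new, or differ from the stored manifest."""
    current = build_manifest(root)
    return sorted(
        name
        for name in manifest.keys() | current.keys()
        if manifest.get(name) != current.get(name)
    )


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        root = Path(tmp)
        (root / "note.txt").write_text("hello")
        manifest = build_manifest(root)
        (root / "note.txt").write_text("hello!")  # simulate a changed file
        print(changed_files(root, manifest))
```

Keeping a copy of the manifest alongside the cold-storage drive would allow both copies to be checked against the same digests.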

And I am no exception here either: once you know enough, you notice that in the end it made no difference, other than having been a way of keeping yourself busy.

Back to authentic action.