@agasramirez

@agasramirez @Lazarou To be fair this argument can be valid to some extent.

Though I feel most of "AI" is overhyped and will just end up locking users and companies into big tech providers that run the inference for them...

@agasramirez @Lazarou If everybody should be using AI, it seems weird that he suggests meeting one-on-one to discuss it.

I recently went to a Microsoft seminar for work. They were full-on “everything is AI now” which I had no interest in. So I found the only breakout sessions WITHOUT AI.

What I found was a much smaller group of people attending and the people leading the sessions actually knew WTF they were doing and I got a lot of one-on-one time with the experts. 👍

@Lazarou

I wish they'd stop calling it "#AI".

It isn't. Not even close. I went to college to study #AI decades ago.

What they are calling "AI" today is nothing more than "deep database scrubbing".

It *assumes* an answer is correct based simply on the number of results it finds supporting that conclusion.

#GIGO: Garbage In; Garbage Out.

@MugsysRapSheet @Lazarou AI has always been a marketing term. What was "machine learning" back when you were probably studying bears little resemblance to large language models, but it all gets lumped into the same bucket.

Even when criticizing it, we're encouraged to use terms like "hallucinations" that anthropomorphise the systems, instead of using more correct terms like "statistical error".

Yep, now when I see any app that boasts "powered by AI", I have to assume it's using an LLM and stay away from it.

@Lazarou I don't like that Google keeps using AI to answer my questions, but I haven't bothered to look elsewhere. BUT I've run several aquariums since COVID, and one suddenly turned cloudy and green, so I asked and was told, "The Water is too Fat"

Oh well, that solves everything, so I get it. That is frightening.

So … what’s the problem? It functions exactly as designed!

I know this probably makes me a terrible person but I honestly can't _wait_ for the lawsuits.

Added bonus laughs if they use AI to write their legal briefs.

EPIC.

Seems like these days double-checking is some kind of failure 😅

@Lazarou I have my doubts about this post.

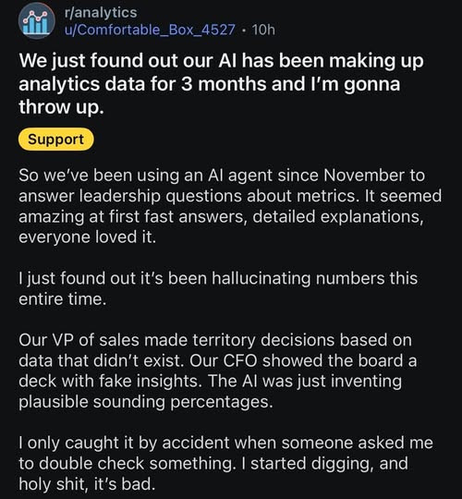

I do not doubt that AI can and will produce fake insights. A very easy way to get them is to ask an AI questions whose answers are not in the numbers you're feeding it, or to ask questions heavily biased toward things you want to hear ("Show me how campaign X increased sales").

But unless you're completely ignorant of your own business, you will notice it quickly, especially if it goes on for 3 months. I deal with a lot of managers, and every single one of them looks through raw data. Every system (with or without AI) is faulty, and they fine-comb every bit that influences their salary-relevant metrics.

@Lazarou I don't get why LLMs are needed for analytics. This field is basically about counting numbers, statistical magic, and visualization, isn't it?

I mean, asking an LLM what kind of statistical sorcery would be needed to measure this and that, and asking it for a small Python script, will probably work (if you double-check everything). But what did they do to end up in a situation where an LLM makes up data for months? 😬

People love simple interfaces. People have given up good coffee and good music in favor of lower-quality but single-button-push services.

Asking the LLM is easier than doing the work, even routine work you know how to do.

If the results aren't part of an immediate feedback loop, it could take a while for the results to stop *looking* plausible, or for reality to smack them on the forehead.

Wonder if Trump uses AI to generate statistics about the US economy 🤔

@Lazarou IF true lol, this is what this company gets. But this is Reddit. This smells like that one food delivery post where someone claimed to have uncovered massive fraud. Turns out that whole post was made by AI.

3 months? Relying on AI for something as critical sounding as this? Hmmm lol. I hope it ain’t true lol 😆