I should post about the latest #retrocomputing project I started.

Problem: I'd like an open-source, self-hosting C compiler on 8086, that supports the large memory model, overlays, and enough C89 to build Lua.

This seems not to exist! K&R-only compilers are much more common in this size category. Around the time of C89, many compilers bloated to the point of requiring a 386 or better host, though they could still target 8086. The 8086 holdouts were, in general, commercial products that never got a source release.

One notable exception was DeSmet C http://www.desmet-c.com. It seems to have started life as a commercial PC fork of Bell Labs PCC, a small and sturdy K&R compiler. (Edit: I'm no longer sure of this: DeSmet had a shareware release as "PCC", but this stood for Personal C Compiler, while Bell's stood for Portable C Compiler. So the similarity in naming was probably just a coincidence.) DeSmet 3.1 added "draft ANSI C" support, but this is incomplete and riddled with code-gen bugs. This version later found its way onto GitHub as OpenDC https://github.com/the-grue/OpenDC.

Aside from all the bugs, this is a pretty cool package: its dis/assembler, debugger, text editor, and some other utilities were also open sourced, and it runs on an 8088 with 256K RAM and two 360K floppies.

The OpenDC person did a good job packaging things up into an easily buildable form, and fixing syntax errors that probably came from running the sources through a different compiler version than expected, so... yes, it does indeed build and self-host... and I've done this on my Book 8088.

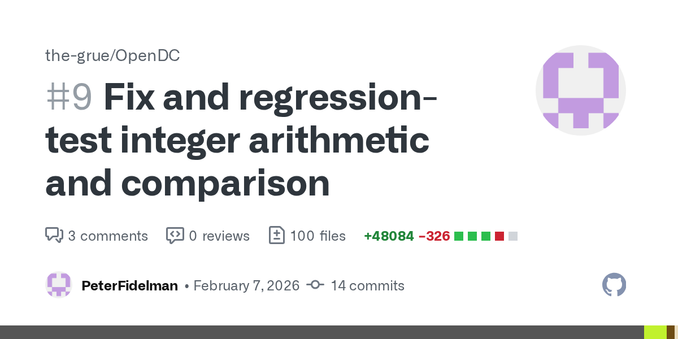

So now I will try to fix the bugs and add the missing C89 features. There are many, many of both... gulp.

It's great seeing ongoing interest in on-target 8086 development, as opposed to just cross builds.
