@hazelweakly

In the more general case, I think the only answer is a combination of liability and public funding. If companies start to be liable for direct customer damages, the incentive structure for building systems changes a lot. Then we need public funding that treats open source software as a public good and pays for maintenance and audits of critical tools, along with specific funding for tooling that makes it easier to build secure systems, including, as that tooling reaches some level of community acceptance, funding to move existing systems over to it.

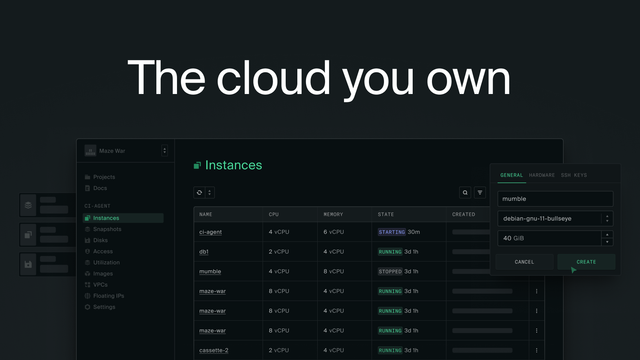

We see little baby efforts from the EU for this, but relatively little on the ops-ish side. The hyperscalers have shaped the way we think about operating systems, but the public products they provide are designed with making money as a higher priority than making it easier to operate secure systems, and they've starved the options for folks not operating in their clouds, because raising the bar to going on-prem is a core part of their business model. There's a huge opportunity for public funding to change that, especially in light of the digital sovereignty conversation.

In the end though, there's no way to do this without companies accepting that they're not going to be able to write as much code. But if your business model only works because you're polluting the world with negative security outcomes that you treat as an externality, then your business model shouldn't work.