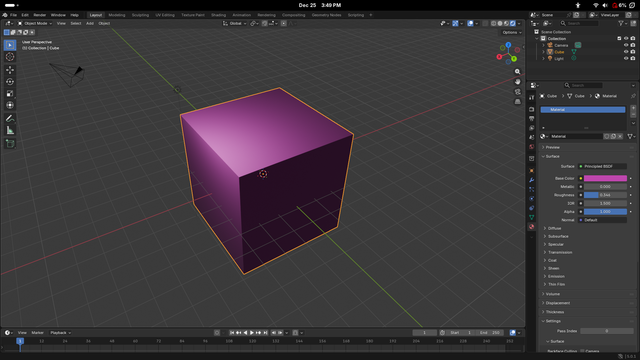

uhm, did you know that waypipe with ssh is fast enough to use blender remotely over wi-fi? what? this works much better/faster than x11 forwarding ever did

i think this is cause waypipe uses h264 whereas x11 forwarding forwards the draw instructions, which in the modern day is just "draw this 4k 32-bit colour pixmap", which isn't very efficient over the network

@tauon @mntmn @dotstdy Both could work just fine with X in theory. The GLX extension - a long time in the past - could do remote 3D rendering, but pixel shuffling over X could also work fine. X is a very generic and flexible remote buffer handling protocol. The issues with ssh -X are mostly latency related because toolkits (and blender, if it is not using a standard one, has its own built in) use it synchronously instead of asynchronously.

@dotstdy @tauon @mntmn But I think even for many 3D applications that render locally, a remote rendering protocol is actually the right thing, because for all intents and purposes a discrete GPU is *not* local to the CPU and whether you stream the commands via PCI or the network is not so different. In fact, Wayland is also designed for remote rendering in this sense, just in a much more limited way.

@uecker @tauon @mntmn Unfortunately that's really not how the GPU works at all in the present day, it made more sense back in OpenGL 1.1 when there were pretty straightforward sets of "commands" and limited amounts of data passing between the GPU and the CPU. Nowadays with things like bindless textures and gpu-driven rendering, and compute, practically every draw call can access practically all the data on the GPU, and the CPU can write arbitrary data directly to GPU VRAM at any time.

@uecker @tauon @mntmn For very simple GPU programs you can make it work, but more advanced programs just do not work under a model with such restricted bandwidth between the GPU and the CPU. Plus, as was mentioned up-thread, you still need to somehow compress and decompress those textures online, which is itself a complex task. Plus you still need the GPU power on the thin client to render it. It's very much easier to render on the host, and then compress and transfer the whole framebuffer.

@uecker @tauon @mntmn we keep gigabytes of constantly changing data in GPU memory. so yes, but unless you want to stream 10GB of data before you render your first frame, then no. (obviously blender is less extreme here, but cad applications still deal with tremendous amounts of geometry, to say nothing of the online interactive path tracing and whatnot)

@dotstdy @tauon @mntmn We found it critically important to treat the GPU as "remote" in the sense that we keep all hot data on the GPU, keep the GPU processing pipelines full, and hide latency of data transfer for the GPU. I am sure it is similar for you. But I can see that in gaming you may want to render closer to the CPU than to the screen. But this does not seem to change the fact that GPU is "remote", or?

@uecker @tauon @mntmn Similar, but likely at a narrower scale of latency tolerance. The issue is just the bandwidth vs. the size of the working set. The GPU is remote (well, unless it's integrated), but PCIe 4 bandwidth is ~300 times greater than what you get with a dedicated gigabit link, and vaguely ~15000 times greater than what you might have used to stream a compressed video.
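
As a rough check on those ratios, here is a back-of-the-envelope sketch. The link figures are assumptions and not from the thread: a PCIe 4.0 x16 slot (16 GT/s per lane, 128b/130b encoding) and an ideal 1 Gbit/s link with no protocol overhead.

```python
# Assumed link speeds (not stated in the thread).
# PCIe 4.0: 16 GT/s per lane, 16 lanes, 128b/130b line encoding.
PCIE4_X16_BYTES_PER_S = 16e9 * 16 * (128 / 130) / 8
# Ideal gigabit link, ignoring Ethernet/TCP overhead.
GIGABIT_BYTES_PER_S = 1e9 / 8

# How many times faster the PCIe link is than the gigabit link.
pcie_vs_gigabit = PCIE4_X16_BYTES_PER_S / GIGABIT_BYTES_PER_S

# Video bitrate implied by "~15000 times less than PCIe", in Mbit/s.
implied_video_mbit = PCIE4_X16_BYTES_PER_S / 15000 * 8 / 1e6

print(f"PCIe 4.0 x16 vs gigabit: ~{pcie_vs_gigabit:.0f}x")
print(f"Implied video bitrate:   ~{implied_video_mbit:.1f} Mbit/s")
```

Under these assumptions the PCIe-to-gigabit ratio comes out at roughly 250, the same order of magnitude as the ~300 quoted above, and the ~15000 figure corresponds to a video stream of roughly 17 Mbit/s.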

@dotstdy @tauon @mntmn Yes, this makes sense and I am not disagreeing with any of it. But my point is merely that a display protocol that treats the GPU as remote is not fundamentally flawed as some people claim, because the GPU *is* remote even when local. And I could imagine that for some applications such as CAD, remote rendering might still make sense. We use remote GPU for real-time processing of imaging data, and the network adds negligible latency.

@uecker @tauon @mntmn The reason it's flawed imo is that while it will work fine in restricted situations, it won't work in many others. Comparatively, streaming the output always works (modulo latency and quality), and you have a nice dial to adjust how bandwidth and CPU heavy you want to be (and thus latency and quality). If you stream the command stream you *must* stream all the data before rendering a frame, and you likely need to stream some of it without any lossy compression at all.

@dotstdy @tauon @mntmn The command stream is streamed anyway (in some sense). I do not understand your comment about the data. You also want this to be in GPU memory at the time it is accessed. Of course, you do not want to serialize your data through a network protocol, but in X when rendering locally, this is also not done. The point is that you need a protocol for manipulating remote buffers without involving the CPU. This works with X and Wayland and is also what we do (manually) in compute

@uecker @tauon @mntmn I'm not sure I even understand what you're saying in that case, waypipe does just stream the rendered buffer from the host to a remote client. It just doesn't serialize and send the GPU commands required to render that buffer on the remote client. The latter is very hard, the former is very practical.

@tauon @mntmn @uecker Let me put it this way, when I render frame 1 of a GPU program, in order to execute the GPU commands to produce that frame, I need to have the *entire up to date contents of vram* in the 'client' gpu's vram (or at least memory accessible to the 'client' gpu). That's really hard, and is bounded only by the GPU memory that the application wants to use. But using the 'server' gpu to render a frame, and sending it to the remote, is a bounded, and much smaller amount of work.

@tauon @mntmn @uecker If I have a tool like blender which might have GPU memory requirements of say, 10GB, for a particular scene. Then in order to remote that very first frame, I need to send those entire 10GB all to the client! And then every frame the data in the GPU working set changes, and all that data needs to be sent as well.
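
To put a number on that first frame, here is a minimal sketch of the transfer time for a 10 GB working set. The link speeds are assumptions (an ideal 1 Gbit/s network link and a PCIe 4.0 x16 slot); real Wi-Fi would usually be slower than the gigabit case.

```python
# Assumed link speeds in bytes per second (not from the thread).
GIGABIT_BYTES_PER_S = 1e9 / 8                        # ideal 1 Gbit/s link
PCIE4_X16_BYTES_PER_S = 16e9 * 16 * (128 / 130) / 8  # PCIe 4.0 x16

def transfer_seconds(size_bytes, link_bytes_per_s):
    """Time to move size_bytes over a link, ignoring latency and overhead."""
    return size_bytes / link_bytes_per_s

WORKING_SET_BYTES = 10e9  # the 10 GB scene from the post above

print(f"gigabit link: {transfer_seconds(WORKING_SET_BYTES, GIGABIT_BYTES_PER_S):.0f} s")
print(f"PCIe 4.0 x16: {transfer_seconds(WORKING_SET_BYTES, PCIE4_X16_BYTES_PER_S):.2f} s")
```

With these figures the upload takes 80 seconds over the network link before the first frame can be drawn, against about a third of a second over PCIe.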

@dotstdy @tauon @mntmn I think we may be talking past each other. I am not arguing for remote rendering (although I do something like this, but this is more compute than rendering), but my point is that GPU programming *is* programming a remote device because the PCI bus - although less than a network - is a bottleneck, and this is why both X and Wayland are remote buffer management protocols and not fundamentally different in this respect.

@uecker @tauon @mntmn Right, I think I see your meaning now. I was just confused because X (as well as some other products) did of course allow you to send actual rendering commands to the remote machine, for them to be executed on the remote rather than the host. And tauon was talking specifically about forwarding gl commands. But if you just consider the direct rendering paths, then they're pretty similar between something like X and waypipe, it's just copying and forwarding the output frames.

@uecker @tauon @mntmn nitpick aside: The reason that these are remote buffer management protocols is actually subtly different from bandwidth concerns, it's more about queuing and throughput. the latency between the GPU and the CPU is actually pretty low, and the bandwidth quite high, however we deliberately introduce extra latency in order to allow the GPU to run out-of-lockstep from the CPU to increase throughput. It prevents us from starving the GPU, by ensuring a continuous stream of work.
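
The queuing argument can be illustrated with a toy timing model. This is only a sketch under made-up numbers: the per-frame CPU and GPU costs, the queue depth, and both function names are hypothetical, and a real driver is far more complicated.

```python
def lockstep_time(frames, cpu_ms, gpu_ms):
    """CPU records a frame, then waits for the GPU to finish it before continuing."""
    return frames * (cpu_ms + gpu_ms)

def pipelined_time(frames, cpu_ms, gpu_ms, queue_depth=2):
    """CPU may run up to queue_depth frames ahead of the GPU."""
    cpu_done = 0.0
    gpu_done = []  # completion time of each frame on the GPU
    for i in range(frames):
        # The CPU stalls only when the queue is full, i.e. until the frame
        # submitted queue_depth frames earlier has finished on the GPU.
        cpu_start = cpu_done
        if i >= queue_depth:
            cpu_start = max(cpu_start, gpu_done[i - queue_depth])
        cpu_done = cpu_start + cpu_ms
        # The GPU starts once the commands exist and the previous frame is done.
        gpu_start = max(cpu_done, gpu_done[-1] if gpu_done else 0.0)
        gpu_done.append(gpu_start + gpu_ms)
    return gpu_done[-1]

# Hypothetical costs: 4 ms of CPU work and 6 ms of GPU work per frame.
print("lockstep :", lockstep_time(100, 4, 6), "ms")
print("pipelined:", pipelined_time(100, 4, 6), "ms")
```

In this model the pipelined GPU is never idle after the first frame, so the total time is bounded by GPU work alone, at the cost of each frame being displayed later than it was recorded. Setting `queue_depth=1` reproduces the lockstep total.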

@uecker @tauon @mntmn So when you communicate, the reason that's bad is partially due to the bandwidth, but mostly due to the huge delays you incur in order to wait for execution to complete on the GPU, and then to start back up again once you produce more GPU commands. Readback at a fixed latency which exceeds that queuing delay is totally fine, and bandwidth-wise you can yeet something like 20 4k uncompressed images across the pcie link per 60Hz frame, if you really really wanted to.
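
The "something like 20" estimate can be sanity-checked with a short calculation. The assumptions here are mine, not the thread's: a 3840x2160 image at 4 bytes per pixel and a PCIe 4.0 x16 link with no other traffic.

```python
# Assumed image format: 3840x2160 pixels, 4 bytes per pixel (e.g. RGBA8).
IMAGE_BYTES = 3840 * 2160 * 4
# Assumed link: PCIe 4.0 x16 (16 GT/s per lane, 128b/130b encoding).
PCIE4_X16_BYTES_PER_S = 16e9 * 16 * (128 / 130) / 8
REFRESH_HZ = 60

# Bytes the link can carry during one 60 Hz frame interval.
bytes_per_frame = PCIE4_X16_BYTES_PER_S / REFRESH_HZ
images_per_frame = bytes_per_frame / IMAGE_BYTES

print(f"one 4k image: {IMAGE_BYTES / 1e6:.1f} MB")
print(f"uncompressed 4k images per 60 Hz frame: ~{images_per_frame:.0f}")
```

Under these assumptions the result is about 16 images per frame, the same ballpark as the figure above; a PCIe 5.0 x16 link would double it.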

θΔ ⋐ & ∞