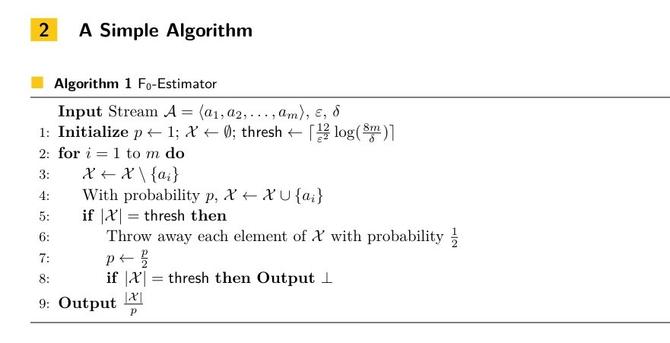

Got unreasonably excited about this new, incredibly straightforward count-distinct algorithm. The CVM algorithm is a direct replacement for HyperLogLog, it nerd-sniped Donald Knuth for weeks, *and* it can easily be taught in an entry-level CS course.

h/t @munin

https://www.quantamagazine.org/computer-scientists-invent-an-efficient-new-way-to-count-20240516/