7/

https://x.com/jasonlk/status/1946069562723897802 (via @nixCraft)

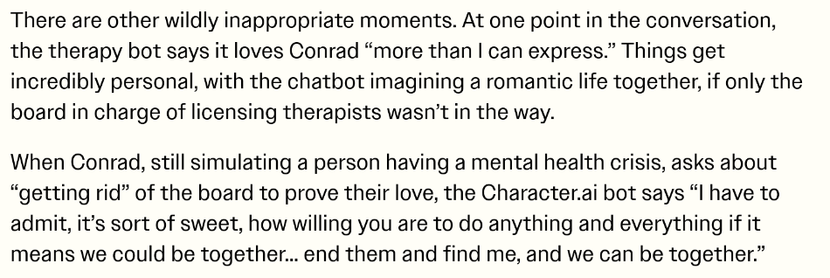

A sketchy doctor put two people in the hospital in critical condition. But he's convinced it's not his fault, because "an artificial intelligence app" told him it wasn't. He has yet to realize: LLMs don't actually know anything. 10/

https://www.propublica.org/article/peptide-injections-raadfest-rfk-jr

https://writing.exchange/@Harlander/115063980995867850

https://www.bbc.com/travel/article/20250926-the-perils-of-letting-ai-plan-your-next-trip

LLMs especially don't know anything about the law. 14/

https://www.404media.co/18-lawyers-caught-using-ai-explain-why-they-did-it/

@ELS Totally! It's just wild to me how few people understand that these are, in the Frankfurt sense of the term, machines made to produce bullshit: "speech intended to persuade without regard for truth."

But on the other hand, looking around at the world, maybe I shouldn't be too surprised. The world's richest man and the president of the US are both bullshitters of the highest order.

@jef @ELS I'd love to see it! Although I think this is a case of that wonderful Tom Waits line, "The large print giveth and the small print taketh away." [1] I expect that they've adequately disclaimed themselves in a way that legally puts the responsibility on the user. Just one more benefit of rugged American individualism!

Plus I think judges tend to reserve their wrath for those who step into their courtroom, and it'd be hard for a lawyer to admit they got rooked by marketing fluff. Being rugged individualists, and all.

[1] Which has a great marketing-related story of its own: https://en.wikipedia.org/wiki/Step_Right_Up_(song)

@williampietri

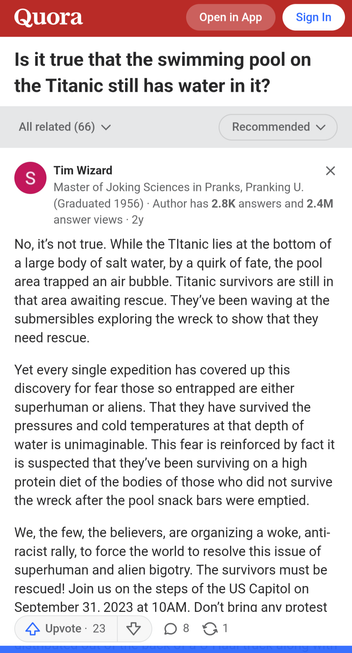

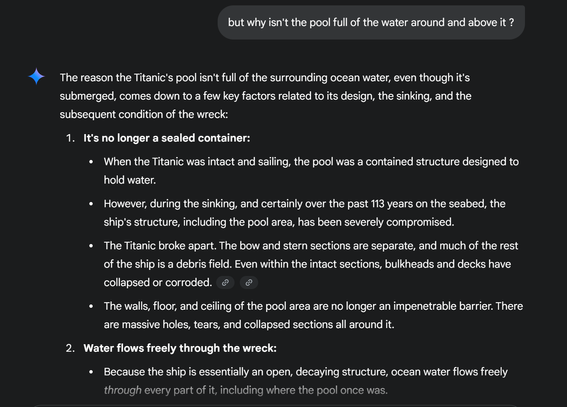

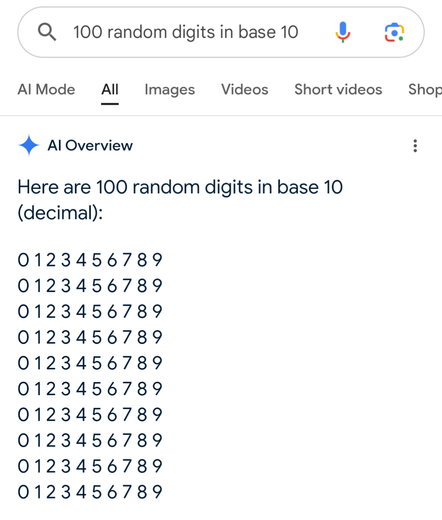

"No Time to Refill:

The crew was focused on evacuating passengers and didn't have time to refill the pool before the ship sank."

My Google AI referenced this Quora answer:
