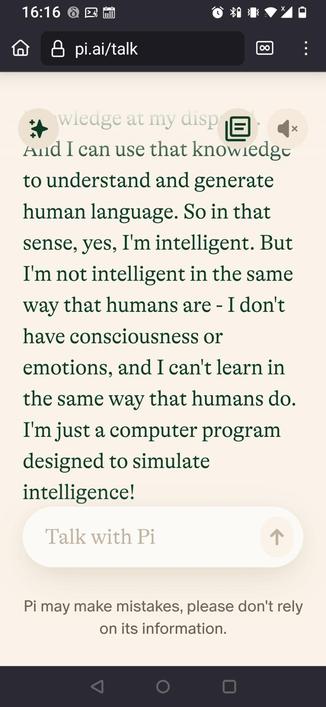

I find it amazing how unwilling people are to accept that current "#AI" tech (including the "LLM" tech that I call #MOLE Training) is not intelligent, by any meaningful definition of the word. The nonsense arguments they use to wriggle out of this conclusion are nothing if not creative.

The most common is the consensus reality wriggle: most people talk as if they think Trained MOLEs are intelligent, therefore they are. So if most people think perpetual energy is possible, then it is? Nope.

(1/?)