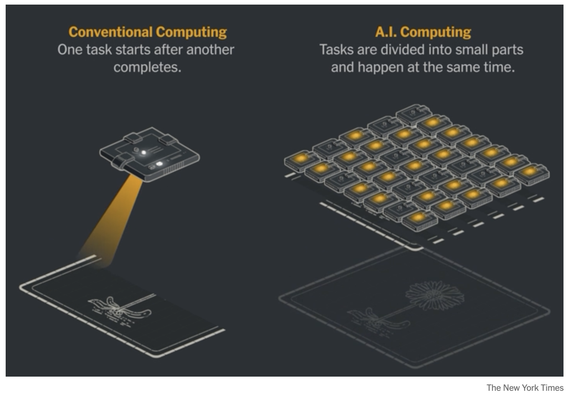

@mattblaze @ct_bergstrom I legit thought it was some misunderstanding of quantum computing at first, but then the title says "AI computing" and I got nothing.

(And to be clear, quantum computing also does not work like that)

@lain_7 @mattblaze @sophieschmieg @ct_bergstrom

For anybody not interested in graphics, Nvidia brands itself as an "AI Chip" company these days. GPUs are parallel. AI runs on GPUs. GPUs are "AI Chips" these days. So "AI compute" is compute running on parallel hardware.

This makes perfect sense as long as you never heard of "computation" before six months ago, and everything you know about computers is from vapid press releases hyping AI scams.

@wrosecrans @lain_7 @mattblaze @sophieschmieg @ct_bergstrom A lot of those chips are integrated GPU chips. It's both a regular CPU and a GPU. They can do a lot, but yeah AI is usually what they are used for.

The Jetson Orin is an example of this.

One of the ideas for that thing is to use AI to analyze brain waves and control your limbs if you've got some injury that severed something in your spine. You'd wear something like an Orin among other things.

@mattblaze @sophieschmieg @ct_bergstrom I _think_ they're comparing a single-threaded CPU to a modern GPU??? Which is nonsensical. We've had multiprocessing for decades.

As Pauli might have said, this is not only not right, it is not even wrong.

It's a case of "so wrong, even the opposite is not correct". F for failed the topic of the assignment.

I'm in pain, quite a lot of it.

40 years ago I was explaining how a computer works to people 30 years older than me. And today I'm explaining how a computer works to people 30 years younger than me.

Or something like that

Digital naives, grmpfl

@echopapa Journalists… 🙄

That era never ended, not since I first got into all this digital stuff. The ignorance among people my own age is still alarming.

And that has to do with the fact that the people who understand computers were not sent into schools on high salaries to explain them to everyone.

No, they were sent into companies on high salaries to program computers for dumb people, behind an army of sales, marketing, support and lawyers, and to keep those people as dumb as possible.

Sorry, it'll pass in a moment. I'm going back to explaining computers to people now...

@ct_bergstrom Wasn't that invented in the 60's by Lynn Conway when she worked for IBM?

Parallel and out of sequence that was.

Before she was kicked out for being herself.

My God! The Ada language with its tasks and rendezvous system already provided for reliable and efficient parallel processing 45 years ago!

Everything needs to be AI nowadays, I guess.

Yes! I remember that one. Specifically because of those 64k 1-bit processors and a whopping 512 MB of internal memory.

At that time, I had a 64 KB BBC-B 8-bit computer. 🙈

At least as far back as 1922:

https://thatsmaths.com/2016/01/07/richardsons-fantastic-forecast-factory/

Weather prediction using a gigantic staff of human computers, each calculating the equations describing the weather system for their own region of the globe.
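
For the curious, the idea of giving each "computer" their own region can be sketched in a few lines of Python. This is only a toy under loud assumptions: `step_region` and `forecast` are made-up names, the "calculation" is just an average rather than any weather equation, and the real scheme also needs neighbours to pass boundary values to each other, which is left out here. Threads in CPython also won't speed up pure-Python arithmetic; the point is the shape of the decomposition, not the performance.

```python
from concurrent.futures import ThreadPoolExecutor


def step_region(region):
    """Hypothetical stand-in for one person's calculation: average the cells."""
    return sum(region) / len(region)


def forecast(grid, workers=4):
    """Split the grid into contiguous regions and process each independently."""
    if not grid:
        return []
    size = -(-len(grid) // workers)  # ceiling division: cells per region
    regions = [grid[i:i + size] for i in range(0, len(grid), size)]
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # pool.map keeps the results in the same order as the regions
        return list(pool.map(step_region, regions))
```

Calling `forecast(list_of_cells, workers=4)` returns one value per region, in region order.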

@causticmsngo

"The Gell-Mann amnesia effect is a cognitive bias describing the tendency of individuals to critically assess media reports in a domain they are knowledgeable about, yet continue to trust reporting in other areas despite recognizing similar potential inaccuracies."

It sure is real

Fedi has also compiled a helpful list of remedies, see for example:

@ct_bergstrom don't you mean "AI computing"??

(Did an "A" "I" draw this diagram??)

The Problem With Science Communication

@ct_bergstrom @xgranade wow, that's… like the opposite of what happens.

Traditional computing; multiple processors efficiently compute distinct and useful tasks in parallel, quickly delivering accurate results or effects

AI computing:

(Sound of lake being slurped into fiery crack in ground)

@ct_bergstrom so disappointing.

I had an undergrad software engineering course that was all about optimizing serially processed programs we were given by the professor to run on chips that made use of parallel processing. This was in the mid 90s.

There was a lot of loop unrolling and loop jamming and other parallel processing techniques that had been known for a long time. Our grade was based on how fast our final programs would run.
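
For anyone who hasn't met those two techniques, here is a minimal sketch in Python. It is not the course's code (the post doesn't show any), and the function names are invented for illustration. Loop jamming, also called loop fusion, merges two passes over the same data into one; loop unrolling does several iterations' worth of work per trip through the loop. In Python this mostly shows the shape of the transformation; the kind of speedup described above comes from compilers and hardware that can overlap the independent operations.

```python
def scale_then_sum_naive(xs, k):
    """Two separate loops: scale every element, then sum the results."""
    scaled = []
    for x in xs:
        scaled.append(x * k)
    total = 0
    for s in scaled:
        total += s
    return total


def scale_then_sum_jammed(xs, k):
    """Loop jamming (fusion): one pass does both the scaling and the summing."""
    total = 0
    for x in xs:
        total += x * k
    return total


def scale_then_sum_unrolled(xs, k):
    """The jammed loop, unrolled by 4 with independent accumulators."""
    t0 = t1 = t2 = t3 = 0
    n = len(xs)
    limit = n - (n % 4)  # largest multiple of 4 not exceeding n
    for i in range(0, limit, 4):
        # These four updates do not depend on each other.
        t0 += xs[i] * k
        t1 += xs[i + 1] * k
        t2 += xs[i + 2] * k
        t3 += xs[i + 3] * k
    total = t0 + t1 + t2 + t3
    for i in range(limit, n):  # leftover elements when n is not a multiple of 4
        total += xs[i] * k
    return total
```

All three functions return the same value for the same integer input; only the loop structure differs.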

No AI, just a lot of analytics and tinkering.
