@crazyeddie @mcfly the reason AI is bad is because the system it's being applied to is bad. LLMs work fine when attached to consistent systems of logic. Language is a system of logic, but colonial languages have been engineered to make space for ambiguity proportional to the amount of violence it takes to support the society. So the violence is what's consistent.

& So applying AI to that surfaces the violence embedded in language by colonialism. All the incorrect assumptions get fed into the pattern-finder, & the pattern it finds is racism, misogyny, etc. Because though truth can be tricky to communicate in colonial languages, violence is easy.

Banning guns, banning alcohol, banning weed… banning books we don’t like: it (always /s) works out so well…

You want to censor people: just say that. You can’t think of another way to handle the problem, and you just want it to go away rather than deal with it.

There is a problem. But censorship is a tool of the repressive. Progressives had best not pick up that tool. Censorship is fear-based policy. Be braver in thought!

@mcfly Well, the reality is that AI is being integrated into everything: it's coming to macOS and iPhone soon, plus Microsoft Copilot, AI search...

The masses WILL use it, which will justify big tech's ongoing shoving of it down our throats.

Sooooo yeah, until things improve, I guess we're stuck with it.

I'll turn it off, uninstall it, and ignore it wherever I can and don't care for it, so pretty much everywhere for now. But I'm curious to see what Apple is doing with it.

@mcfly Most generative AI, sure. But lots of ML tools for transcription, translation, audio isolation, noise cancellation, coding boilerplate, text inference, etc. are useful.

It's a shame those legitimate use cases are all ignored because everyone thinks "AI" equates to generative slop.

Yeah. Most people talk about "AI" as being this completely useless thing while asking ChatGPT what 7+9 is.

Don't get me wrong, "AI" is most often used for non-productive purposes and for reasons ranging from mildly unethical to insanely (in?)humane. But shifting the blame from stupid/malevolent humans to AI is missing the point at best and a criminal act at worst.

AI is snake oil with a little genuine aspirin.

The fact that it is the Saudis and anti-democracy billionaires like Larry Ellison funding AI initiatives tells you all you need to know about the motives behind the money.

Ending any democracy that tries to dump their fossil fuel overlords. That's the motive.

Republicans & Tories have 3 industries funding them: oil & gas, casinos, and finance.

https://www.cnbc.com/2018/04/07/heres-a-look-at-who.html

https://www.washingtonpost.com/politics/2022/05/20/larry-ellison-oracle-trump-election-challenges/

I have a feeling this is too biased towards ChatGPT and the related generative AI scopes. And in that context, I completely agree.

But #AI is much more than generative AI. Take AI used for analysing huge amounts of data within a restricted scope, for example medical use cases (like detecting cancer, fractures, etc. from images): that can be pretty valuable. And in these cases, the findings from the AI tools are also reviewed by doctors.

Another use case is within engineering. Feed the AI engine data related to a challenge you need to solve and get a suggested solution, like LEAP-71 did when it created a prototype rocket engine with up-to-par performance: https://leap71.com/2024/06/18/leap-71-hot-fires-3d-printed-liquid-fuel-rocket-engine-designed-through-noyron-computational-model/

Within IT/Internet security, AI can help detect attack patterns faster and alert ops personnel sooner, so they can evaluate countermeasures before the attacks manage to scale up and become a bigger problem.

We need to get past the "AI hype phase", where "AI" only refers to "generative AI". I hope the capabilities of AI as a tool, when applied correctly to the challenge at hand, can become the main focus in the near future.

Right now AI is described as a hammer, and people see every challenge as a nail. But AI is a toolbox, with different tools for different types of challenges.

@mcfly For me, the biggest criticism of current (mostly) industry-led #AI development is the environmental impact of such technology. We could then broaden that discussion right up to #sustainability in its various forms: not only environmental, but also societal, ethical, etc.

Then, following right after those, we have plenty of ethical concerns more than worthy of our discussion.

1/

@mcfly s/AI/GaaS/

(GIGO As A Service)

@mcfly Kill it with 🔥!

Or legislation, whichever is easier.

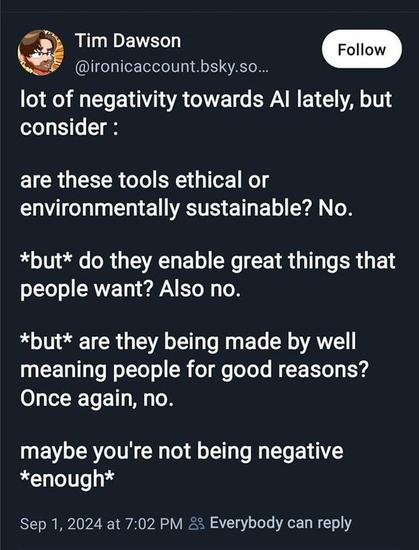

Generative AI, about which Dawson rages, is a teeny, tiny portion of AI. And the "AI" (LLMs) about which he rages is a small portion of the uses of generative AI.

I can't say he is wrong about the subset of the subset for which he has disdain.

However, if I am mistaken and he is against all AI, he needs to take his phone and his laptop and throw them in the garbage, because they require AI to be functional in today's internet and cell phone systems.