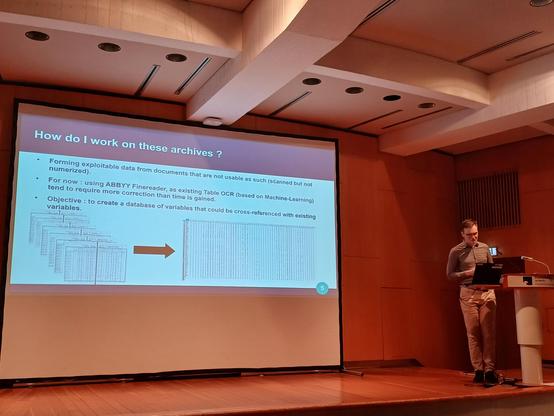

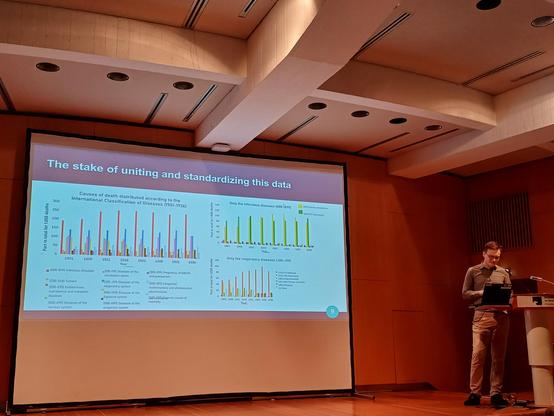

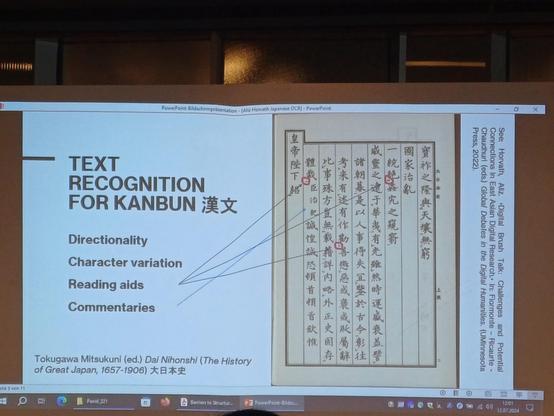

Next, Anatole Bernet on how #DigitalHumanities tools help understand Imperial Japan's swift transformation of medical science, as visible in the annual reports by the Sanitary Bureau - a mine of information and statistics so extensive that computational analytics (OCR, table detection, results visualisation) are necessary to look beyond its annual framing; contextual analysis can then help understand the patterns.

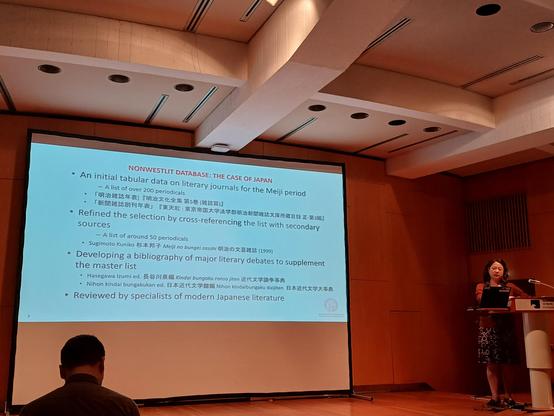

#DigitalHumanities tools help to understand the discourse on enlightenment and innovation in these journals, and overcome access, scan quality, copyright restrictions, and Japanese language-related issues.

#ChartingDSEA

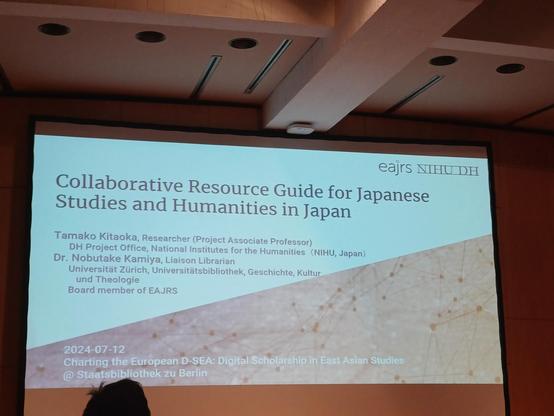

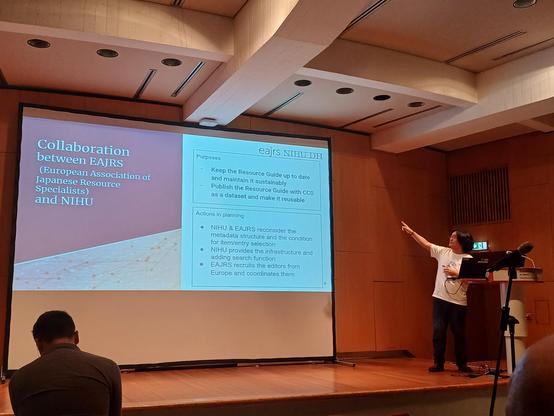

And concluding this panel, Nobutake Kamiya and Tamako Kitaoka ask how students, researchers and teachers can find and use their desired information among vast collections of Japanese resources. They propose the 'Collaborative Resource Guide for Japanese Studies and Humanities in Japan', and highlight the collaboration necessary to maintain such infrastructure and update the research guide, at #EAJRS and the National Institutes for the Humanities.

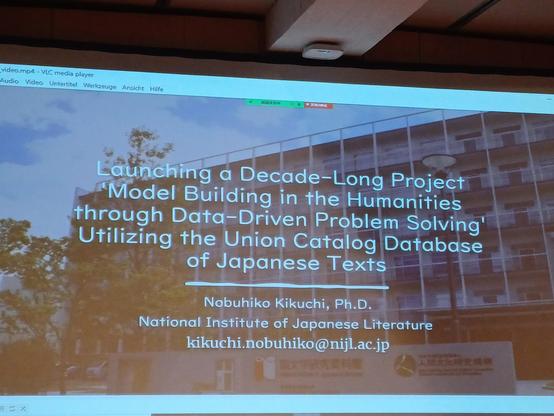

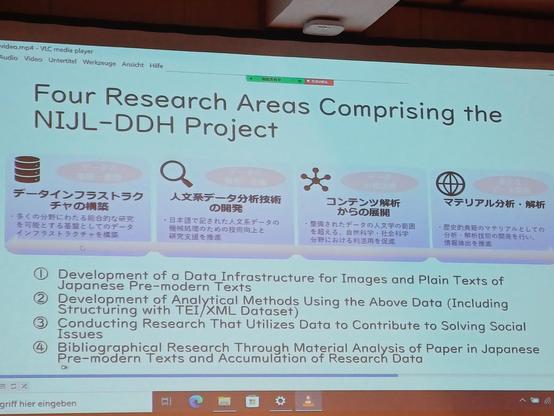

He urges: datasets need to become reusable (XML TEI), not constantly recreated - and models to be published with code!

#ChartingDSEA

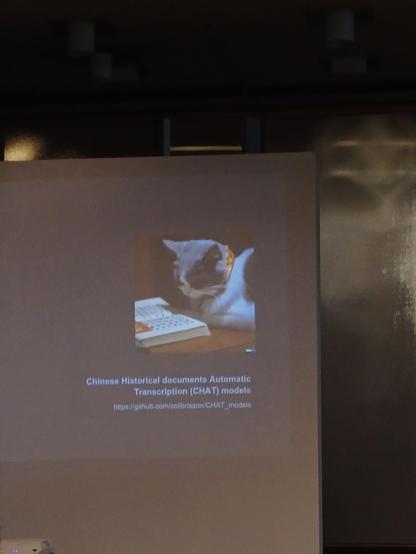

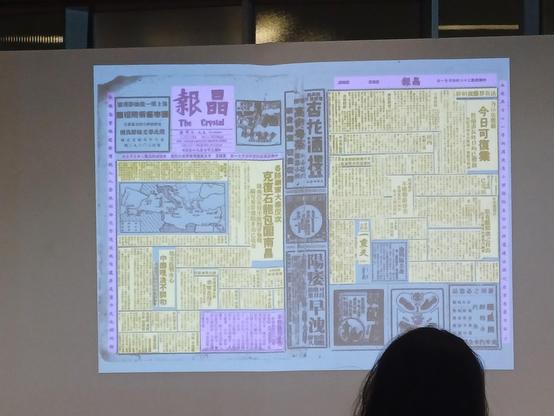

And the last speaker on the #ChartingDSEA OCR panel: Matthias Arnold on "Towards fulltext of Republican Chinese newspapers" - how can segmentation work in complex newspaper layouts? Crowdsourcing, machine learning, annotation, OCR classification as processes for recognising registers and contents.

~ "Ground truths" essential for working with neural networks! ~