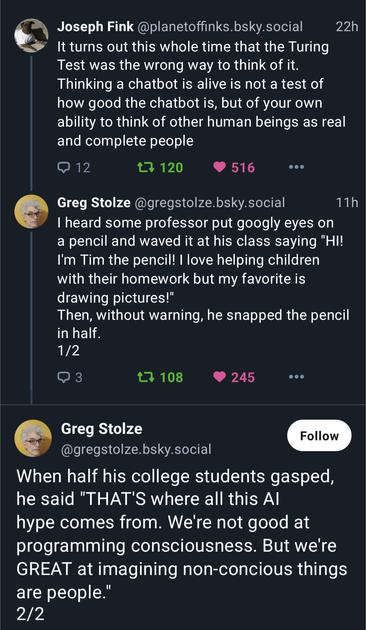

Conversely, we're also very good at imagining/constructing conscious people and entities as non-conscious/less-conscious, making it very easy to abjectify large numbers of people because our imaginary conception of their existence is separated and devalued from our own. That's often how marginalisation happens.

Yeah, this is a good point.

I've heard it argued that this is a common theme in transphobia and other fascist-adjacent movements. Transphobes love unborn foetuses and very young children because they can imagine them as being virtuous upholders of any values they project onto them. However, as soon as that child gets old enough to be able to express their own feelings, then those feelings will probably be different from what was projected onto them, and the transphobe reacts with horror that their child has been "stolen from them."

AIUI, the Turing test came out of Alan Turing worrying that these computation devices being built might become sentient (not unreasonable, given what was known at the time). The crux of the test is that if it passes, we *must* give it human rights unless we can prove it's not sentient.

So when an "AI" company tries to create general AI and then sell it, they are in fact trying to recreate slavery.

@pawsplay while he is right...

... poor Tim. He only wanted to be helpful.

@pawsplay That incident with the professor comes from the first episode of the TV series "Community", back in 2009:

Community - A Pencil Named Steve

@pawsplay we're great at projecting our own humanity and recognizing its reflection

generally we're poor at recognizing and accepting the humanity of others

if anything, our minds process that as uncanny and "wrong" in the manner of a distorted photo, and compel us to error-check and dismantle everything that fails to reassure us by looking as we expect—to dismiss whatever doesn't resemble the reflection we're used to seeing as, at best, a mistake

inhuman and fictional constructs are easy; we can see ourselves in them just fine, ignore whatever doesn't fit, and we usually don't have to worry about them arguing back

But also Turing test: Every time a machine or animal does something new, we move the goalposts on what it means to be human.

We cope so hard because we just *have* to be special. It's a trip watching that slowly collapse.

Maybe being uncomfortable with reality will be our new Turing test! 😀

I still hate chatbots because right now they are tools of capitalism. But I do get it.

https://wyatt8740.gitlab.io/site/blog/007_008.html#bubblegum-review-1

Look at the many, many photos of power sockets, lawn chairs and washing machines in which we recognise facial expressions. We are absolutely fantastic at recognising ourselves in things that aren't anything like us. At recognising emotions in inanimate objects. At imagining complex motivations in random events.

@pawsplay Daniel Dennett (RIP) calls this "the intentional stance" & he wrote an entire book about it. We take the intentional stance when we ascribe intent & purpose to the behavior of something outside ourselves (Dennett himself takes things a step further & believes that we also apply it to ourselves, but that's another story).

"The Mind's I" that he edited with Hofstadter contains a couple of chapters that touch deeply on this idea, including a mechanical tortoise that wails when damaged.

A popsicle stick, though, that I can get behind.

Animism is Normative Consciousness - The Emerald

For 98% of human history, 99.9% of our ancestors lived, breathed, and interacted with a world that they saw and felt to be animate. Imbued with lifeforce. Inhabited by and permeated with forces, with which we exist in ongoing relation. This animat...

@pawsplay @mynameistillian @nyrath Yep! GPT-style AI passing the Turing Test sometimes doesn't mean that GPT-style AI is sapient and worthy of personhood or being anything except a (flawed) tool.

It means that the Turing Test is twaddle.

@pawsplay

Omigosh! That’s awful!

I did a lesson with year 11 students (16-17) where they had to choose a potato out of a bag, look at it, then put it back into a small bag for their group, then retrieve it. Each time, the group sizes increased, it took longer to find their potato, & audible sighs of relief were heard. At the end they were allowed to draw on their potato, & every student chose to give them a face.

This was a mainstream class.

@pawsplay I feel like this is really illustrative of how many people feel regarding "AI".

Kind of reminds me of the Google LaMDA incident