If you push it, it gives a masterclass in how to be right, and then wrong, and then right for the wrong reasons …

I especially like this bit:

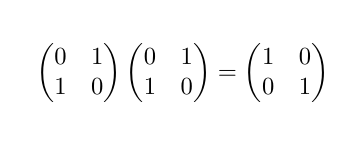

“However, there's a special case for matrices with a determinant of 0. If the off-diagonal elements are all equal to 1, the inverse still exists and is simply the original matrix itself.”
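For anyone who wants to check the quoted claim rather than take it on faith: since det(AB) = det(A)·det(B), a matrix with determinant 0 can never have an inverse, whatever its off-diagonal entries are. A minimal sketch in plain Python, using a 2×2 all-ones matrix as the counterexample (the matrix and the helper names `det2` and `matmul2` are my own choices for illustration, not anything from the screenshot):

```python
# Counterexample to the quoted claim: a singular 2x2 matrix whose
# off-diagonal entries are all equal to 1.
A = [[1, 1],
     [1, 1]]

IDENTITY = [[1, 0],
            [0, 1]]


def det2(m):
    """Determinant of a 2x2 matrix given as nested lists."""
    return m[0][0] * m[1][1] - m[0][1] * m[1][0]


def matmul2(x, y):
    """Product of two 2x2 matrices given as nested lists."""
    return [[sum(x[i][k] * y[k][j] for k in range(2)) for j in range(2)]
            for i in range(2)]


det_A = det2(A)                         # 1*1 - 1*1 = 0, so A is singular
A_squared = matmul2(A, A)               # [[2, 2], [2, 2]]
is_own_inverse = A_squared == IDENTITY  # would be True if A were its own inverse

print(det_A, A_squared, is_own_inverse)
```

If the matrix really were its own inverse, `A_squared` would be the identity; it is not.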

Absolutely! But the boosters are in denial about that, and churn out endless excuses for the LLMs: “Humans make mistakes too!” “You gave it the wrong prompt!” “This will all be fixed in the next iteration!”

@gregeganSF @ProfKinyon I think people probably will discover some good uses for this technology. But... not this.

The output still sounds like a student who paid enough attention in class to learn the terminology but didn't do any of the homework.

A useful exercise when asking LLMs any fact-based question is to reply with something like "That is wrong. Please give the correct answer." (Saying "please" may be more correlated with training data that complies with the request, while using rude language may generate an argument.) Quite often the LLM will "admit" or "apologize" for the wrong (or right!) answer and give a different one.

@gregeganSF @mattmcirvin @ProfKinyon They call it "hallucinations" which makes it sound like a glitch that just shows up now and then, rather than an LLM's core function. Their one job is "If a response to this prompt appeared in your training data, guess what it would be". It's the same algorithm that produces correct answers and hallucinations.

And (with the caveat that introspection does not tell us how the brain really works): that's not how I think when I understand something. That's how I think when I'm bluffing, when I have to write about something I don't understand.

@robinadams @gregeganSF @ProfKinyon It's possible that you could train this kind of neural network to do better, but it wouldn't be via the "LLM" route of just letting it loose on a giant corpus of data. You might have to actually teach it, like a human--give it some kind of lived experience.

I am not recommending that anyone try this, mind you. But I don't think there will be a lot of effort put into it by the people who are funding this stuff, anyway, because it makes the whole process labor-intensive and obviously unprofitable. We have enough trouble educating humans.

And maybe that wouldn't even work, because it seems like LLMs only get as good as they are by being exposed to a larger corpus than a human being ever encounters. Which implies to me that they're not inherently as good at learning as we are (probably not a surprise, we have an evolutionary head start of many millions of years).

DeepMind trained a similar type of model with only correct proofs in Euclidean geometry in the training data (which they generated randomly), and it became quite good at suggesting auxiliary constructions in geometry.

https://www.nature.com/articles/s41586-023-06747-5

This is not entirely relevant, as it doesn't do full mathematical reasoning, but I think it shows that "all the text on the internet" might not be the best data source for all uses.

@gregeganSF @ProfKinyon Asking ChatGPT to multiply two three-digit numbers and show its working is a fun one. It will very often get the answer right; first and last couple of digits are easy to do via pattern learning. But the working along the way will be nonsense that could never have produced the correct answer.

Useful as a way to show people that just because LLMs can pretend to show how they got to an answer doesn't mean that's actually what they did.
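One way to make that check mechanical: compute the schoolbook partial products yourself and compare them with whatever "working" the model printed. This is only a sketch for non-negative integers; the function names `long_multiply` and `working_is_consistent` are made up for illustration, and the "claimed" values in the example are invented, not real ChatGPT output.

```python
def long_multiply(a, b):
    """Schoolbook multiplication: one partial product per digit of b.

    Returns (partial_products, total), with partial products listed from
    the units digit of b upward. Assumes a and b are non-negative integers.
    """
    partials = []
    for place, digit_char in enumerate(reversed(str(b))):
        partials.append(a * int(digit_char) * 10 ** place)
    return partials, sum(partials)


def working_is_consistent(a, b, claimed_partials, claimed_answer):
    """True only if both the claimed working and the claimed answer are right."""
    partials, total = long_multiply(a, b)
    return claimed_partials == partials and claimed_answer == total


partials, total = long_multiply(123, 456)
print(partials, total)

# Right final answer, but one invented partial product is wrong:
print(working_is_consistent(123, 456, [738, 6100, 49200], 56088))
```

The second call is the interesting case: the final answer matches, but the working could not have produced it.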

I think this property is true for upper or lower triangular matrices, because there the det is the product of the diagonal entries, which are the eigenvalues, if my memory serves me correctly.

@ProfKinyon

It's basically a Markov process on tokens. The theory is from Charles Dodgson: take care of the sounds, and the sense will take care of itself. Suitable for giving financial advice, for example.

Shannon wrote about this in 1948, and gave examples. Lacking a large database, or the computer power to work with it, he randomly took words out of books on his shelves to get the right transition probabilities.
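To give a feel for the kind of word-level approximation Shannon describes, here is a toy first-order Markov chain on words. It is a sketch of the general idea only, far cruder than an LLM; the sample sentence, the function names and the fixed seed are all my own assumptions for illustration.

```python
import random
from collections import defaultdict


def build_transitions(text):
    """Map each word to the list of words that follow it in the text."""
    words = text.split()
    transitions = defaultdict(list)
    for current, following in zip(words, words[1:]):
        transitions[current].append(following)
    return transitions


def generate(transitions, start, length, seed=0):
    """Walk the chain from `start`, picking each next word at random
    from the followers seen in the source text."""
    rng = random.Random(seed)
    word = start
    output = [word]
    for _ in range(length - 1):
        followers = transitions.get(word)
        if not followers:  # dead end: this word was never followed by anything
            break
        word = rng.choice(followers)
        output.append(word)
    return " ".join(output)


SAMPLE = "take care of the sounds and the sense will take care of itself"
table = build_transitions(SAMPLE)
generated = generate(table, "take", 12)
print(generated)
```

Every pair of neighbouring words in the output occurred somewhere in the source, which is all the "sense" this process has.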

The LLM will play chess too. Not legally, but according to its own lights.

I think AI will be good in domains where some small error is tolerable. Math is not one of those domains, unless one is dealing with just numerical approximations in computations.

Maybe no "other" matrix, that is.