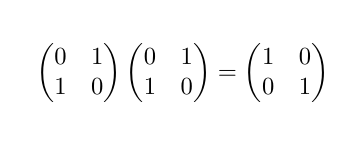

"A matrix with zeros on the diagonal and positive numbers elsewhere is called a zero-diagonal matrix. Zero-diagonal matrices are not invertible. This means that there is no matrix that can be multiplied by the zero-diagonal matrix to produce the identity matrix." -- Google's AI

@ProfKinyon Good grief, how does it churn out this nonsense? I really doubt there was anything quite so horribly wrong in its training data, but I guess it must be taking a correct discussion of the non-invertibility of diagonalised matrices with at least one zero on the diagonal and garbling it, while blending the result into something smoothly grammatical, utterly confident, and totally wrong.
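For anyone who wants to check the claim rather than take it on trust, here is a minimal sketch in plain Python. The helper names `matmul` and `identity` and the example matrices are illustrative choices, not anything from the quoted answer. It shows two matrices with zeros on the diagonal and positive numbers elsewhere that do have inverses, and, for contrast, a diagonal matrix with a zero on its diagonal, which is the case that genuinely is non-invertible.

```python
from fractions import Fraction


def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [
        [sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
        for i in range(len(a))
    ]


def identity(n):
    """Return the n-by-n identity matrix."""
    return [[1 if i == j else 0 for j in range(n)] for i in range(n)]


# 2x2 case: zeros on the diagonal, positive entries elsewhere.
# This matrix swaps the two coordinates, so it is its own inverse.
A = [[0, 1],
     [1, 0]]
A_times_A = matmul(A, A)

# 3x3 case: again zero diagonal, ones elsewhere.
# Its inverse has -1/2 on the diagonal and 1/2 elsewhere.
half = Fraction(1, 2)
B = [[0, 1, 1],
     [1, 0, 1],
     [1, 1, 0]]
B_inv = [[-half, half, half],
         [half, -half, half],
         [half, half, -half]]
B_times_B_inv = matmul(B, B_inv)

# Contrast: a *diagonal* matrix with a zero on its diagonal.
# Its determinant is the product of the diagonal entries, hence 0,
# so this one really has no inverse.
D = [[1, 0],
     [0, 0]]
det_D = D[0][0] * D[1][1] - D[0][1] * D[1][0]

print(A_times_A == identity(2))
print(B_times_B_inv == identity(3))
print(det_D)
```

Both products come out as the identity matrix, so the quoted statement fails already at size 2. Exact fractions are used instead of floats so the comparison with the identity is not affected by rounding.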

@gregeganSF @ProfKinyon I think this kind of language model is simply incapable of capturing the logical connections involved in doing real mathematics. Wrong arguments can sound as plausible as correct ones, to the level of plausibility it's capable of achieving.

Absolutely! But the boosters are in denial about that, and churn out endless excuses for the LLMs: “Humans make mistakes too!” “You gave it the wrong prompt!” “This will all be fixed in the next iteration!”

@gregeganSF @ProfKinyon I think people probably will discover some good uses for this technology. But... not this.

The output still sounds like a student who paid enough attention in class to learn the terminology but didn't do any of the homework.

@mattmcirvin @gregeganSF @ProfKinyon I think this is currently the perfect example of why you can't trust any output of an LLM to be right -- they're good at right-sounding answers, but not at determining whether an answer is actually right or completely made up.